GITNUX SOFTWARE ADVICE

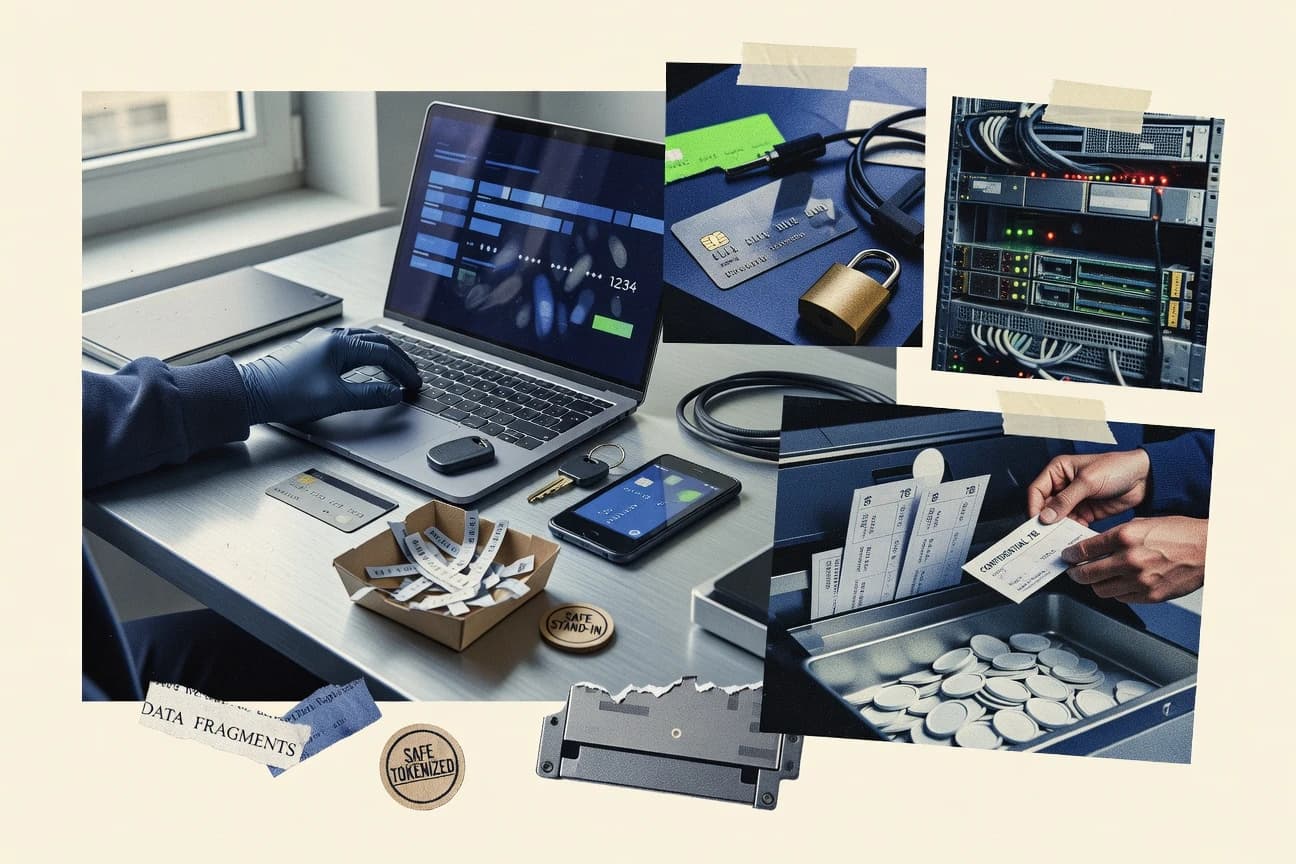

Top 10 Best Data Tokenization Software of 2026

Discover the top data tokenization software solutions to protect sensitive data.

How we ranked these tools

Core product claims cross-referenced against official documentation, changelogs, and independent technical reviews.

Analyzed video reviews and hundreds of written evaluations to capture real-world user experiences with each tool.

AI persona simulations modeled how different user types would experience each tool across common use cases and workflows.

Final rankings reviewed and approved by our editorial team with authority to override AI-generated scores based on domain expertise.

Score: Features 40% · Ease 30% · Value 30%

Gitnux may earn a commission through links on this page; this does not influence rankings.

Editor picks

Three quick recommendations before the full comparison below, each leading on a different dimension.

TokenEx

Format-preserving tokenization for maintaining downstream data compatibility

Built for enterprises modernizing payment and sensitive-data systems without major schema changes.

Entrust nShield Connect

HSM-backed nShield Connect tokenization using hardware key protection and controlled key policies

Built for enterprises tokenizing regulated identifiers needing HSM-backed security and format compatibility.

Thales CipherTrust

CipherTrust Manager-driven tokenization policies tied to centralized key management

Built for large enterprises standardizing tokenization and key governance across many systems.

Comparison Table

This comparison table covers data tokenization and related encryption platforms, including TokenEx, Entrust nShield Connect, Thales CipherTrust, Protegrity, and IBM Guardium Data Encryption and Tokenization. It lists each tool's category, a one-line description, and its overall, features, ease-of-use, and value scores. The detailed reviews below describe how each tool handles token generation, key management, format preservation, deployment, and integration with databases and applications, so readers can map capabilities to specific use cases.

| # | Tool | Description | Category | Overall | Features | Ease of Use | Value |
|---|---|---|---|---|---|---|---|
| 1 | TokenEx | Provides format-preserving tokenization and vault services to protect sensitive data such as payment card details and PII across enterprise and digital channels. | enterprise tokenization | 8.6/10 | 9.0/10 | 8.0/10 | 8.8/10 |
| 2 | Entrust nShield Connect | Supports secure tokenization architectures by providing HSM-backed key management used to protect tokenization keys and cryptographic workflows. | HSM-backed tokenization | 8.0/10 | 8.5/10 | 7.4/10 | 7.9/10 |
| 3 | Thales CipherTrust | Enables data protection and key management capabilities that integrate with tokenization deployments to secure sensitive information at rest and in transit. | enterprise data protection | 8.1/10 | 8.7/10 | 7.4/10 | 7.9/10 |
| 4 | Protegrity | Provides tokenization and format-preserving protection with an enterprise vault to reduce exposure of sensitive fields in applications and data stores. | data-centric tokenization | 7.9/10 | 8.6/10 | 7.2/10 | 7.8/10 |
| 5 | IBM Guardium Data Encryption and Tokenization | Delivers data encryption and tokenization controls that help protect sensitive data while enforcing access and monitoring policies. | DLP-adjacent tokenization | 7.7/10 | 8.2/10 | 7.1/10 | 7.5/10 |
| 6 | Google Cloud Data Loss Prevention with tokenization workflows | Supports detection and protection workflows that can integrate tokenization patterns to minimize cleartext exposure for sensitive data. | cloud security workflows | 8.0/10 | 8.4/10 | 7.4/10 | 8.0/10 |
| 7 | Microsoft Purview | Provides data discovery and classification capabilities plus protection workflows that can support tokenization strategies for sensitive data governance. | governance-integrated protection | 7.7/10 | 8.0/10 | 6.9/10 | 8.2/10 |
| 8 | AWS Encryption SDK | Offers client-side encryption and key wrapping primitives that commonly underpin tokenization systems by protecting tokens and associated keys. | encryption building blocks | 8.0/10 | 8.4/10 | 7.5/10 | 7.9/10 |
| 9 | Oracle Cloud Infrastructure Vault | Provides managed vault services for storing cryptographic secrets used to secure tokenization keys and encryption material. | vault and key management | 7.2/10 | 7.6/10 | 6.7/10 | 7.0/10 |
| 10 | Amazon Aurora encryption integration | Supports encryption for data at rest in managed databases used in tokenization pipelines, reducing exposure of sensitive artifacts. | managed database protection | 7.1/10 | 7.3/10 | 7.0/10 | 6.9/10 |

TokenEx

Category: enterprise tokenization

Provides format-preserving tokenization and vault services to protect sensitive data such as payment card details and PII across enterprise and digital channels.

Format-preserving tokenization for maintaining downstream data compatibility

TokenEx stands out for enterprise tokenization that covers both payment card data and other sensitive data, such as PII, through centralized policy controls. The platform provides token vault capabilities that separate stored tokens from source data, reducing exposure during processing and storage. It also supports encryption, format preservation, and workflow features that help maintain downstream application compatibility while routing sensitive fields to tokenization and detokenization services.

Pros

- Centralized token vault design limits access to original sensitive data

- Supports encryption plus tokenization for layered protection workflows

- Format-preserving tokenization helps keep legacy integrations working

- Detokenization controls support controlled retrieval paths

- Enterprise deployment patterns fit high-volume transaction processing

Cons

- Integration work can be substantial for complex application field mapping

- Workflow tuning may require specialist configuration and testing

- Operational ownership is needed to manage policies and vault access

Best For

Enterprises modernizing payment and sensitive-data systems without major schema changes

Entrust nShield Connect

Category: HSM-backed tokenization

Supports secure tokenization architectures by providing HSM-backed key management used to protect tokenization keys and cryptographic workflows.

HSM-backed nShield Connect tokenization using hardware key protection and controlled key policies

Entrust nShield Connect stands out for combining an HSM-backed trust boundary with tokenization services that use nShield key material for cryptographic operations. It supports format-preserving tokenization patterns for payment and regulated data so downstream systems can store tokens while keeping original values protected. Integration focuses on connecting applications to secure key services rather than pushing encryption logic into every client. Operationally, it emphasizes policy-controlled key usage and auditable cryptographic workflows tied to the HSM environment.

Pros

- HSM-grounded tokenization with strong key isolation via nShield hardware

- Format-preserving tokenization supports compatible schemas for legacy storage

- Centralized cryptographic policy and auditable key usage reduce client complexity

Cons

- Deployment and integration require infrastructure and security engineering

- Token lifecycle operations can be operationally heavy across multiple systems

- Use case fit depends on maintaining token mappings and access controls

Best For

Enterprises tokenizing regulated identifiers needing HSM-backed security and format compatibility

Thales CipherTrust

Category: enterprise data protection

Enables data protection and key management capabilities that integrate with tokenization deployments to secure sensitive information at rest and in transit.

CipherTrust Manager-driven tokenization policies tied to centralized key management

Thales CipherTrust stands out for offering tokenization integrated with enterprise key management through CipherTrust Manager. It supports format-preserving and detokenization workflows for protecting sensitive data across databases, applications, and data stores. Centralized key control and policy-based access help enforce consistent protection across environments. Deployment supports both on-premises and cloud patterns, with integrations aimed at enterprise data pipelines.

Pros

- Centralized key management with policy control via CipherTrust Manager

- Format-preserving tokenization respects existing data constraints and limits downstream changes

- Detokenization workflows support authorized recovery for regulated use cases

Cons

- Enterprise configuration and integration require specialized implementation effort

- Fine-grained tokenization coverage can add complexity across many data sources

- Operational tuning for performance and consistency adds ongoing administration work

Best For

Large enterprises standardizing tokenization and key governance across many systems

Protegrity

Category: data-centric tokenization

Provides tokenization and format-preserving protection with an enterprise vault to reduce exposure of sensitive fields in applications and data stores.

Centralized policy management that governs tokenization scope, access, and detokenization

Protegrity focuses on enterprise-grade data tokenization and encryption across structured and unstructured data stores. The platform supports format-preserving tokenization and controlled detokenization for authorized systems. Centralized policy controls define where sensitive data is tokenized, how keys are managed, and which applications can access detokenization.

Pros

- Enterprise policy-based tokenization with centralized control across applications

- Strong key and access governance for tokenization and detokenization workflows

- Format-preserving tokenization supports compatibility with downstream systems

Cons

- Deployment complexity is higher when covering multiple data stores and apps

- Operational setup and tuning can require significant security and data engineering effort

- Advanced workflows depend on correct integration of tokenization and detokenization services

Best For

Large enterprises needing governed tokenization across sensitive data systems

IBM Guardium Data Encryption and Tokenization

Category: DLP-adjacent tokenization

Delivers data encryption and tokenization controls that help protect sensitive data while enforcing access and monitoring policies.

Guardium-integrated tokenization policy and key governance for controlled token lifecycle

IBM Guardium Data Encryption and Tokenization focuses on protecting structured data with tokenization tightly integrated with broader Guardium data security workflows. It supports tokenization and format-preserving behaviors for common databases and applications, plus encryption for data at rest and in motion through Guardium deployments. The solution is designed to reduce exposure by substituting sensitive values with tokens that can be managed consistently across systems. It also aligns with enterprise governance needs by leveraging centralized key and policy control patterns commonly used in Guardium environments.

Pros

- Strong integration with Guardium security workflows for token lifecycle governance

- Tokenization designed to support consistent protection across database and application access paths

- Centralized policy and key management patterns fit enterprise security operating models

Cons

- Deployment and tuning inside Guardium environments can require specialized security engineering

- Tokenization can complicate application testing when formats and lookups must match expectations

- Best outcomes depend on accurate discovery of sensitive fields and end-to-end data flows

Best For

Enterprises using Guardium that need governed tokenization across critical database workloads

Google Cloud Data Loss Prevention with tokenization workflows

Category: cloud security workflows

Supports detection and protection workflows that can integrate tokenization patterns to minimize cleartext exposure for sensitive data.

DLP tokenization transformation actions driven by inspection of sensitive data

Google Cloud Data Loss Prevention stands out with integrated tokenization workflows built for protecting sensitive data across Google Cloud and supported hybrid environments. It supports discovery, inspection, and transformation so sensitive fields can be replaced with tokens before data lands in storage or downstream systems. Tokenization can be driven by content inspection policies and applied consistently across sources that feed into Dataflow and storage destinations. Strong auditing and incident reporting help teams trace where protected data was detected and how it was transformed.

Pros

- Tokenization workflows connect with inspection policies and transformations in one DLP system

- Strong support for discovery, classification, and action enforcement for sensitive data

- Audit trails and findings support governance and incident analysis across processing stages

Cons

- Tokenization requires careful pipeline design to ensure consistent schemas and replacements

- Complex policy tuning is needed to reduce false positives and missed findings

- Operational setup across services takes time compared with single-product tokenization tools

Best For

Enterprises securing structured and semi-structured data with policy-driven tokenization

Microsoft Purview

Category: governance-integrated protection

Provides data discovery and classification capabilities plus protection workflows that can support tokenization strategies for sensitive data governance.

Information Protection and DLP integration that ties classification to protection actions

Microsoft Purview stands out for combining governance, risk, and compliance with built-in data discovery across Microsoft 365 and Azure workloads. Its data classification and policy enforcement capabilities support tokenization workflows through integrated information protection and data management features. Purview also ties together monitoring and audit evidence so tokenized data handling can be reviewed alongside broader compliance controls. Tokenization coverage is strongest when data sources and processing are already standardized in the Purview-supported ecosystem.

Pros

- Unified classification and governance policies across Microsoft workloads

- Auditing and reporting that connect token handling to compliance evidence

- Works well for tokenization programs driven by standardized data inventories

Cons

- Tokenization specifics are less direct than dedicated tokenization products

- Setup and governance tuning require skilled administration to avoid noisy results

- Cross-platform tokenization beyond Purview-aligned sources is limited

Best For

Enterprises needing governance-led tokenization across Microsoft 365 and Azure

AWS Encryption SDK

Category: encryption building blocks

Offers client-side encryption and key wrapping primitives that commonly underpin tokenization systems by protecting tokens and associated keys.

Envelope encryption of messages using a configurable keyring and AWS KMS integration

AWS Encryption SDK focuses on data encryption and decryption that fit into existing applications without forcing a token format. It is a client-side cryptography library that wraps and unwraps data keys using AWS KMS through a keyring abstraction. It encrypts arbitrary byte data, including streams, through envelope encryption and built-in primitives.
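The envelope pattern itself can be sketched in a few lines. The code below is not the AWS Encryption SDK API: it reproduces envelope encryption locally with AES-GCM from the third-party `cryptography` package, and a locally generated key-encryption key (KEK) stands in for an AWS KMS key (an assumption for illustration). The hypothetical `rewrap` helper shows why rotation stays manageable in this model: rotating the KEK re-encrypts only the small data key, not the bulk ciphertext.

```python
import os

from cryptography.hazmat.primitives.ciphers.aead import AESGCM


def envelope_encrypt(plaintext: bytes, kek: bytes) -> dict[str, bytes]:
    """Encrypt data under a fresh data key, then wrap that data key under the KEK."""
    data_key = AESGCM.generate_key(bit_length=256)
    data_nonce = os.urandom(12)  # 96-bit nonce, the recommended size for AES-GCM
    ciphertext = AESGCM(data_key).encrypt(data_nonce, plaintext, None)
    wrap_nonce = os.urandom(12)
    wrapped_key = AESGCM(kek).encrypt(wrap_nonce, data_key, None)
    # Only the wrapped data key is stored with the ciphertext; the KEK stays with the key service.
    return {"ciphertext": ciphertext, "data_nonce": data_nonce,
            "wrapped_key": wrapped_key, "wrap_nonce": wrap_nonce}


def _unwrap(envelope: dict[str, bytes], kek: bytes) -> bytes:
    """Recover the data key by decrypting it with the KEK."""
    return AESGCM(kek).decrypt(envelope["wrap_nonce"], envelope["wrapped_key"], None)


def envelope_decrypt(envelope: dict[str, bytes], kek: bytes) -> bytes:
    data_key = _unwrap(envelope, kek)
    return AESGCM(data_key).decrypt(envelope["data_nonce"], envelope["ciphertext"], None)


def rewrap(envelope: dict[str, bytes], old_kek: bytes, new_kek: bytes) -> dict[str, bytes]:
    """Rotate the KEK by re-wrapping only the data key; the bulk ciphertext is unchanged."""
    data_key = _unwrap(envelope, old_kek)
    wrap_nonce = os.urandom(12)
    return {**envelope, "wrapped_key": AESGCM(new_kek).encrypt(wrap_nonce, data_key, None),
            "wrap_nonce": wrap_nonce}


kek = AESGCM.generate_key(bit_length=256)  # stand-in for a KMS-held key
env = envelope_encrypt(b"4111111111111111", kek)
print(envelope_decrypt(env, kek))  # b'4111111111111111'
```

Real envelope formats also bind metadata such as key identifiers and an encryption context into the message; that detail is omitted here.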

Pros

- Client-side envelope encryption with AWS KMS key wrapping

- Keyring abstraction supports multiple master keys and discovery patterns

- Can protect individual field values by encrypting each one as a separate message

Cons

- Requires cryptography and key management knowledge to configure safely

- Operational complexity increases when rotating keys or migrating formats

- Not a dedicated tokenization product with token lifecycle workflows

Best For

Teams that need encryption-based protection, rather than vault tokenization, on AWS data paths

Oracle Cloud Infrastructure Vault

Category: vault and key management

Provides managed vault services for storing cryptographic secrets used to secure tokenization keys and encryption material.

OCI Vault key management with HSM-backed protections and policy-enforced access

Oracle Cloud Infrastructure Vault stands out by centering key management for tokenization workflows on OCI security primitives such as HSM-backed key services. It supports data protection workflows that integrate with OCI services so encryption and tokenization can be enforced at the storage and application layers. Operational controls such as access policies and audit trails help govern who can use keys and perform tokenization-related operations. Tokenization is most effective when applications run within, or tightly integrate with, OCI components.

Pros

- HSM-backed key protection strengthens the trust chain for tokenization workflows

- Tight OCI integration supports consistent encryption and token handling across services

- Granular IAM policies and audit logs improve governance and traceability

Cons

- Best results require OCI-centric architecture and service integration

- Configuring governance and key policies can be complex for app teams

- Tokenization setup lacks the turnkey developer experience of specialist tokenization products

Best For

Enterprises standardizing security controls on OCI for governed tokenization

Amazon Aurora encryption integration

Category: managed database protection

Supports encryption for data at rest in managed databases used in tokenization pipelines, reducing exposure of sensitive artifacts.

Use of AWS KMS customer-managed keys for Aurora at-rest encryption

Amazon Aurora encryption integration brings database-level encryption options that help protect data at rest and during storage operations. It supports AWS Key Management Service key management for controlling who can use which keys and how long access should persist. For tokenization-focused use cases, it pairs well with external tokenization workflows because Aurora encryption alone does not provide format-preserving tokenization or reversible token vault APIs.
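Because at-rest encryption is transparent to database clients, anyone with query access still reads plaintext, so tokenization has to happen in application code before the row is written. The sketch below uses Python's built-in `sqlite3` in-memory databases as stand-ins for Aurora and for a separate vault store (an assumption for illustration); the table, column, and function names are hypothetical, and authorization checks are omitted.

```python
import secrets
import sqlite3

# Two separate stores: the application database (stand-in for Aurora) only ever
# sees tokens; the vault database holds the token-to-original mapping.
app_db = sqlite3.connect(":memory:")
vault_db = sqlite3.connect(":memory:")
app_db.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, card_token TEXT)")
vault_db.execute("CREATE TABLE tokens (token TEXT PRIMARY KEY, original TEXT NOT NULL)")


def tokenize(value: str) -> str:
    """External tokenization step that runs before the row reaches the database."""
    token = "tok_" + secrets.token_hex(8)
    vault_db.execute("INSERT INTO tokens (token, original) VALUES (?, ?)", (token, value))
    vault_db.commit()
    return token


def insert_customer(name: str, card_number: str) -> int:
    token = tokenize(card_number)
    cur = app_db.execute("INSERT INTO customers (name, card_token) VALUES (?, ?)", (name, token))
    app_db.commit()
    return cur.lastrowid


def card_for(customer_id: int) -> str:
    """Detokenize by looking up the token in the vault store (authorization omitted)."""
    (token,) = app_db.execute(
        "SELECT card_token FROM customers WHERE id = ?", (customer_id,)).fetchone()
    (original,) = vault_db.execute(
        "SELECT original FROM tokens WHERE token = ?", (token,)).fetchone()
    return original


cid = insert_customer("Jane Doe", "4111111111111111")
print(card_for(cid))  # 4111111111111111
```

Keeping the mapping in a separate store is the point of the design: a dump of the application database exposes only tokens, even though Aurora would decrypt its own storage for any authorized query.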

Pros

- Integrates with KMS for customer-managed keys and access control

- Encrypts data at rest in Aurora storage volumes

- Works cleanly in standard Aurora operational workflows

Cons

- Provides encryption, not tokenization or token vault functionality

- Requires external logic to generate, store, and map tokens to originals

- Key rotation and migration planning can add operational overhead

Best For

Teams securing Aurora databases and pairing external tokenization workflows

Conclusion

After evaluating 10 data tokenization tools, TokenEx stands out as our overall top pick: it scored highest across our combined criteria of features, ease of use, and value, which is why it sits at #1 in the rankings above.

Use the comparison table and detailed reviews above to validate the fit against your own requirements before committing to a tool.

How to Choose the Right Data Tokenization Software

This buyer's guide explains how to evaluate data tokenization software for protecting sensitive fields like payment card data and PII across enterprise systems. Coverage includes TokenEx, Entrust nShield Connect, Thales CipherTrust, Protegrity, IBM Guardium Data Encryption and Tokenization, Google Cloud Data Loss Prevention with tokenization workflows, Microsoft Purview, AWS Encryption SDK, Oracle Cloud Infrastructure Vault, and Amazon Aurora encryption integration. The guide focuses on choosing solutions that preserve application compatibility, centralize key and policy governance, and support controlled detokenization paths.

What Is Data Tokenization Software?

Data tokenization software replaces sensitive values with tokens so downstream storage and applications handle protected data instead of cleartext. Many solutions also provide detokenization workflows that enforce authorized recovery paths for regulated use cases. TokenEx and Protegrity both emphasize format-preserving tokenization that keeps legacy schemas compatible while sensitive fields route through tokenization and detokenization services. Entrust nShield Connect and Thales CipherTrust extend tokenization with HSM-backed or enterprise key management using controlled policies for auditable cryptographic operations.

Key Features to Look For

Feature fit determines whether tokenization works cleanly in production without breaking application behavior or creating unsafe detokenization access paths.

Format-preserving tokenization for compatibility

Format-preserving tokenization keeps downstream data constraints working without requiring major application schema changes. TokenEx is built around format-preserving tokenization for maintaining legacy integration compatibility. Entrust nShield Connect and Thales CipherTrust also support format-preserving patterns to keep regulated data usable for compatible storage and processing.
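As a rough illustration of the vault-style variant, the sketch below generates a random token for a card number that keeps the original length, the digits-only format, and the last four digits. The function name and the keep-last-four rule are assumptions for illustration, not any listed vendor's scheme. Some products instead use format-preserving encryption (such as NIST FF1), and some force tokens to fail the Luhn check so they cannot be mistaken for real card numbers; this sketch omits both.

```python
import secrets


def format_preserving_token(pan: str, keep_last: int = 4) -> str:
    """Return a random digits-only token with the same length as `pan`.

    The last `keep_last` digits are copied through so screens and matching
    logic that rely on them keep working. The random part has no mathematical
    link to the original, so recovery is only possible through a vault lookup.
    """
    if not pan.isdigit():
        raise ValueError("expected a digits-only value")
    if not 0 <= keep_last < len(pan):
        raise ValueError("keep_last must leave at least one digit to randomize")
    suffix = pan[len(pan) - keep_last:]
    while True:
        prefix = "".join(secrets.choice("0123456789") for _ in range(len(pan) - keep_last))
        token = prefix + suffix
        if token != pan:  # never hand back the original value as its own token
            return token


token = format_preserving_token("4111111111111111")
print(len(token), token[-4:])  # 16 1111
```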

Centralized token vault or controlled token storage model

Token vault designs separate stored tokens from original sensitive source data to reduce exposure during processing and storage. TokenEx uses a centralized token vault approach that limits access to original sensitive data during tokenized workflows. Protegrity provides an enterprise vault model that governs where sensitive fields are tokenized and how detokenization is authorized.
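A minimal in-memory sketch of the vault idea follows. The `TokenVault` class is hypothetical: it issues opaque tokens, reuses the same token for a repeated value, and is the only place where the original can be looked up. Its tokens are not format-preserving; a format-preserving generator could replace `secrets.token_hex` where downstream schemas require it.

```python
import secrets


class TokenVault:
    """Hypothetical in-memory token vault, for illustration only.

    Originals are held only inside the vault; callers work with opaque tokens.
    A production vault would be a hardened, encrypted, access-controlled store,
    and its reverse index would use a keyed hash rather than raw values.
    """

    def __init__(self) -> None:
        self._token_to_value: dict[str, str] = {}
        self._value_to_token: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Return the existing token so one value always maps to one token.
        existing = self._value_to_token.get(value)
        if existing is not None:
            return existing
        token = "tok_" + secrets.token_hex(8)
        while token in self._token_to_value:  # regenerate on the rare collision
            token = "tok_" + secrets.token_hex(8)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # Raises KeyError for tokens the vault never issued.
        return self._token_to_value[token]


vault = TokenVault()
t = vault.tokenize("jane.doe@example.com")
print(t == vault.tokenize("jane.doe@example.com"))  # True
print(vault.detokenize(t))  # jane.doe@example.com
```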

HSM-backed key isolation and hardware-trust boundaries

HSM-backed key isolation strengthens the trust boundary for tokenization cryptographic workflows. Entrust nShield Connect centers tokenization around nShield hardware key protection and controlled cryptographic key policies. Oracle Cloud Infrastructure Vault provides OCI Vault key management with HSM-backed protections and policy-enforced access that fits OCI-centric architectures.
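Python cannot reproduce hardware isolation, but the shape of the boundary can be sketched: a hypothetical `KeyService` generates and keeps its key internally and exposes only keyed operations, so applications receive tokens without ever holding key material. This sketch derives deterministic, one-way tokens with HMAC-SHA-256 from the standard library. In a real deployment the keyed operation would run inside the HSM, and Python name-mangling is a convention, not a security control.

```python
import hashlib
import hmac
import secrets


class KeyService:
    """Hypothetical software stand-in for an HSM-backed key service.

    The key is generated internally and never returned to callers; only the
    results of keyed operations leave the object. A real HSM enforces this in
    tamper-resistant hardware, which plain Python cannot do.
    """

    def __init__(self) -> None:
        self.__key = secrets.token_bytes(32)  # name-mangled by convention, not protected

    def keyed_token(self, value: str) -> str:
        # HMAC-SHA-256 is deterministic: the same value under the same key always
        # yields the same token, so joins and lookups on tokens keep working.
        mac = hmac.new(self.__key, value.encode("utf-8"), hashlib.sha256)
        return mac.hexdigest()[:32]  # truncated to 128 bits for a shorter token


svc = KeyService()
print(svc.keyed_token("123-45-6789") == svc.keyed_token("123-45-6789"))  # True
```

Because HMAC tokens are one-way, this pattern suits matching and joining on tokens; recovering originals still requires a vault or reversible format-preserving encryption.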

Centralized key management and policy-controlled token usage

Centralized key and policy control enforces consistent protection across environments and reduces per-application cryptography burden. Thales CipherTrust uses CipherTrust Manager-driven tokenization policies tied to centralized key management. IBM Guardium Data Encryption and Tokenization aligns tokenization policy and key governance with Guardium security operating workflows for controlled token lifecycle management.
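Centralizing policy means each application asks one authority whether an operation is allowed instead of embedding its own rules. A minimal default-deny sketch follows, assuming a hypothetical policy table keyed by application and data class; the application names and data classes are invented for illustration, and CipherTrust Manager and Guardium each define policies in their own formats.

```python
from enum import Enum


class Op(Enum):
    TOKENIZE = "tokenize"
    DETOKENIZE = "detokenize"


# Hypothetical central policy: (application, data class) -> allowed operations.
# Any combination not listed here is denied by default.
POLICY: dict[tuple[str, str], frozenset[Op]] = {
    ("checkout-service", "pan"): frozenset({Op.TOKENIZE}),
    ("refund-service", "pan"): frozenset({Op.TOKENIZE, Op.DETOKENIZE}),
    ("analytics", "email"): frozenset({Op.TOKENIZE}),
}


def is_allowed(app: str, data_class: str, op: Op) -> bool:
    """Default-deny lookup against the central policy table."""
    return op in POLICY.get((app, data_class), frozenset())


print(is_allowed("refund-service", "pan", Op.DETOKENIZE))    # True
print(is_allowed("checkout-service", "pan", Op.DETOKENIZE))  # False
print(is_allowed("unknown-app", "pan", Op.TOKENIZE))         # False
```

Default-deny is the safer design choice here: a new application or data class gets no access until someone adds it to the policy deliberately.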

Detokenization workflows with authorized recovery paths

Detokenization needs tight authorization controls so only approved systems can recover original values when required. TokenEx provides detokenization controls that restrict retrieval to authorized paths. Protegrity and Thales CipherTrust both emphasize controlled detokenization workflows tied to governance and policy enforcement.
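The sketch below adds an authorization gate and an audit trail to the detokenization path. `AuthorizedVault`, the caller names, and the audit record layout are hypothetical; a real deployment would authenticate callers (for example with mutual TLS or signed service identities) rather than trust a caller string.

```python
import secrets
from datetime import datetime, timezone


class AuthorizedVault:
    """Hypothetical vault whose detokenize path checks an allow-list and audits every attempt."""

    def __init__(self, detokenize_allowed: set[str]) -> None:
        self._store: dict[str, str] = {}
        self._allowed = frozenset(detokenize_allowed)
        # Each entry: (UTC timestamp, caller, token, allowed?)
        self.audit_log: list[tuple[str, str, str, bool]] = []

    def tokenize(self, value: str) -> str:
        token = "tok_" + secrets.token_hex(8)
        while token in self._store:  # regenerate on the rare collision
            token = "tok_" + secrets.token_hex(8)
        self._store[token] = value
        return token

    def detokenize(self, token: str, caller: str) -> str:
        allowed = caller in self._allowed
        # Log before enforcing, so denied attempts leave evidence too.
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), caller, token, allowed))
        if not allowed:
            raise PermissionError(f"{caller} is not authorized to detokenize")
        return self._store[token]


vault = AuthorizedVault({"refund-service"})
t = vault.tokenize("4111111111111111")
print(vault.detokenize(t, "refund-service"))  # 4111111111111111
try:
    vault.detokenize(t, "analytics")
except PermissionError as err:
    print(err)  # analytics is not authorized to detokenize
```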

Integrated discovery, inspection, and protection actions

Discovery and inspection reduce tokenization gaps by driving tokenization transformations based on detected sensitive content. Google Cloud Data Loss Prevention with tokenization workflows applies tokenization transformations driven by inspection policies and supports consistent replacements across sources. Microsoft Purview ties information protection and DLP actions to classification and audit evidence so token handling is reviewed alongside compliance controls.

Step-by-Step Selection

Choosing the right solution requires mapping tokenization requirements to the way each tool handles compatibility, key governance, and detokenization access control.

Validate schema and integration compatibility needs

If legacy systems require the same data shape, prioritize format-preserving tokenization. TokenEx is designed for enterprises modernizing payment and sensitive-data systems without major schema changes by keeping downstream data constraints intact. Entrust nShield Connect, Thales CipherTrust, and Protegrity also support format-preserving tokenization patterns for compatible schemas.

Match key custody and hardware trust requirements

Regulated environments that require strong key isolation should evaluate HSM-backed tokenization. Entrust nShield Connect uses nShield hardware key material for cryptographic operations inside a controlled trust boundary. Thales CipherTrust centralizes key control through CipherTrust Manager policies, while Oracle Cloud Infrastructure Vault provides OCI Vault with HSM-backed protections for OCI-centric deployments.

Align token lifecycle governance with existing security operations

Tokenization works best when it plugs into existing operational controls for auditing, policy enforcement, and lifecycle management. IBM Guardium Data Encryption and Tokenization integrates tokenization and key governance into Guardium data security workflows for controlled token lifecycle management. TokenEx also emphasizes centralized policy controls and a vault model that supports enterprise governance patterns across high-volume processing.

Assess how tokenization is triggered in data pipelines

If tokenization must be driven by inspection and content discovery, evaluate integrated DLP or governance workflow tools. Google Cloud Data Loss Prevention with tokenization workflows combines discovery, inspection, and transformation so sensitive fields are replaced with tokens before data lands in storage or downstream processing. Microsoft Purview supports classification and information protection enforcement that connects token handling to audit and compliance evidence.
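A generic sketch of inspection-driven tokenization follows. It is not the Google Cloud DLP or Microsoft Purview API: it uses a regular expression to find 13- to 19-digit candidates, keeps only those that pass the Luhn checksum used by payment card numbers, and swaps each match for an opaque token before the text is stored. The function names and the plain dictionary used as a vault are hypothetical; production detectors combine many more signals to limit false positives.

```python
import re
import secrets

# Candidate card numbers: 13-19 digits, optionally separated by single spaces or hyphens.
CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right and sum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def tokenize_findings(text: str, vault: dict[str, str]) -> str:
    """Replace Luhn-valid card numbers in free text with opaque tokens before storage."""
    def replace(match: re.Match) -> str:
        digits = re.sub(r"[ -]", "", match.group())
        if not luhn_valid(digits):
            return match.group()  # leave non-card digit runs untouched
        token = "tok_" + secrets.token_hex(8)
        vault[token] = digits
        return token

    return CANDIDATE.sub(replace, text)


vault: dict[str, str] = {}
# Only the Luhn-valid card number is replaced; the order number fails the check.
print(tokenize_findings("Card 4111 1111 1111 1111 on file; order 4111111111111112.", vault))
```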

Confirm detokenization control model and operational ownership

The detokenization access pattern is a deciding factor for controlled recovery. TokenEx provides detokenization controls that define authorized retrieval paths, and Protegrity governs which applications can access detokenization. Thales CipherTrust also supports authorized detokenization workflows tied to centralized key governance, while AWS Encryption SDK focuses on encryption primitives and message-level envelope encryption rather than token vault detokenization workflows.

Who Needs Data Tokenization Software?

Different teams need different tokenization models based on compliance drivers, system architecture, and where sensitive data is discovered and transformed.

Enterprises modernizing payment and sensitive-data systems without major schema changes

TokenEx fits this scenario because it delivers format-preserving tokenization plus a centralized token vault model that limits access to original sensitive data. The platform also routes sensitive fields to tokenization and detokenization services, which reduces breakage in legacy integrations.

Enterprises tokenizing regulated identifiers that require HSM-backed security and format compatibility

Entrust nShield Connect is a strong match because it uses nShield hardware key protection and controlled key policies for tokenization cryptographic workflows. It also supports format-preserving tokenization patterns that keep regulated identifiers compatible with downstream storage schemas.

Large enterprises standardizing tokenization and key governance across many systems

Thales CipherTrust aligns with this need through CipherTrust Manager-driven tokenization policies tied to centralized key management. It supports consistent protection across databases, applications, and data stores with authorized detokenization workflows.

Large enterprises needing governed tokenization across sensitive data systems and apps

Protegrity targets governed tokenization with centralized policy management that controls tokenization scope, access, and detokenization authorization. It is built for enterprise deployment that governs where sensitive fields are tokenized and which applications can recover originals.

Common Mistakes to Avoid

Tokenization failures usually come from mismatched architecture expectations, insufficient governance ownership, or treating encryption as if it were token vault detokenization.

Choosing encryption-only primitives without a token lifecycle workflow

AWS Encryption SDK provides envelope encryption with AWS KMS key wrapping and a keyring abstraction, but it does not deliver a dedicated token vault and detokenization lifecycle like TokenEx or Protegrity. Similarly, Aurora encryption integration only encrypts data at rest in managed database storage; generating tokens and mapping them to originals requires external logic.

Underestimating integration and field-mapping effort for complex applications

TokenEx can require substantial integration work for complex application field mapping, and Protegrity can require significant security and data engineering effort across multiple data stores and apps. Thales CipherTrust and IBM Guardium Data Encryption and Tokenization also require specialized implementation and tuning effort to cover many data sources or Guardium workflows end-to-end.

Building tokenization without a clear detokenization authorization model

Detokenization needs explicit access controls. TokenEx and Thales CipherTrust define authorized recovery paths tied to policy and key governance, and Protegrity governs which applications can access detokenization. Tools that only apply protection actions leave detokenization ownership unclear unless it is designed explicitly.

Relying on policy-driven detection without planning pipeline consistency

Google Cloud Data Loss Prevention tokenization workflows require careful pipeline design so schemas and replacements stay consistent across sources and destinations. Microsoft Purview tokenization strategies depend on standardized data inventories and skilled governance tuning to avoid noisy results and cross-platform limitations.
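Schema drift of this kind can be caught with a pipeline check that compares records before and after tokenization. The helper below is a hypothetical sketch, not a feature of Google Cloud DLP or Purview: it flags dropped or added columns and, for fields expected to be format-preserving, any change in length or loss of the digits-only format.

```python
def check_schema_consistency(original: dict[str, str], tokenized: dict[str, str],
                             length_preserving: set[str]) -> list[str]:
    """Compare one record before and after tokenization and list any problems found.

    Flags added or dropped columns and, for fields expected to be
    format-preserving, any change in length or loss of the digits-only format.
    """
    problems: list[str] = []
    before_cols, after_cols = set(original), set(tokenized)
    if before_cols != after_cols:
        problems.append(f"column mismatch: {sorted(before_cols ^ after_cols)}")
    for field in sorted(length_preserving & before_cols & after_cols):
        before, after = original[field], tokenized[field]
        if len(before) != len(after):
            problems.append(f"{field}: length {len(before)} -> {len(after)}")
        if before.isdigit() and not after.isdigit():
            problems.append(f"{field}: digits-only format lost")
    return problems


record = {"name": "Jane", "pan": "4111111111111111", "zip": "94105"}
ok = {"name": "Jane", "pan": "8302957712341111", "zip": "94105"}
print(check_schema_consistency(record, ok, {"pan"}))  # []
print(check_schema_consistency(record, {"name": "Jane", "pan": "tok_1a2b3c"}, {"pan"}))
```

Running a check like this on a sample of records at each pipeline stage surfaces inconsistent replacements before they reach downstream schemas.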

How We Selected and Ranked These Tools

We scored every tool on three sub-dimensions (features, ease of use, and value) and combined them as overall = 0.40 × features + 0.30 × ease of use + 0.30 × value. TokenEx separated from lower-ranked tools mainly through its feature strength in format-preserving tokenization combined with a centralized token vault design that limits access to original sensitive data while keeping downstream compatibility. Lower-ranked products like Amazon Aurora encryption integration focused on at-rest encryption with external token generation and mapping needs, which reduced tokenization workflow completeness in the features dimension.
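The weighting can be checked against the comparison table. The sketch below recomputes each overall score from the published sub-scores using exact decimal arithmetic; rounding half-up to one decimal place is an assumption, since the methodology does not state a rounding rule.

```python
from decimal import ROUND_HALF_UP, Decimal

WEIGHTS = (Decimal("0.40"), Decimal("0.30"), Decimal("0.30"))  # features, ease of use, value


def overall(features: str, ease_of_use: str, value: str) -> Decimal:
    """Weighted overall score, rounded to one decimal place (half-up is an assumption)."""
    subscores = (Decimal(features), Decimal(ease_of_use), Decimal(value))
    raw = sum(w * s for w, s in zip(WEIGHTS, subscores))
    return raw.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


# (features, ease of use, value, published overall), copied from the comparison table.
TABLE = {
    "TokenEx": ("9.0", "8.0", "8.8", "8.6"),
    "Entrust nShield Connect": ("8.5", "7.4", "7.9", "8.0"),
    "Thales CipherTrust": ("8.7", "7.4", "7.9", "8.1"),
    "Protegrity": ("8.6", "7.2", "7.8", "7.9"),
    "IBM Guardium Data Encryption and Tokenization": ("8.2", "7.1", "7.5", "7.7"),
    "Google Cloud Data Loss Prevention": ("8.4", "7.4", "8.0", "8.0"),
    "Microsoft Purview": ("8.0", "6.9", "8.2", "7.7"),
    "AWS Encryption SDK": ("8.4", "7.5", "7.9", "8.0"),
    "Oracle Cloud Infrastructure Vault": ("7.6", "6.7", "7.0", "7.2"),
    "Amazon Aurora encryption integration": ("7.3", "7.0", "6.9", "7.1"),
}

for tool, (f, e, v, published) in TABLE.items():
    print(f"{tool}: computed {overall(f, e, v)}, published {published}")
```

With these inputs, every recomputed value matches the published Overall column, and TokenEx (0.40 × 9.0 + 0.30 × 8.0 + 0.30 × 8.8 = 8.64, shown as 8.6) has the highest score.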

Frequently Asked Questions About Data Tokenization Software

How do TokenEx and Protegrity differ in token storage and detokenization control?

TokenEx separates stored tokens from source data with token vault capabilities, which reduces exposure during processing and storage. Protegrity uses centralized policy controls to define where sensitive fields are tokenized, how keys are managed, and which applications can access detokenization.
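A minimal sketch of the token vault idea, assuming an in-memory store and random tokens. Unlike ciphertext, a vault token has no mathematical relationship to the original value, so systems that only hold tokens cannot recover the data on their own. `TokenVault` and its methods are hypothetical names, not the TokenEx or Protegrity API, and a real vault would add persistence, encryption at rest, and access control around `detokenize`.

```python
import secrets


class TokenVault:
    """In-memory vault: random tokens go out, original values stay inside."""

    def __init__(self) -> None:
        self._token_to_value: dict[str, str] = {}
        self._value_to_token: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so one value always maps to one token.
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = "tok_" + secrets.token_hex(8)
        while token in self._token_to_value:  # guard against a rare random collision
            token = "tok_" + secrets.token_hex(8)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # Only the vault holds the mapping back to the original value.
        return self._token_to_value[token]


vault = TokenVault()
token = vault.tokenize("4111111111111111")
print(vault.detokenize(token))  # 4111111111111111
```

Downstream databases and logs would store only the `tok_...` value, which is what reduces exposure during processing and storage.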

Which platform best fits payments and regulated data when format-preserving tokens must stay compatible with downstream systems?

TokenEx supports format-preserving tokenization designed to maintain downstream application compatibility while routing sensitive fields through tokenization and detokenization services. Entrust nShield Connect also emphasizes format-preserving tokenization for payment and regulated data while tying cryptographic operations to nShield key material.
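Format preservation means the token keeps the shape of the original, for example the same length, the same separators, and often the last four digits, so existing schemas and validation rules keep working. The sketch below shows only that shaping step, assuming random digit substitution; in a vault-based design the generated token would also be stored next to the original so it can be detokenized later. `format_preserving_token` is a hypothetical helper, not a TokenEx or Entrust API, and it is not format-preserving encryption such as NIST FF1.

```python
import secrets
import string


def format_preserving_token(value: str, keep_last: int = 4) -> str:
    """Swap digits for random digits, keeping length, separators, and the last `keep_last` digits."""
    digit_positions = [i for i, ch in enumerate(value) if ch in string.digits]
    # Guard keep_last == 0, because a slice like [-0:] would keep every digit.
    keep = set(digit_positions[-keep_last:]) if keep_last > 0 else set()
    out = []
    for i, ch in enumerate(value):
        if ch in string.digits and i not in keep:
            out.append(str(secrets.randbelow(10)))  # random replacement digit
        else:
            out.append(ch)  # separators and kept digits pass through unchanged
    return "".join(out)


token = format_preserving_token("4111-1111-1111-1234")
print(token)  # random digits in the same 4-4-4-4 layout, still ending in 1234
```

A downstream column typed as a 19-character card field, or a receipt that prints the last four digits, can accept this token without schema changes.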

What makes CipherTrust and nShield Connect stand out for HSM-backed trust boundaries?

Entrust nShield Connect uses an HSM-backed trust boundary and performs cryptographic operations using nShield key material under auditable key policies. Thales CipherTrust centralizes key control through CipherTrust Manager and enforces policy-based access for consistent protection across environments.

Which solution supports tokenization across databases and applications with centralized key governance at enterprise scale?

Thales CipherTrust is built to standardize tokenization and key governance across many systems through CipherTrust Manager-driven policies and enterprise workflows. Protegrity similarly provides governed tokenization across sensitive data systems using centralized policy management for tokenization scope, access, and detokenization.

How do IBM Guardium Data Encryption and Tokenization and the AWS Encryption SDK each handle encryption workflows inside existing systems?

IBM Guardium Data Encryption and Tokenization integrates tokenization into Guardium data security workflows and supports format-preserving behavior for common database and application patterns. The AWS Encryption SDK focuses on client-side envelope encryption using AWS KMS and a keyring abstraction, which avoids forcing a dedicated token format.
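The envelope pattern can be sketched in a few lines: a fresh data key encrypts the payload, a master key wraps the data key, and the wrapped key travels with the ciphertext. This is a structural sketch only. It assumes a toy XOR stream built on SHAKE-256 in place of a real AEAD such as AES-GCM, so it provides no integrity protection and must not be used for real data. In the AWS Encryption SDK the keyring performs the wrap and unwrap with AWS KMS; `Envelope`, `encrypt`, and `decrypt` here are hypothetical names, not the SDK's API.

```python
import hashlib
import os
from dataclasses import dataclass


def _toy_stream_xor(key: bytes, nonce: bytes, data: bytes) -> bytes:
    # TOY cipher for illustration only: XOR with a SHAKE-256 keystream.
    # Real envelope encryption uses an AEAD such as AES-GCM. Do not use this.
    keystream = hashlib.shake_256(key + nonce).digest(len(data))
    return bytes(a ^ b for a, b in zip(data, keystream))


@dataclass
class Envelope:
    wrapped_data_key: bytes  # data key encrypted under the master key
    key_nonce: bytes
    data_nonce: bytes
    ciphertext: bytes


def encrypt(master_key: bytes, plaintext: bytes) -> Envelope:
    data_key = os.urandom(32)  # fresh data key per message
    key_nonce, data_nonce = os.urandom(16), os.urandom(16)
    ciphertext = _toy_stream_xor(data_key, data_nonce, plaintext)
    wrapped = _toy_stream_xor(master_key, key_nonce, data_key)  # "wrap" the data key
    return Envelope(wrapped, key_nonce, data_nonce, ciphertext)


def decrypt(master_key: bytes, env: Envelope) -> bytes:
    data_key = _toy_stream_xor(master_key, env.key_nonce, env.wrapped_data_key)  # "unwrap"
    return _toy_stream_xor(data_key, env.data_nonce, env.ciphertext)


master = os.urandom(32)  # stands in for a KMS-held key that never leaves the service
envelope = encrypt(master, b"card=4111111111111111")
print(decrypt(master, envelope))  # b'card=4111111111111111'
```

Only the small data key ever touches the master key, which is why this pattern scales to large or streaming payloads without sending the payload itself to the key service.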

Which tools are better suited for policy-driven tokenization transformations triggered by sensitive data discovery?

Google Cloud Data Loss Prevention uses discovery and inspection to drive tokenization transformation actions before data lands in storage or downstream systems. Microsoft Purview ties data classification and DLP enforcement to protection actions, so tokenization workflows can be applied consistently to classified sources across Microsoft 365 and Azure.
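The inspect-then-transform flow can be sketched with two steps: detectors find sensitive spans, then a transformation replaces each finding before the text moves downstream. This sketch assumes simple regular expressions as stand-ins for a DLP service's built-in detectors and replaces findings with an info-type label; a tokenization transform would substitute a token at the same step. `DETECTORS`, `inspect_text`, and `deidentify` are hypothetical names, not the Google Cloud DLP or Microsoft Purview API, and the card pattern has no Luhn check, so it will produce false positives on other long digit runs.

```python
import re

# Hypothetical detectors standing in for a DLP service's built-in info types.
DETECTORS = {
    "EMAIL_ADDRESS": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD_NUMBER": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def inspect_text(text: str) -> list[tuple[str, int, int]]:
    """Discovery step: return (info_type, start, end) findings ordered by position."""
    findings = []
    for info_type, pattern in DETECTORS.items():
        for match in pattern.finditer(text):
            findings.append((info_type, match.start(), match.end()))
    return sorted(findings, key=lambda f: f[1])


def deidentify(text: str) -> str:
    """Transformation step: replace findings right to left so earlier offsets stay valid."""
    # Assumes findings do not overlap, which holds for these two detectors.
    for info_type, start, end in reversed(inspect_text(text)):
        text = text[:start] + f"[{info_type}]" + text[end:]
    return text


print(deidentify("Contact jane@example.com, card 4111 1111 1111 1111."))
# Contact [EMAIL_ADDRESS], card [CARD_NUMBER].
```

Running the transformation at ingestion, before data reaches storage, is what keeps the raw values out of downstream systems in this kind of policy-driven workflow.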

What integration model best supports streaming or application messaging when the goal is reversible protection without a strict token-vault API?

The AWS Encryption SDK supports streaming-oriented encryption patterns using envelope keys and built-in primitives that wrap and unwrap data keys. TokenEx and Protegrity focus on token vault and detokenization service workflows, which fit applications that can route through centralized tokenization endpoints.

Why does Oracle Cloud Infrastructure Vault matter when the requirement includes auditable access policies tied to key operations?

Oracle Cloud Infrastructure Vault centers key management on OCI security primitives like HSM-backed key services and enforces access policies with audit trails for tokenization-related operations. This approach is most effective when applications run within or tightly integrate with OCI services to keep tokenization aligned with key usage controls.

When should teams avoid relying on Amazon Aurora encryption alone and instead use external tokenization?

Amazon Aurora encryption integration provides at-rest and storage-operation protection with AWS KMS key management, but it does not provide format-preserving tokenization or reversible token vault APIs. TokenEx, Protegrity, or Thales CipherTrust can supply tokenization and detokenization workflows that maintain data compatibility and enable controlled token lifecycle operations.

