Top 10 Best Data Preparation Software of 2026

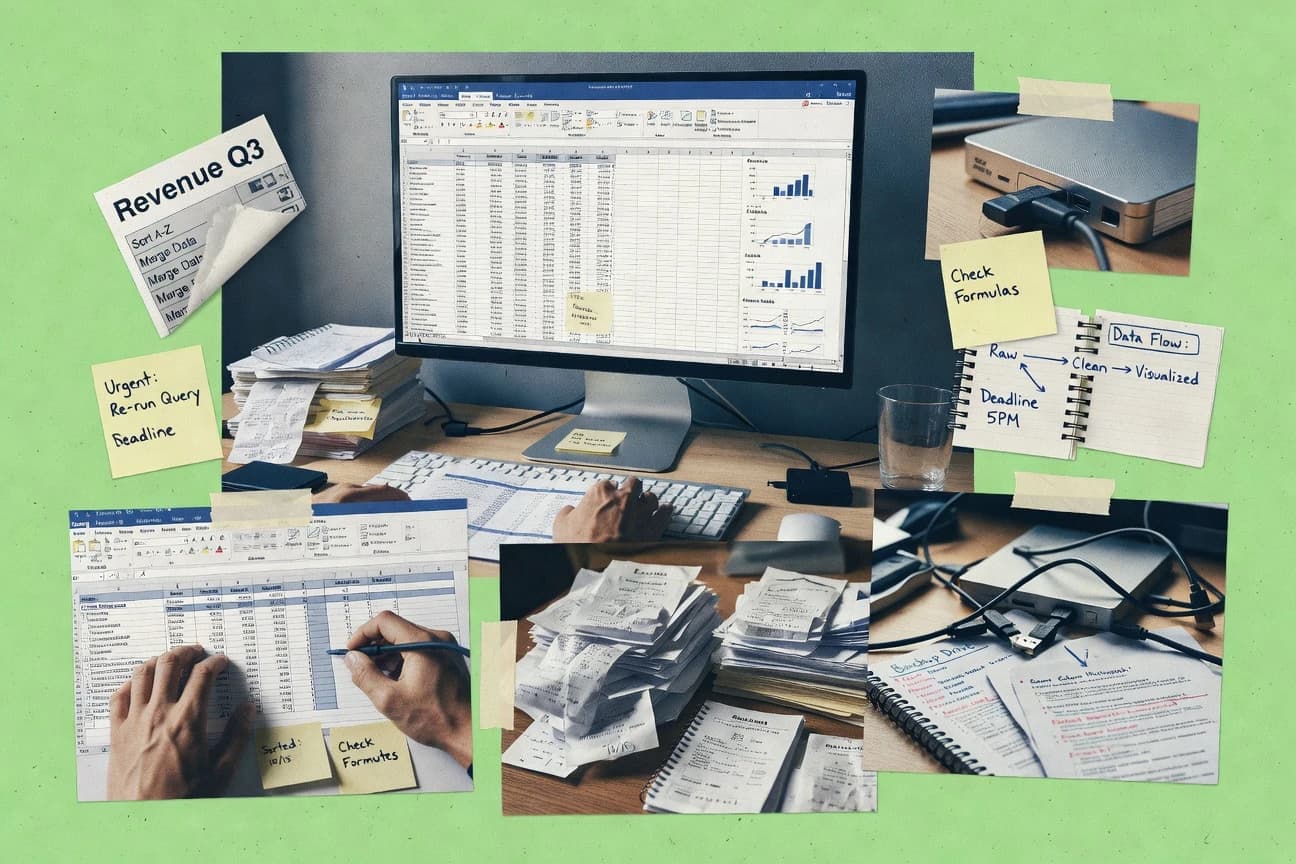

Find the best data prep tools to streamline your workflows. Compare features and pick the right one for efficient data preparation.

How we ranked these tools

Core product claims cross-referenced against official documentation, changelogs, and independent technical reviews.

Analyzed video reviews and hundreds of written evaluations to capture real-world user experiences with each tool.

AI persona simulations modeled how different user types would experience each tool across common use cases and workflows.

Final rankings reviewed and approved by our editorial team with authority to override AI-generated scores based on domain expertise.

Score: Features 40% · Ease 30% · Value 30%
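The weighting can be read as a weighted average of the three sub-scores. The sketch below is a minimal illustration that assumes the sub-scores combine linearly, which the page does not state explicitly; the function name `weighted_score` is hypothetical.

```python
# Weights taken from the scoring line: Features 40%, Ease 30%, Value 30%.
WEIGHTS = {"features": 0.40, "ease": 0.30, "value": 0.30}


def weighted_score(features: float, ease: float, value: float) -> float:
    """Combine three 0-10 sub-scores into one weighted average.

    Assumption: a plain linear combination, rounded to two decimals.
    """
    total = (
        WEIGHTS["features"] * features
        + WEIGHTS["ease"] * ease
        + WEIGHTS["value"] * value
    )
    return round(total, 2)


# Sub-scores listed for Trifacta in the comparison table.
trifacta = weighted_score(features=9.4, ease=8.7, value=8.3)
print(trifacta)
```

With the Trifacta sub-scores from the comparison table (9.4, 8.7, 8.3), a plain weighted average gives 8.86 rather than the listed 9.2 Overall, so the published figure may also reflect the editorial adjustments described above.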

Gitnux may earn a commission through links on this page; this does not influence rankings.

Editor picks

Three quick recommendations before you dive into the full comparison below — each one leads on a different dimension.

Trifacta

Smart parsing and transformation suggestions that generate reusable recipes from interactive edits

Built for teams standardizing messy tabular data with interactive, repeatable transformation workflows.

Alteryx

Fuzzy matching and record linkage for deduplication and entity resolution inside visual workflows

Built for analytics and data teams standardizing complex data preparation without extensive coding.

Dataiku

Prepare recipes with visual transformation steps plus dataset lineage and data quality checks

Built for data science teams needing governed visual prep and feature engineering pipelines.

Comparison Table

This comparison table contrasts leading data preparation tools such as Trifacta, Alteryx, Dataiku, Microsoft Azure Data Factory, and AWS Glue across core capabilities like data profiling, transformation, automation, and orchestration. Use it to compare how each platform supports visual and code-based workflows, integrates with data sources and warehouses, and fits into repeatable pipelines for cleaning and shaping analytics-ready datasets.

| # | Tool | Description | Category | Overall | Features | Ease of Use | Value |
|---|---|---|---|---|---|---|---|
| 1 | Trifacta | Trifacta Wrangler uses interactive transformations and AI-assisted suggestions to clean, standardize, and prepare data for analytics and machine learning. | AI-assisted | 9.2/10 | 9.4/10 | 8.7/10 | 8.3/10 |
| 2 | Alteryx | Alteryx Designer provides a visual drag-and-drop workflow for data blending, cleansing, and preparation across spreadsheets, databases, and files. | visual workflow | 8.6/10 | 9.2/10 | 7.8/10 | 7.9/10 |
| 3 | Dataiku | Dataiku prepares data with recipe-based cleaning, transformations, and governance features that support end-to-end analytics and machine learning. | data engineering | 8.6/10 | 9.3/10 | 7.9/10 | 7.8/10 |
| 4 | Microsoft Azure Data Factory | Azure Data Factory orchestrates data ingestion and transformation pipelines using mapping data flows to support data preparation at scale. | ETL orchestration | 8.2/10 | 8.8/10 | 7.4/10 | 7.8/10 |
| 5 | AWS Glue | AWS Glue uses Spark-based ETL jobs and Glue Data Catalog capabilities to automate data preparation from multiple sources. | serverless ETL | 8.3/10 | 9.0/10 | 7.6/10 | 8.0/10 |
| 6 | Google Cloud Dataflow | Google Cloud Dataflow runs data processing pipelines for streaming and batch preparation with Apache Beam transforms. | pipeline processing | 7.4/10 | 8.5/10 | 6.8/10 | 6.9/10 |
| 7 | Apache Spark | Apache Spark enables high-performance data preparation using distributed transformations for cleansing, reshaping, and feature engineering. | open-source engine | 7.4/10 | 8.6/10 | 6.8/10 | 7.8/10 |
| 8 | dbt Core | dbt Core uses SQL-based transformations and testing to prepare analytics-ready datasets with version control and lineage. | SQL transformations | 7.4/10 | 8.1/10 | 7.0/10 | 7.6/10 |
| 9 | Keboola | Keboola provides a modular data preparation workspace that connects to sources, transforms data, and publishes outputs to destinations. | cloud workspace | 7.6/10 | 8.3/10 | 7.2/10 | 7.0/10 |
| 10 | Mage AI | Mage AI is an open-source data preparation platform that builds and schedules pipelines for transforming data into analytics-ready datasets. | open-source pipelines | 6.8/10 | 7.2/10 | 6.4/10 | 7.1/10 |

Trifacta

AI-assisted

Trifacta Wrangler uses interactive transformations and AI-assisted suggestions to clean, standardize, and prepare data for analytics and machine learning.

Smart parsing and transformation suggestions that generate reusable recipes from interactive edits

Trifacta stands out for its interactive, visualization-driven approach to data wrangling that turns transformations into reusable steps. It provides recipe-based transformation workflows for cleaning, standardizing, and shaping tabular data before analytics or loading into warehouses and lakes. Its pattern recognition and “smart” suggestions speed up common cleanup tasks like parsing, normalization, and column type and value adjustments. The platform also supports governance-style controls through workspaces, user permissions, and auditability of transformation logic.

Pros

- Interactive wrangling UI shows transformations against sample data instantly

- Recipe-based workflows make repeatable data cleaning and shaping straightforward

- Pattern-based suggestions accelerate parsing, normalization, and standardization tasks

- Lineage-style visibility helps track transformation steps across datasets

- Supports collaboration via controlled workspaces and role-based access

Cons

- Advanced outcomes often require tuning transformation logic and rules

- Setup and governance configuration can add overhead for small teams

- Best results depend on data profiling quality and representative samples

Best For

Teams standardizing messy tabular data with interactive, repeatable transformation workflows

Alteryx

visual workflow

Alteryx Designer provides a visual drag-and-drop workflow for data blending, cleansing, and preparation across spreadsheets, databases, and files.

Fuzzy matching and record linkage for deduplication and entity resolution inside visual workflows

Alteryx stands out with a visual drag-and-drop workflow builder that turns messy data prep into repeatable, documented processes. It combines data cleansing, transformation, joins, and spatial analytics in one environment with built-in connectors for common sources and targets. You can schedule and automate workflows through Alteryx Server and run them at scale with controlled permissions and governed access. Strong governance tools and macro-based reusability help teams standardize data prep across business units.

Pros

- Visual workflow designer accelerates joins, cleaning, and transformation work

- Extensive built-in tools cover parsing, fuzzy matching, and data standardization

- Macros and reusable templates reduce duplicated prep logic across projects

- Scheduling and server execution support governed automation for repeat runs

Cons

- Advanced workflows require learning many operators and configuration options

- Licensing and server costs can feel high for small teams or single users

- Collaboration and versioning can be weaker than code-first data engineering stacks

- Scaling large cloud pipelines can demand extra architecture and administration

Best For

Analytics and data teams standardizing complex data preparation without extensive coding

Dataiku

data engineering

Dataiku prepares data with recipe-based cleaning, transformations, and governance features that support end-to-end analytics and machine learning.

Prepare recipes with visual transformation steps plus dataset lineage and data quality checks

Dataiku stands out with an end-to-end visual data preparation and analytics workflow that connects to modeling and deployment. Its Prepare recipe framework supports data cleaning, feature engineering, and reproducible transformations with lineage tracking across steps. Dataiku also provides managed connectors for common sources and a collaborative project workspace for sharing datasets, schemas, and wrangling logic. Automated profiling and quality checks help teams spot issues before downstream training or reporting.

Pros

- Visual recipe-based data preparation with built-in lineage tracking

- Strong connectors for common data sources and destinations

- Automated profiling and data quality checks for early issue detection

- Collaboration features support shared datasets and reusable transformations

Cons

- Advanced projects require training to manage recipes and governance

- Compute and platform costs can rise with large datasets and automation

- Some low-level custom logic still needs external scripting

Best For

Data science teams needing governed visual prep and feature engineering pipelines

Microsoft Azure Data Factory

ETL orchestration

Azure Data Factory orchestrates data ingestion and transformation pipelines using mapping data flows to support data preparation at scale.

Mapping Data Flows for graphical, schema-aware transformations inside Azure Data Factory pipelines

Azure Data Factory stands out with its managed data orchestration that connects to many sources and targets through built-in connectors and integration runtimes. It supports visual pipeline building with activity-based transformations, scheduled triggers, and parameterized workflows for repeatable data preparation. You can orchestrate both simple copy and more advanced wrangling using mapping data flows, custom activities, and notebook-based steps. Strong governance features like lineage and monitoring help teams track data movement end to end across environments.

Pros

- Visual pipeline designer with parameterized orchestration for reusable data prep jobs

- Built-in connectors plus integration runtimes for hybrid and on-prem data movement

- Mapping data flows provide structured transformations without hand-coding ETL logic

- Lineage, monitoring, and retries make failures easier to diagnose during runs

Cons

- Configuring integration runtimes for hybrid setups can be time-consuming

- Advanced transformations can require multiple tools and careful debugging

- Cost can rise with data flow usage and frequent high-volume refresh schedules

Best For

Teams building scheduled, governed ETL and ELT pipelines across cloud and on-prem sources

AWS Glue

serverless ETL

AWS Glue uses Spark-based ETL jobs and Glue Data Catalog capabilities to automate data preparation from multiple sources.

AWS Glue Data Catalog schema and metadata management for lake-wide discovery

AWS Glue stands out for turning semi-structured data into curated datasets using serverless ETL jobs tightly integrated with the AWS data stack. It provides schema discovery, a visual job authoring experience for common transformations, and an AWS Glue Data Catalog that centralizes tables and partitions across S3-based data lakes. It also supports workflow automation via Glue workflows and can run Spark-based transformations for scalable data preparation at low operational overhead. For teams already standardized on AWS, it reduces glue-work by managing job orchestration, metadata updates, and dependency handling.

Pros

- Serverless Spark ETL jobs reduce infrastructure management overhead

- Glue Data Catalog centralizes schemas for S3 and downstream analytics tools

- Schema discovery helps generate and maintain table definitions quickly

- Glue workflows automate multi-step preparation pipelines reliably

- IAM and VPC integration fit enterprise security and network controls

Cons

- Tuning Spark performance requires expertise and iterative testing

- Debugging failed ETL tasks can be slower than local transformation tools

- Costs can rise with frequent job runs and heavy Spark workloads

- Visual authoring covers common tasks but limits advanced transformation control

- Data preparation outside AWS storage and governance needs extra wiring

Best For

AWS-first teams building serverless ETL pipelines for S3 data lakes

Google Cloud Dataflow

pipeline processing

Google Cloud Dataflow runs data processing pipelines for streaming and batch preparation with Apache Beam transforms.

Apache Beam with windowing and triggers for streaming-ready data preparation

Google Cloud Dataflow stands out for running Apache Beam pipelines on managed Google Cloud infrastructure with strong streaming and batch support. It transforms and prepares data through Beam SDKs, windowing, and exactly-once processing options for event streams. You build data preparation logic in code and deploy it to Dataflow to automate scaling, retries, and checkpointing for large datasets.

Pros

- Apache Beam support enables reusable batch and streaming data transforms

- Managed autoscaling reduces operational work for long-running pipelines

- Exactly-once options support reliable event processing for preparation steps

Cons

- Code-first development limits usability for non-developers

- Debugging distributed pipelines requires Beam and streaming operational expertise

- Cost can rise with high-throughput streaming and large shuffle operations

Best For

Teams building code-based streaming and batch preparation pipelines on GCP

Apache Spark

open-source engine

Apache Spark enables high-performance data preparation using distributed transformations for cleansing, reshaping, and feature engineering.

Structured Streaming with DataFrame operations enables continuous data preparation and transformation.

Apache Spark stands out for turning large-scale data preparation into distributed, code-driven transformations with tight integration to batch and streaming. It provides SQL, DataFrame, and Dataset APIs that support joins, aggregations, cleansing, and feature-style transformations across structured and semi-structured data. Its MLlib and structured streaming workflows let teams prepare training data pipelines that run continuously, not only as one-off jobs. Spark also integrates with common storage and metastore tools, which helps standardize data preparation across environments.

Pros

- Distributed DataFrame and SQL transformations scale for large datasets

- Structured streaming supports continuous preparation and feature generation

- Strong ecosystem integration with Hadoop, object storage, and metastore catalogs

- Unified APIs for batch and streaming reduce pipeline rewrites

Cons

- Requires engineering effort to tune partitions, shuffles, and job execution

- Limited built-in visual data prep compared to workflow-first tools

- Debugging complex Spark jobs can be slower than single-node ETL tools

Best For

Teams building code-based data preparation pipelines at scale

dbt Core

SQL transformations

dbt Core uses SQL-based transformations and testing to prepare analytics-ready datasets with version control and lineage.

Incremental models that rebuild only changed partitions or keys with deterministic SQL logic

dbt Core stands out for transforming analytics SQL into versioned, testable data models using a code-first workflow. It compiles SQL to run on warehouses like Snowflake, BigQuery, and Databricks while managing model dependencies, variables, and incremental logic. The ecosystem adds documentation, data lineage, and testing patterns through packages and tooling rather than a fully visual interface. Teams use it to standardize transformations, enforce quality checks, and scale repeatable ELT pipelines with minimal abstraction over SQL.

Pros

- SQL-first modeling with macros for reusable transformation logic

- Built-in dependency graph for correct run order across models

- Comprehensive data quality tests including unique, not-null, and custom tests

Cons

- Requires engineering practices like Git workflows and CI for reliable collaboration

- Local orchestration is limited compared with full data pipeline platforms

- Warehouse permissions and CI setup often take time for new teams

Best For

Analytics teams using SQL for ELT who want tested, versioned transformations

Keboola

cloud workspace

Keboola provides a modular data preparation workspace that connects to sources, transforms data, and publishes outputs to destinations.

Connector-based pipeline building with orchestrated transformations for repeatable data prep

Keboola stands out with a connector-driven data preparation workflow that loads, transforms, and orchestrates pipelines without forcing you into custom ETL code. You can model data in connected sources, standardize schemas with repeatable transformations, and manage jobs through a web-based orchestration layer. The platform’s strength is turning messy datasets into structured analytics-ready tables using reusable components and environment-friendly deployments. It also supports governance around where data lands and how transformations run across projects.

Pros

- Connector ecosystem accelerates ingestion from common databases and SaaS sources

- Reusable transformation blocks help standardize data cleaning across projects

- Pipeline orchestration manages scheduled and event-driven processing steps

- Project-based environments support safer deployments across stages

Cons

- Visual and connector tooling can feel heavy for small one-off prep tasks

- Complex workflows require more setup than simple spreadsheet cleaning

- Fine-grained customization often pushes work toward platform-specific conventions

Best For

Teams building repeatable ETL and data prep pipelines across multiple systems

Mage AI

open-source pipelines

Mage AI is an open-source data preparation platform that builds and schedules pipelines for transforming data into analytics-ready datasets.

Notebook-to-pipeline data transformations with Mage’s reusable block workflow

Mage AI stands out with a notebook-driven data preparation workflow that mixes Python code and visual pipelines in one place. It supports batch and real-time style orchestration for extracting, transforming, and loading data into multiple destinations. You can reuse components across projects and parameterize pipelines for repeatable data preparation runs. Built-in connectors and dataset views make it practical for cleaning data and generating analysis-ready tables.

Pros

- Notebook-first workflow for transforming data with code and pipeline context

- Reusable pipeline components support consistent preparation across datasets

- Built-in dataset views and connectors speed up data profiling

Cons

- Complex orchestration can feel harder to manage than dedicated ETL tools

- Real-time style processing setup requires more engineering than standard batch ETL

- Debugging failed pipeline runs can take time compared with lighter tools

Best For

Teams building customizable data preparation pipelines with notebook-driven transformations

Conclusion

After evaluating 10 data preparation tools, Trifacta stands out as our overall top pick: it scored highest across our combined criteria of features, ease of use, and value, which is why it sits at #1 in the rankings above.

Use the comparison table and detailed reviews above to validate the fit against your own requirements before committing to a tool.

How to Choose the Right Data Preparation Software

This buyer's guide helps you match data preparation workflows to real tool capabilities from Trifacta, Alteryx, Dataiku, Azure Data Factory, AWS Glue, Google Cloud Dataflow, Apache Spark, dbt Core, Keboola, and Mage AI. Use it to compare interactive and governed visual prep, orchestrated pipeline ETL, and code-first transformation frameworks. The sections below focus on which features matter and which team patterns fit each tool.

What Is Data Preparation Software?

Data Preparation Software transforms messy source data into analytics-ready tables or machine-learning-ready features through cleansing, normalization, reshaping, and validation. It solves problems like inconsistent column types, broken parsing rules, repeated transformation steps, and missing quality checks before downstream analytics. Teams use it for repeatable preparation runs and for lineage visibility into how output datasets are produced. Trifacta demonstrates an interactive transformation workflow for tabular wrangling, while Azure Data Factory demonstrates orchestrated pipelines with mapping data flows for scheduled and governed ETL and ELT.

Key Features to Look For

These capabilities separate tools that speed up preparation from tools that reliably scale it across teams and pipelines.

Interactive, transformation-driven wrangling

Choose tools that let you edit transformations against sample data so you can clean and standardize quickly. Trifacta uses an interactive UI where changes are visible immediately and transformation logic turns into reusable recipes, which accelerates parsing and normalization tasks.

Recipe workflows with lineage and reusable logic

Prioritize recipe-based workflows that preserve the same transformation steps across datasets and runs. Dataiku builds prepare recipes with visual transformation steps and lineage tracking, while Trifacta turns interactive edits into reusable recipes with lineage-style visibility.
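The recipe idea, an ordered list of transformation steps recorded once and replayed on new data, can be illustrated in plain Python. This is a conceptual sketch only and is not the Trifacta or Dataiku API; the `Recipe` class, its methods, and the sample rows are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Row = Dict[str, str]
Step = Callable[[Row], Row]


class Recipe:
    """Hypothetical recipe: an ordered, named list of row-level steps."""

    def __init__(self) -> None:
        self.steps: List[Tuple[str, Step]] = []

    def add_step(self, name: str, fn: Step) -> "Recipe":
        self.steps.append((name, fn))
        return self  # returning self allows chaining

    def run(self, rows: List[Row]) -> List[Row]:
        """Apply every step, in order, to every row."""
        out = []
        for row in rows:
            for _name, fn in self.steps:
                row = fn(row)
            out.append(row)
        return out

    def lineage(self) -> List[str]:
        """Step names in execution order, a simple stand-in for lineage."""
        return [name for name, _fn in self.steps]


recipe = (
    Recipe()
    .add_step("trim_name", lambda r: {**r, "name": r["name"].strip()})
    .add_step("upper_country", lambda r: {**r, "country": r["country"].upper()})
)

sample = [{"name": "  Ada ", "country": "uk"}]
new_batch = [{"name": "Grace  ", "country": "us"}]

print(recipe.run(sample))     # steps authored against a sample...
print(recipe.run(new_batch))  # ...replayed unchanged on a new batch
print(recipe.lineage())
```

The point of the design is that the steps live in one reusable object, so the same logic runs on every dataset and the list of step names doubles as a record of what was done.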

Governance controls and end-to-end monitoring

Look for workspace permissions, lineage visibility, and run monitoring so data prep can be audited and failures can be diagnosed. Alteryx supports governed automation through Alteryx Server with controlled permissions, and Azure Data Factory adds lineage and monitoring plus retries for pipeline failures.

Data quality checks before modeling or reporting

Select tools that run profiling and data quality checks as part of preparation rather than leaving validation to downstream consumers. Dataiku provides automated profiling and quality checks to catch issues before training or reporting, and dbt Core adds comprehensive data quality tests like unique and not-null with custom tests.
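To make the unique and not-null checks concrete, the sketch below expresses each check as a query that returns the offending rows, so an empty result means the check passes. It uses Python's built-in `sqlite3` module as a stand-in for a warehouse; the table, column names, and helper functions are hypothetical and are not dbt or Dataiku syntax.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, email TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "a@example.com"), (2, None), (2, "c@example.com")],
)


def failing_not_null(conn, table, column):
    """Count rows where the column is NULL (0 means the check passes)."""
    # Identifiers are interpolated for illustration only; never do this
    # with untrusted input.
    sql = f"SELECT COUNT(*) FROM {table} WHERE {column} IS NULL"
    return conn.execute(sql).fetchone()[0]


def failing_unique(conn, table, column):
    """Return values that appear more than once (empty means pass)."""
    sql = (
        f"SELECT {column} FROM {table} "
        f"WHERE {column} IS NOT NULL "
        f"GROUP BY {column} HAVING COUNT(*) > 1"
    )
    return [row[0] for row in conn.execute(sql)]


print(failing_not_null(conn, "customers", "email"))
print(failing_unique(conn, "customers", "id"))
```

Running checks like these as part of preparation, rather than after loading, is what lets a pipeline stop before bad rows reach training or reporting.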

Structured transformations for joins, deduplication, and entity resolution

If your preparation includes matching records across messy sources, prioritize built-in fuzzy matching and linkage. Alteryx includes fuzzy matching and record linkage for deduplication and entity resolution inside visual workflows, which reduces the need for custom scripting.
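For a sense of what fuzzy matching involves when it is not built in, here is a minimal sketch using Python's standard-library `difflib`. It is not Alteryx's algorithm; the normalization rules, the 0.85 threshold, and the sample names are assumptions chosen for illustration.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(name: str) -> str:
    """Lowercase, drop simple punctuation, and collapse whitespace."""
    cleaned = name.lower().replace(".", "").replace(",", "")
    return " ".join(cleaned.split())


def similarity(a: str, b: str) -> float:
    """Similarity ratio in [0, 1] between two normalized names."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def likely_duplicates(names, threshold=0.85):
    """Return (a, b, score) for every pair at or above the threshold."""
    pairs = []
    for a, b in combinations(names, 2):
        score = similarity(a, b)
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    return pairs


names = ["Acme Corp.", "ACME Corp", "Globex Inc", "Acme Corporation"]
for pair in likely_duplicates(names):
    print(pair)
```

Comparing every pair is quadratic in the number of records, and the threshold needs tuning per dataset, which is part of the custom effort that built-in matching tools aim to remove.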

Scalable pipeline execution for batch and streaming

Pick tools that can execute preparation logic reliably at scale and support the workload type you run. Azure Data Factory uses mapping data flows for graphical, schema-aware transformations, AWS Glue runs serverless Spark ETL for S3 lake preparation with Glue Data Catalog metadata management, and Google Cloud Dataflow supports Beam transforms with windowing and triggers for streaming-ready preparation.

How to Choose the Right Data Preparation Software

Match your workload and team skills to the execution model that each tool uses, from interactive recipes to orchestrated pipelines and code-first transformations.

Pick the workflow style that matches how your team builds transformations

If analysts need to transform tabular data with immediate feedback, choose Trifacta because its interactive wrangling UI applies transformations against sample data and generates reusable recipes from your edits. If you want a visual drag-and-drop workflow for joins and cleansing across files and databases, choose Alteryx because it combines cleansing, transformation, joins, and spatial analytics in one visual environment.

Ensure repeatability with recipes or models you can run again and again

If you want visual preparation that can connect to modeling and keep lineage intact, choose Dataiku because Prepare recipes include visual transformation steps and lineage tracking across steps. If your transformations should be versioned and tested as SQL artifacts, choose dbt Core because it compiles SQL to run on warehouses while managing dependencies and incremental logic.
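Dependency management here means running each model only after the models it reads from. The sketch below shows that ordering with Python's standard-library `graphlib` (Python 3.9+); it is not dbt code, and the model names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical models: each key depends on the models in its set.
dependencies = {
    "stg_orders": set(),
    "stg_customers": set(),
    "orders_enriched": {"stg_orders", "stg_customers"},
    "daily_revenue": {"orders_enriched"},
}

# static_order() yields each model only after all of its dependencies.
run_order = list(TopologicalSorter(dependencies).static_order())
print(run_order)
```

The two staging models have no dependencies, so their relative order is not guaranteed; only the constraint that upstream models come first is.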

Decide how you will orchestrate and schedule preparation jobs

If you need scheduled, governed ETL and ELT across cloud and on-prem sources, choose Azure Data Factory because parameterized workflows plus mapping data flows enable reusable data prep jobs. If you are AWS-first and you want serverless orchestration for S3 lake workflows, choose AWS Glue because Glue workflows automate multi-step preparation and Glue Data Catalog centralizes schemas for tables and partitions.

Match scaling and streaming requirements to the right execution engine

If you run continuous feature preparation on streaming inputs, choose Apache Spark because structured streaming uses DataFrame operations for continuous preparation and transformation. If you need an event-stream processing model with exactly-once options, choose Google Cloud Dataflow because Apache Beam supports windowing, triggers, and exactly-once processing for reliable preparation steps.
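Windowing is the core idea behind streaming preparation: events are grouped into time buckets so that aggregates can be emitted per bucket. The sketch below shows fixed, non-overlapping windows in plain Python; it is not Beam or Spark code, and it leaves out triggers, watermarks, and late data, which those engines handle. The function name and sample events are hypothetical.

```python
from collections import defaultdict


def fixed_windows(events, size_seconds):
    """Sum (timestamp, value) events per fixed, non-overlapping window.

    Each window is keyed by its start time in seconds.
    """
    windows = defaultdict(list)
    for ts, value in events:
        window_start = (ts // size_seconds) * size_seconds
        windows[window_start].append(value)
    return {start: sum(vals) for start, vals in sorted(windows.items())}


# (timestamp in seconds, value) pairs
events = [(0, 1), (30, 2), (61, 5), (119, 1), (120, 4)]
print(fixed_windows(events, 60))
```

In this batch sketch all events are known up front; a streaming engine has to decide when a window is complete enough to emit, which is the role triggers play.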

Validate that the tool covers your data sources, destinations, and integration model

If you need connector-driven ingestion and publishing with environment-friendly deployments, choose Keboola because it uses reusable transformation blocks in a connector-based workspace and orchestrates jobs through a web layer. If you want flexible notebook-driven customization that mixes Python code and pipeline context, choose Mage AI because it supports reusable pipeline components, notebook-to-pipeline transformations, and built-in connectors.

Who Needs Data Preparation Software?

Data Preparation Software fits teams that spend time fixing messy inputs, standardizing schemas, and making transformations run reliably for analytics and machine learning.

Teams standardizing messy tabular data with interactive, repeatable transformation workflows

Trifacta fits this team because its smart parsing and transformation suggestions generate reusable recipes from interactive edits. Alteryx also fits this segment because its visual workflow designer accelerates joins, cleaning, and transformation work without requiring deep code-first development.

Data science teams needing governed visual prep and feature engineering pipelines

Dataiku fits because Prepare recipes support visual transformation steps with dataset lineage and data quality checks. Azure Data Factory fits when those teams also require scheduled, governed pipelines across cloud and on-prem systems using mapping data flows and monitoring.

Analytics and data teams standardizing complex preparation without extensive coding

Alteryx fits because fuzzy matching and record linkage for deduplication and entity resolution are built into visual workflows. dbt Core fits analytics teams that prefer SQL-first tested models because it includes data quality tests and deterministic incremental models.

Engineering teams building scalable ETL and streaming-ready preparation pipelines

Azure Data Factory fits teams building scheduled, governed ETL and ELT with graphical schema-aware mapping data flows. Google Cloud Dataflow and Apache Spark fit teams that need streaming and continuous transformations using Beam windowing and triggers or Spark structured streaming DataFrame operations.

Common Mistakes to Avoid

Common selection failures come from choosing the wrong execution model, underestimating setup governance effort, or building transformations without repeatability and checks.

Choosing a tool with weak repeatability for repeated cleaning tasks

Avoid treating one-off edits as if they will scale, because Trifacta and Dataiku both emphasize recipe-based workflows that turn transformations into reusable steps. If you need deterministic repeat runs in SQL, dbt Core provides incremental models that rebuild only changed partitions or keys.
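The incremental idea, processing only rows that changed since the last run, can be sketched with Python's built-in `sqlite3` module. This is a stand-in for warehouse SQL and not dbt's own syntax; the table names, the `updated_at` column, and the `incremental_load` helper are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE source_events (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT);
    CREATE TABLE target_events (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT);
    INSERT INTO source_events VALUES
        (1, 10.0, '2026-01-01'),
        (2, 20.0, '2026-01-02');
    """
)


def incremental_load(conn):
    """Upsert only source rows newer than the latest row in the target."""
    new_rows = conn.execute(
        """
        SELECT id, amount, updated_at
        FROM source_events
        WHERE updated_at > (
            SELECT COALESCE(MAX(updated_at), '') FROM target_events
        )
        """
    ).fetchall()
    # INSERT OR REPLACE overwrites an existing row with the same key.
    conn.executemany(
        "INSERT OR REPLACE INTO target_events VALUES (?, ?, ?)", new_rows
    )
    return len(new_rows)


print(incremental_load(conn))  # first run: target is empty, so all rows load

# Simulate upstream changes: one updated row and one new row.
conn.execute(
    "UPDATE source_events SET amount = 25.0, updated_at = '2026-01-03' WHERE id = 2"
)
conn.execute("INSERT INTO source_events VALUES (3, 30.0, '2026-01-03')")
print(incremental_load(conn))  # second run: only the changed and new rows load
```

Because the filter depends only on data already in the target, rerunning the load with no upstream changes processes nothing, which is the repeatability property the paragraph above describes.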

Building complex transformations without the right matching and deduplication capabilities

If your preparation includes entity resolution, Alteryx fits because fuzzy matching and record linkage are built into the workflow for deduplication and matching. Avoid patching this with ad hoc logic in a workflow that does not include these features, since Spark and Beam require more custom implementation effort for matching semantics.

Skipping governance and lineage visibility for multi-step pipelines

If multiple teams rely on prepared datasets, prioritize lineage and monitoring so failures are diagnosable and changes are auditable. Azure Data Factory provides lineage, monitoring, and retries, and Trifacta and Dataiku provide lineage-style visibility into transformation steps.

Selecting a code-first engine when your team needs visual transformation authoring

If non-developers must build transformations quickly, avoid making Apache Spark or Google Cloud Dataflow the only authoring surface. Use Trifacta, Alteryx, or Dataiku to keep preparation visual while you reserve Spark, Beam, or dbt Core for the parts that require code-first control.

How We Selected and Ranked These Tools

We evaluated each tool on overall capability, feature coverage, ease of use, and value for practical data preparation work across messy inputs, repeatable transformations, and pipeline execution. We separated Trifacta from lower-ranked tools by emphasizing smart parsing and transformation suggestions that generate reusable recipes from interactive edits, which directly reduces time spent on parsing and standardization. We also weighed how strongly each platform supports governed execution and lineage visibility, such as Azure Data Factory mapping data flows with lineage and monitoring, or Dataiku Prepare recipes with dataset lineage and data quality checks. We accounted for how the primary workflow model affects adoption, like Trifacta’s interactive wrangling UI compared with Mage AI’s notebook-first workflow that can require more engineering for orchestration and debugging.

Frequently Asked Questions About Data Preparation Software

Which data preparation tool is best for interactive, reusable transformations on messy tables?

Trifacta is designed for interactive wrangling where you edit data with visualization and then reuse the resulting transformation logic as recipes. Its smart parsing and suggestions help standardize column types and values while keeping the transformation steps repeatable.

How do Alteryx and Azure Data Factory differ for building repeatable workflows and scheduling?

Alteryx uses a drag-and-drop workflow builder and relies on Alteryx Server to schedule and run the same cleansing and joining logic at scale. Azure Data Factory builds activity-based pipelines with mapping data flows, parameterized triggers, and end-to-end monitoring for governed ETL and ELT.

What should teams choose when they need governed visual preparation plus feature engineering for machine learning?

Dataiku supports governed visual preparation with Prepare recipes that include cleaning, feature engineering, and dataset lineage across steps. It also runs quality checks and profiling so issues show up before downstream training or reporting.

Which tool is strongest for serverless ETL from semi-structured data into a curated S3 lake?

AWS Glue focuses on serverless ETL jobs for schema discovery and transformation of semi-structured inputs. Its Glue Data Catalog centralizes tables and partitions so curated datasets stay discoverable across the lake.

If my preparation must handle streaming with exactly-once behavior, which platform fits best?

Google Cloud Dataflow runs Apache Beam pipelines with windowing and triggers suited for streaming and batch preparation. It also supports exactly-once processing options so event-based preparation logic can scale reliably.

When should teams switch from ETL tools to code-first distributed transformations with Spark?

Apache Spark is a strong fit when you need code-driven data preparation across large datasets using SQL, DataFrame, or Dataset APIs. Structured streaming in Spark lets you keep preparation running continuously instead of rebuilding one-off jobs.

How does dbt Core support testing and change-safe incremental transformations for ELT?

dbt Core transforms analytics SQL into versioned models that are dependency-aware and testable. It supports incremental models that rebuild only changed partitions or keys with deterministic SQL logic.

What’s the right choice for connector-driven prep and orchestration without writing custom ETL code?

Keboola emphasizes connector-based pipeline building that loads and transforms data through reusable components. It also provides web-based job orchestration so teams can standardize how data lands and how transformations run across projects.

How can teams combine notebook-based transformations with visual pipeline reuse?

Mage AI supports notebook-driven workflows where you mix Python code with visual pipelines. It lets you reuse blocks across projects and parameterize pipeline runs for repeatable batch and real-time style preparation.
