GITNUX SOFTWARE ADVICE

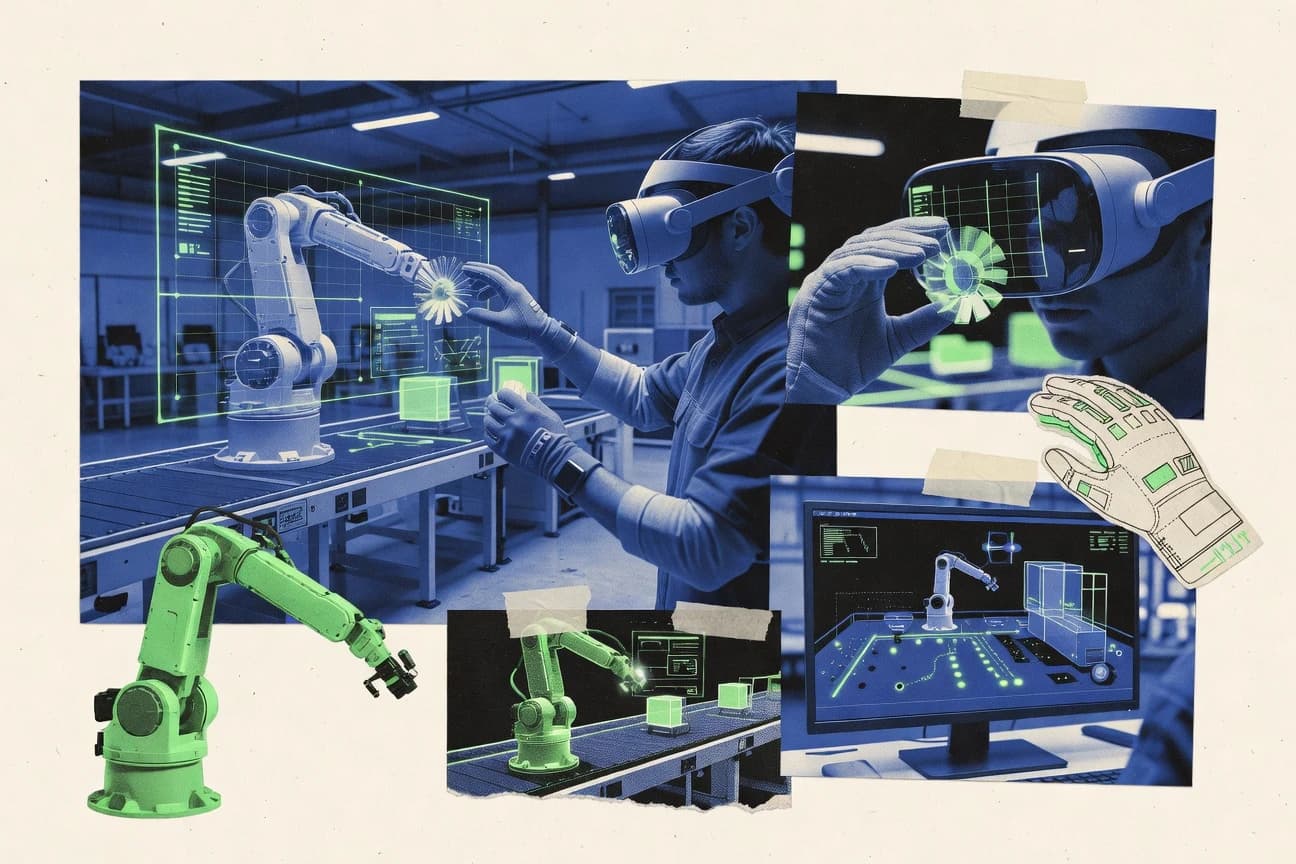

Top 10 Best Virtual Reality Simulation Software of 2026

Explore the top 10 best virtual reality simulation software for learning, training & creativity.

How we ranked these tools

Core product claims cross-referenced against official documentation, changelogs, and independent technical reviews.

Analyzed video reviews and hundreds of written evaluations to capture real-world user experiences with each tool.

AI persona simulations modeled how different user types would experience each tool across common use cases and workflows.

Final rankings reviewed and approved by our editorial team with authority to override AI-generated scores based on domain expertise.

Score: Features 40% · Ease 30% · Value 30%

Gitnux may earn a commission through links on this page; this does not influence rankings.

Editor’s top 3 picks

Three quick recommendations before you dive into the full comparison below — each one leads on a different dimension.

Unity 3D

XR Interaction Toolkit for controller-based interaction patterns in VR scenes

Built for teams building interactive VR training simulations with reusable modules and custom interactions.

Unreal Engine

Blueprints visual scripting for VR interaction logic and training scenario behavior

Built for teams building high-fidelity VR training simulations with complex interactions.

HoloLens and Mixed Reality Toolkit

Mixed Reality Toolkit HandInteraction and gaze-based UX components

Built for teams building environment-aware mixed-reality training simulations for spatial hardware.

Comparison Table

This comparison table maps leading virtual reality simulation tools across core criteria like supported headsets, development workflow, and suitability for training or creative prototyping. It covers engines and VR frameworks such as Unity 3D, Unreal Engine, Mixed Reality Toolkit, HoloLens, VIVE XR Elite SDK, and SteamVR, plus additional options for building immersive scenarios.

| # | Tool | Description | Category | Overall | Features | Ease of Use | Value |
|---|---|---|---|---|---|---|---|
| 1 | Unity 3D | Unity builds interactive VR training and simulation applications using real-time rendering, physics, and device input integration. | game-engine | 8.7/10 | 9.1/10 | 8.2/10 | 8.6/10 |
| 2 | Unreal Engine | Unreal Engine creates high-fidelity VR simulation experiences for training and industrial digital twins using advanced rendering and simulation workflows. | game-engine | 8.2/10 | 8.8/10 | 7.3/10 | 8.2/10 |
| 3 | HoloLens and Mixed Reality Toolkit | Microsoft Mixed Reality tools help teams develop VR and mixed-reality training apps with UI components, interaction patterns, and device support. | mixed-reality-dev | 8.0/10 | 8.6/10 | 7.7/10 | 7.6/10 |
| 4 | VIVE XR Elite SDK | HTC VIVE development kits provide VR interaction frameworks and content integration for training and simulation apps on VIVE headsets. | device-sdk | 8.0/10 | 8.3/10 | 7.6/10 | 8.1/10 |
| 5 | SteamVR | SteamVR delivers a runtime and device layer that enables VR simulation and training software to run across supported PCVR hardware. | vr-runtime | 8.1/10 | 8.7/10 | 7.6/10 | 7.9/10 |
| 6 | A-Frame | A-Frame uses a declarative WebVR-style component model to build lightweight VR simulation scenes for browser-based training prototypes. | web-vr | 8.2/10 | 8.4/10 | 8.7/10 | 7.5/10 |
| 7 | Blender | Blender supports VR content creation and animation workflows using modeling, rigging, and rendering features for simulation assets. | 3d-content-creation | 7.5/10 | 7.6/10 | 6.8/10 | 8.0/10 |
| 8 | Blippar Studio | Blippar Studio enables interactive AR and spatial experiences that can be used to produce training simulations with guided visual interactions. | spatial-content | 7.1/10 | 7.2/10 | 7.6/10 | 6.6/10 |
| 9 | Strivr | STRIVR provides enterprise VR training content and a platform for distributing, managing, and measuring immersive learning. | enterprise-learning | 8.1/10 | 8.5/10 | 8.2/10 | 7.6/10 |
| 10 | Virtonomics VR | Virtonomics supports business simulation and decision-training experiences that can be adapted for immersive VR learning scenarios. | simulation-business | 7.1/10 | 6.8/10 | 7.4/10 | 7.2/10 |

Unity 3D

game-engine

Unity builds interactive VR training and simulation applications using real-time rendering, physics, and device input integration.

XR Interaction Toolkit for controller-based interaction patterns in VR scenes

Unity 3D stands out for its single authoring workflow that targets many VR runtimes and device classes from one project. It supports VR simulation through a real-time 3D engine, physics, animation tooling, and scene streaming for interactive training scenarios. Core XR integration includes input, cameras, and XR device management so teams can build locomotion, interaction, and UI tailored to head-mounted displays. It also supports prefab-based modular content so large training environments can be maintained as reusable systems.

Pros

- Strong real-time renderer with lighting workflows for convincing VR training scenes

- XR tooling covers headset, controller input, and XR camera setup

- Physics, animation, and scripting enable interactive simulations with complex behaviors

- Prefab and component architecture supports reusable training modules at scale

- Robust asset and scene pipeline speeds iteration on large environments

Cons

- VR interaction patterns often require custom scripting and careful optimization

- Performance tuning can be time-consuming for higher-fidelity VR scenes

- Team workflows can get complex when mixing advanced graphics and XR features

- Debugging across headset hardware and target runtimes can slow validation

Best For

Teams building interactive VR training simulations with reusable modules and custom interactions

Unreal Engine

game-engine

Unreal Engine creates high-fidelity VR simulation experiences for training and industrial digital twins using advanced rendering and simulation workflows.

Blueprints visual scripting for VR interaction logic and training scenario behavior

Unreal Engine stands out for delivering high-fidelity real-time rendering with production-grade tools for interactive worlds. It supports VR development workflows using motion-controller input, VR preview, and platform-ready performance profiling. The engine also enables physics, animation, and Blueprint visual scripting to prototype training simulations quickly and iterate on behavior. Large asset pipelines and rendering tools help teams build detailed environments for safety, industrial, and procedural practice scenarios.

Pros

- High-end rendering tools support realistic VR environments and lighting

- Blueprint visual scripting accelerates VR simulation logic without heavy coding

- Strong physics and animation systems improve interactive training fidelity

- Built-in VR preview and profiling help find performance issues early

Cons

- VR performance tuning requires significant engine and profiling expertise

- Project setup and asset pipeline management can be complex for small teams

- Iteration speed depends on build times and content optimization discipline

Best For

Teams building high-fidelity VR training simulations with complex interactions

HoloLens and Mixed Reality Toolkit

mixed-reality-dev

Microsoft Mixed Reality tools help teams develop VR and mixed-reality training apps with UI components, interaction patterns, and device support.

Mixed Reality Toolkit HandInteraction and gaze-based UX components

HoloLens delivers mixed-reality simulations directly inside wearable spatial hardware, blending digital content with real environments. Mixed Reality Toolkit provides reusable UI, input, spatial mapping helpers, and scene components for building these simulations in Unity or with Microsoft tooling. The combination supports hand and gaze interactions, spatial anchors, and hologram rendering techniques that map well to training and walkthrough scenarios. Developers can iterate on spatial interaction logic while previewing and deploying experiences to the device for realistic presence tests.

Pros

- Mixed Reality Toolkit accelerates spatial UI, input, and interaction patterns

- HoloLens hardware enables real-world scale cues and embodied training context

- Spatial mapping and anchors support persistent, environment-aware simulations

- Unity integration fits common simulation and visualization pipelines

Cons

- Hand and spatial input design requires careful tuning to avoid confusion

- Scene performance can degrade with complex geometry and heavy effects

- Hardware constraints limit target fidelity for simulation-heavy use cases

- Deploy and iteration cycles add friction versus desktop-only simulation

Best For

Teams building environment-aware mixed-reality training simulations for spatial hardware

VIVE XR Elite SDK

device-sdk

HTC VIVE development kits provide VR interaction frameworks and content integration for training and simulation apps on VIVE headsets.

Hand tracking input pipeline for interactive VR simulations on VIVE XR Elite

VIVE XR Elite SDK focuses on building and deploying VR simulation experiences for VIVE XR Elite devices with a practical toolchain for spatial interaction. It provides platform-focused APIs for hand tracking, passthrough use cases, and controller input so simulation logic can react to real user movement. The SDK also supports common XR rendering and scene lifecycle patterns that help teams turn prototypes into repeatable training scenarios. Integration depth with the VIVE XR Elite ecosystem makes it a strong fit for device-targeted simulations rather than cross-headset experimentation.

Pros

- Device-focused XR APIs streamline VR simulation integration on VIVE XR Elite headsets

- Hand and controller input support fits interactive training and procedural walkthroughs

- Spatial and rendering lifecycle tools reduce glue code in simulation projects

Cons

- Tight ecosystem targeting limits portability to non-VIVE headsets

- Advanced simulation features require more engineering than turnkey training platforms

- Debugging XR performance can take significant iteration during scene optimization

Best For

Teams building interactive VR training simulations for VIVE XR Elite headsets

SteamVR

vr-runtime

SteamVR delivers a runtime and device layer that enables VR simulation and training software to run across supported PCVR hardware.

SteamVR Tracking and Room Setup with Steam Input controller mapping

SteamVR stands out for turning supported VR headsets into a common runtime with broad device and controller coverage. It provides core capabilities like room-scale tracking integration, Steam Input mapping, and access to VR titles through the Steam library. It also supports VR performance tuning via frame timing tools and a large ecosystem of compatible hardware and software.

Pros

- Strong headset and controller compatibility across many VR devices

- Steam Input enables flexible controller and input remapping

- Built-in VR performance overlays help diagnose frame timing issues

- Large library access streamlines finding simulation-ready VR content

Cons

- Setup and troubleshooting can be complex across mixed hardware

- SteamVR ecosystem dependence can limit non-Steam workflow integration

- Performance tuning requires tweaking settings for stable simulation runs

Best For

VR simulation users needing broad headset support and Steam Input mapping

A-Frame

web-vr

A-Frame uses a declarative WebVR-style component model to build lightweight VR simulation scenes for browser-based training prototypes.

Entity-Component-System authoring model using HTML tags for VR scene composition

A-Frame stands out for building VR scenes with web technologies like HTML and JavaScript. The framework provides a declarative entity-component system for entities such as cameras, lighting, and interactive objects. It supports 3D asset loading, event handling, and optional AR features through WebXR-compatible workflows. This combination makes it well suited for browser-based VR simulations and prototypes.

Pros

- Declarative HTML entity-component model speeds up VR scene creation

- Strong WebXR and browser deployment support for cross-device testing

- Built-in event system enables interactions without heavy engine tooling

Cons

- Performance tuning is manual for complex scenes and large asset sets

- Physics and advanced simulation tooling require external libraries

- VR-specific asset pipelines can become cumbersome for large productions

Best For

Teams prototyping web-based VR simulations with interactive 3D scenes

Blender

3d-content-creation

Blender supports VR content creation and animation workflows using modeling, rigging, and rendering features for simulation assets.

VR Viewport mode for stereoscopic scene inspection with tracked controller input

Blender stands out for combining open asset creation with simulation-oriented tooling in one workstation workflow. Core capabilities include physically based rendering, animation, rigging, and mesh modeling plus optional physics features for realistic motion and interactions. VR support enables stereoscopic viewing and motion controller input for reviewing scenes and iterating on spatial layouts. For virtual reality simulation, it works best when VR is used as a preview and interaction layer rather than a full real-time physics engine replacement.

Pros

- Strong modeling, rigging, animation, and rendering for VR scene authoring

- VR viewport supports stereoscopic viewing and tracked controller interaction

- Physics and simulation add-ons support iterative motion and effects testing

Cons

- VR simulation workflows require more setup than dedicated VR simulators

- Complex scenes can demand optimization for stable VR frame rates

- Learning curve is steep for accurate VR scene and interaction configuration

Best For

Studios prototyping VR environments with custom assets and animation

Blippar Studio

spatial-content

Blippar Studio enables interactive AR and spatial experiences that can be used to produce training simulations with guided visual interactions.

Blippar Studio’s recognition-driven interaction builder for camera-based triggers and 3D overlays

Blippar Studio stands out for combining image-based capture with interactive, simulation-style content authoring rather than building VR from scratch. Core capabilities center on creating AR experiences that can include 3D assets, interactive triggers, and device-targeted behavior for simulated training or product walkthroughs. The tool supports publishing and deployment workflows that focus on running the experience on consumer cameras and sensors, which can approximate VR training scenarios when used with compatible headsets. VR-specific production features like room-scale setup, motion controller input mapping, and native comfort tooling are not the primary emphasis compared with dedicated VR authoring suites.

Pros

- Fast authoring for interactive visual simulations built around camera recognition

- Strong support for 3D asset integration with triggers and staged interactions

- Publishing workflow aligns with running experiences on real devices

Cons

- VR simulation controls are limited compared with VR-first authoring platforms

- Less coverage for motion controller mapping and room-scale configuration

- Comfort and telemetry tooling for VR training is not a core focus

Best For

Teams creating mixed camera-driven simulations that need light VR compatibility

Strivr

enterprise-learning

STRIVR provides enterprise VR training content and a platform for distributing, managing, and measuring immersive learning.

Learning Path builder that sequences VR simulations into guided training journeys

Strivr stands out for browser- and mobile-friendly access to VR training content backed by guided simulation journeys. It supports learning paths, scenario-based modules, and analytics that track learner progress across VR experiences. The platform is built for enterprise training deployment where standardized practice matters more than custom code-heavy development. Content delivery emphasizes headset-agnostic playback, with creation tools that focus on rapid authoring of training scenes.

Pros

- VR training journeys with structured modules for repeatable practice

- Progress analytics track completion and performance within learning paths

- Simplified content publishing workflow reduces friction for rollout

Cons

- Advanced custom simulation logic depends on what the platform supports, unlike a do-it-yourself engine build

- Authoring flexibility can be limited for highly bespoke VR interactions

- Reporting is strong for training completion but thinner for deep diagnostics

Best For

Enterprise teams deploying standardized VR training modules with analytics

Virtonomics VR

simulation-business

Virtonomics supports business simulation and decision-training experiences that can be adapted for immersive VR learning scenarios.

Immersive visualization of business simulation states tied to management actions

Virtonomics VR distinguishes itself by bringing an existing business simulation workflow into a virtual reality experience focused on operational decision-making. The tool centers on managing simulated enterprises and observing business outcomes through immersive, interactive views. It supports scenario-driven exploration of production, trade, and management levers rather than VR-first construction tools. The result fits teams that want spatial visualization of complex business systems more than custom VR world building.

Pros

- Business simulation controls mapped to immersive visual feedback

- Clear focus on operational decision loops like production and trading

- VR interface makes system states easier to scan quickly

- Works well for training-style walkthroughs of management logic

Cons

- Limited VR customization for building new simulation spaces

- VR experience depth depends on the underlying simulation content

- Fewer advanced VR interaction patterns than dedicated VR platforms

- Not a general-purpose VR simulation authoring environment

Best For

Teams using business process simulations that benefit from immersive visualization

Conclusion

After evaluating 10 virtual reality simulation tools, Unity 3D stands out as our overall top pick. It scored highest across our combined criteria of features, ease of use, and value, which is why it sits at #1 in the rankings above.

Use the comparison table and detailed reviews above to validate the fit against your own requirements before committing to a tool.

How to Choose the Right Virtual Reality Simulation Software

This buyer’s guide explains how to choose Virtual Reality Simulation Software using concrete capabilities from Unity 3D, Unreal Engine, Mixed Reality Toolkit for HoloLens, VIVE XR Elite SDK, and SteamVR. It also covers web-based and content-centric options like A-Frame and Blender, plus training and simulation platforms like Strivr and Virtonomics VR, and camera-driven simulation authoring with Blippar Studio. The guide maps tools to specific training, visualization, interaction, and deployment needs.

What Is Virtual Reality Simulation Software?

Virtual Reality Simulation Software builds interactive VR experiences that teach skills, rehearse procedures, or visualize systems in an immersive headset environment. It solves problems like repeatable practice, safe scenario exposure, and interactive learning feedback using device input, real-time rendering, and scenario logic. Teams use it to create controller or hand-tracking interactions in environments, as shown by Unity 3D’s XR Interaction Toolkit support and Unreal Engine’s Blueprint visual scripting for VR behavior. Some solutions also target MR hardware directly, like HoloLens with Mixed Reality Toolkit hand and gaze interaction components.

Key Features to Look For

The right feature set determines whether a tool can deliver the specific interaction fidelity, deployment path, and authoring workflow needed for VR training or simulation.

XR interaction patterns built for headsets and controllers

Unity 3D supports VR interaction patterns through its XR Interaction Toolkit, which helps teams implement controller-based interaction behaviors without building every input flow from scratch. Unreal Engine pairs VR motion-controller input with Blueprint visual scripting so training logic can connect directly to interaction events.

Visual scripting for VR training logic

Unreal Engine’s Blueprint visual scripting enables teams to prototype VR simulation logic for training scenarios quickly and iterate behavior without heavy coding. This approach complements production-grade VR development workflows that include VR preview and performance profiling.

Hand tracking and passthrough-aware input for device-specific XR

VIVE XR Elite SDK provides a hand tracking input pipeline and platform-focused APIs that fit interactive training and procedural walkthroughs on VIVE XR Elite headsets. Teams targeting that device ecosystem get integration depth that supports interaction logic reacting to real movement.

Mixed-reality UI and gaze or hand interaction components

Mixed Reality Toolkit for HoloLens supplies reusable UI, hand and gaze interaction patterns, spatial anchors, and spatial mapping helpers that match environment-aware training needs. HoloLens hardware adds embodied scale cues that make walkthrough and presence tests more realistic than desktop-only previews.

Runtime and device compatibility with controller mapping

SteamVR turns supported PCVR headsets into a common runtime and includes Steam Input controller remapping for flexible input mapping across devices. SteamVR also includes tracking and room setup tools plus performance overlays for diagnosing frame timing issues during VR simulation runs.

Authoring workflow for scalable assets and reusable simulation modules

Unity 3D’s prefab and component architecture supports reusable training modules and large environment maintenance, which helps teams scale content iteration. Unreal Engine supports large asset pipelines and detailed rendering workflows for high-fidelity environments, which is useful when training scenes require complex environments and lighting workflows.

Web-based VR scene composition with declarative components

A-Frame uses a declarative HTML entity-component model to compose VR scenes and build interactive objects with event handling. This workflow supports browser and WebXR-style deployment for cross-device testing of lightweight VR training prototypes.
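To make the entity-component idea concrete, here is a minimal sketch in Python that renders A-Frame-style markup from plain data. It assumes the usual A-Frame pattern in which an `<a-entity>` tag is an entity and each HTML attribute (such as `geometry`, `material`, or `position`) is a component. The helper names `component_attr`, `render_entity`, and `render_scene` and the `valve` object are hypothetical, invented for this illustration.

```python
from html import escape


def component_attr(props: dict) -> str:
    """Serialize component properties in the 'key: value; key: value' attribute style."""
    return "; ".join(f"{key}: {value}" for key, value in props.items())


def render_entity(components: dict) -> str:
    """Render one entity: each component becomes one HTML attribute."""
    attrs = []
    for name, value in components.items():
        text = component_attr(value) if isinstance(value, dict) else str(value)
        attrs.append(f'{name}="{escape(text, quote=True)}"')
    return f"<a-entity {' '.join(attrs)}></a-entity>"


def render_scene(entities: list) -> str:
    """Wrap rendered entities in a scene element."""
    body = "\n".join("  " + render_entity(entity) for entity in entities)
    return f"<a-scene>\n{body}\n</a-scene>"


# Hypothetical training prop: a red box the learner is meant to locate.
valve = {
    "geometry": {"primitive": "box", "width": 0.5, "height": 0.5, "depth": 0.5},
    "material": {"color": "#C0392B"},
    "position": "0 1.2 -2",
}

print(render_scene([valve]))
```

The printed fragment is only the scene markup. A real page would also need to load the A-Frame library and add interaction components, which this sketch leaves out.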

Stereoscopic VR asset inspection and tracked-controller review

Blender provides a VR Viewport mode for stereoscopic viewing and tracked controller input, which helps studios inspect custom models and animation-driven environments. Blender works best as a content authoring and review layer rather than a full VR simulation engine replacement.

Enterprise learning journeys with structured modules and progress analytics

Strivr sequences VR practice into guided learning paths and records completion and learner progress across VR experiences. This structure supports standardized training delivery when repeatable scenario modules matter more than bespoke simulation logic.

Immersive visualization of business simulation decisions

Virtonomics VR maps operational decision controls like production and trading into immersive, interactive visual feedback. This focus suits teams that want spatial scanning of business state changes rather than general-purpose VR world building.

Camera recognition-based interactive triggers for mixed camera-driven simulations

Blippar Studio uses recognition-driven interaction building with camera-based triggers and 3D overlays to author interactive visual simulations. It supports a publishing workflow that targets running experiences on real devices, which helps teams approximate VR training scenarios without the heavier requirements of VR-first authoring.

How to Choose the Right Virtual Reality Simulation Software

Selection should start with target hardware and interaction needs, then match authoring workflow, simulation depth, and deployment type to the training scenario requirements.

Match the tool to the target device and interaction modality

For VIVE XR Elite headsets, choose VIVE XR Elite SDK because it provides platform-focused APIs and a hand tracking input pipeline designed for device integration. For controller-driven VR training across many PCVR headsets, choose SteamVR because it provides common runtime coverage plus Steam Input controller mapping and tracking and room setup tools.

Pick the authoring workflow based on how simulation logic will be built

If training logic needs rapid prototyping with visual flow, Unreal Engine fits because it supports Blueprint visual scripting for VR interaction logic and training behavior. If the project needs a single authoring workflow across many VR runtimes using modular reusable content, Unity 3D fits because it targets multiple XR runtimes from one project with XR device management and prefab-based modules.

Use MR-specific components only when HoloLens environment awareness is required

Choose Mixed Reality Toolkit with HoloLens when training requires mixed-reality presence, spatial anchors, and gaze or hand UX components for environment-aware walkthroughs. This setup also expects careful hand and spatial input tuning to prevent confusion during interaction-heavy scenarios.

Decide whether the goal is a training platform or a custom simulation build

Choose Strivr when the primary need is enterprise delivery with structured learning paths and progress analytics across VR experiences. Choose Unity 3D, Unreal Engine, or A-Frame when the need is bespoke simulation construction with custom interaction patterns and environment logic: A-Frame focuses on declarative WebXR-style scene composition, while Unity 3D and Unreal Engine focus on real-time rendering and complex behaviors.

Plan for performance tuning and scene complexity early

If the project needs high-fidelity rendering and complex environments, pick Unreal Engine or Unity 3D, but plan for VR performance tuning and profiling to maintain stable frame rates. If the project is a lightweight browser prototype, A-Frame can speed iteration with its entity-component model, but physics and advanced simulation tooling will require external libraries for deeper simulation.
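The selection steps above can be sketched as a small rule-based helper. This is an illustrative simplification, not a Gitnux tool: the `Requirements` fields, the device labels, and the `shortlist` function are all hypothetical names, and the rules only encode the recommendations stated in this guide.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    # Hypothetical labels: "vive-xr-elite", "hololens", "pcvr", "browser", or "any".
    target_device: str = "any"
    needs_learning_platform: bool = False   # structured learning paths and analytics
    prefers_visual_scripting: bool = False  # Blueprint-style logic authoring


def shortlist(req: Requirements) -> list[str]:
    """Return candidate tools, following the guide's order of decisions."""
    picks: list[str] = []

    # Start with target hardware and interaction modality.
    if req.target_device == "vive-xr-elite":
        picks.append("VIVE XR Elite SDK")
    elif req.target_device == "pcvr":
        picks.append("SteamVR")
    elif req.target_device == "hololens":
        picks.append("HoloLens and Mixed Reality Toolkit")

    # Training delivery platform versus custom simulation build.
    if req.needs_learning_platform:
        picks.append("Strivr")
        return picks

    # Authoring workflow for a custom build.
    if req.target_device == "browser":
        picks.append("A-Frame")
    elif req.prefers_visual_scripting:
        picks.append("Unreal Engine")
    else:
        picks.append("Unity 3D")
    return picks


print(shortlist(Requirements(target_device="pcvr", prefers_visual_scripting=True)))
```

Under these assumptions, a PCVR project that prefers visual scripting gets SteamVR as the runtime layer and Unreal Engine as the authoring engine. Real selections would also weigh team skills, budget, and performance targets, which the sketch leaves out.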

Who Needs Virtual Reality Simulation Software?

Virtual Reality Simulation Software serves training teams, simulation builders, and visualization-focused organizations that need immersive interaction, scenario repetition, or spatial decision feedback.

Teams building interactive VR training simulations with reusable modules and custom interactions

Unity 3D fits because prefab and component architecture support reusable training modules at scale and XR tooling covers headset, controller input, and XR camera setup. Unreal Engine fits when high-fidelity visuals and Blueprint-based training logic matter for complex interactions.

Teams building high-fidelity VR training simulations with complex interaction logic

Unreal Engine fits because it combines advanced rendering tools with Blueprint visual scripting for VR scenario behavior and built-in VR preview and profiling to find performance issues early. Unity 3D also fits when physics, animation tooling, and scripting enable complex interactive behaviors with strong real-time rendering.

Teams creating environment-aware mixed-reality training experiences for wearable spatial hardware

Mixed Reality Toolkit for HoloLens fits because it provides reusable UI, hand and gaze interaction components, spatial mapping helpers, and spatial anchors for persistent environment-aware simulations. HoloLens hardware supports real-world scale cues that make walkthrough and presence testing more grounded than desktop VR.

Teams targeting VIVE XR Elite headsets for hand-tracking-driven interactive training

VIVE XR Elite SDK fits because it focuses on device-targeted XR APIs and includes a hand tracking input pipeline plus controller input support for procedural walkthroughs. The ecosystem focus helps reduce glue code for simulation lifecycle patterns on VIVE XR Elite.

VR simulation users who need broad headset support and flexible controller mapping

SteamVR fits because it delivers a runtime and device layer for supported PCVR hardware plus Steam Input controller remapping across devices. Built-in tracking and room setup tools support stable simulation runs when multiple headsets are involved.

Teams prototyping VR simulations in the browser for cross-device testing

A-Frame fits because it uses a declarative HTML entity-component model to build VR scenes quickly and provides WebXR-compatible workflows. Event handling and interactive object composition are built in, which supports fast iteration for training prototypes.

Studios prototyping VR environments with custom assets, rigs, and animations

Blender fits because it supports VR Viewport mode for stereoscopic inspection with tracked controller input and includes modeling, rigging, animation, and rendering workflows for simulation assets. It works best as an asset creation and review layer rather than a dedicated VR simulation authoring environment.

Enterprise teams deploying standardized VR training modules with measurable learning progress

Strivr fits because it provides guided simulation journeys with a Learning Path builder plus progress analytics for completion and performance across learning paths. This makes it suitable when repeatability and reporting matter more than deep custom simulation logic.

Teams using business process simulations that benefit from immersive decision visualization

Virtonomics VR fits because it maps business simulation controls to immersive, interactive visual feedback focused on operational decision loops like production and trading. It prioritizes visualization of business system state changes over building new VR spaces from scratch.

Teams creating camera-driven interactive simulations with lightweight VR compatibility

Blippar Studio fits because it builds recognition-driven interactions using camera triggers and 3D overlays and supports publishing to run on consumer devices. It approximates VR training scenarios using device cameras, while VR-specific room-scale and controller mapping are not the primary emphasis.

Common Mistakes to Avoid

Common pitfalls come from mismatching authoring workflow to hardware targets, underestimating VR performance tuning effort, and choosing a platform built for learning delivery when bespoke interaction depth is required.

Choosing a VR authoring engine without planning for interaction customization

Unity 3D can require custom scripting and careful optimization for VR interaction patterns, so interaction-heavy training needs engineering time. Unreal Engine can also require significant VR performance tuning expertise to hit stable frame timing.

Targeting the wrong interaction input stack for the selected hardware

VIVE XR Elite SDK is tightly targeted to VIVE XR Elite headsets, so using it for non-VIVE experiences creates portability friction. Mixed Reality Toolkit requires careful design and tuning of hand and spatial input to avoid confusing mixed-reality UX behavior.

Assuming the runtime layer will solve controller and tracking setup issues automatically

SteamVR can simplify device compatibility, but setup and troubleshooting can become complex across mixed hardware. SteamVR performance tuning still requires tweaking settings to keep simulation runs stable.

Using web declarative tooling for deep physics-based simulation without planning for external tooling

A-Frame supports event handling and interactive 3D scene composition, but physics and other complex simulation behavior require external libraries. Blender's VR Viewport supports inspection, but it is not a replacement for a dedicated VR simulation engine.

Picking a training delivery platform when highly bespoke simulation logic is the goal

Strivr is optimized for structured learning paths and progress analytics, so advanced custom simulation logic is limited to what the platform supports. Virtonomics VR is focused on immersive visualization of business decision loops, so it is not a general-purpose VR world-building environment for custom interaction libraries.

How We Selected and Ranked These Tools

We evaluated every tool on three sub-dimensions with explicit weights. Features carry 0.40 of the overall score. Ease of use carries 0.30 of the overall score. Value carries 0.30 of the overall score. Overall equals 0.40 × features plus 0.30 × ease of use plus 0.30 × value. Unity 3D separated itself through strong features tied to XR development workflows, including XR device management, controller interaction tooling via XR Interaction Toolkit, physics and animation support, and prefab-based reusable modules, while still maintaining a workable ease of use score for teams building interactive training environments.
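The weighting above can be sketched in a few lines of Python. This is only an illustration of the stated formula: the function name, the 0 to 10 scale, and the example sub-scores are assumptions for demonstration, not figures from our scoring data.

```python
# Weights as stated in the methodology: features 0.40, ease of use 0.30, value 0.30.
WEIGHTS = {"features": 0.40, "ease_of_use": 0.30, "value": 0.30}


def overall_score(features: float, ease_of_use: float, value: float) -> float:
    """Return the weighted overall score for one tool."""
    sub_scores = {"features": features, "ease_of_use": ease_of_use, "value": value}
    return sum(WEIGHTS[name] * score for name, score in sub_scores.items())


# Hypothetical sub-scores on an assumed 0-10 scale (not taken from the rankings).
example = overall_score(features=9.0, ease_of_use=8.0, value=8.0)
print(round(example, 2))  # 0.40*9.0 + 0.30*8.0 + 0.30*8.0 = 8.4
```

Because features carry the largest weight, a one-point gain in features moves the overall score by 0.40, while the same gain in ease of use or value moves it by 0.30.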

Frequently Asked Questions About Virtual Reality Simulation Software

Which tool is best for building reusable interactive VR training modules without rewriting the whole project per headset?

Unity 3D fits this workflow because a single authoring project targets many VR runtimes and device classes, using XR device management, input, and camera controls. Prefab-based modular content helps teams maintain large training environments as reusable systems. Unreal Engine can also scale for complex interactions, but Unity’s XR Interaction Toolkit patterns often reduce custom glue for controller interactions.

What choice gives the highest visual fidelity for VR training scenes with complex interactions?

Unreal Engine is built for high-fidelity real-time rendering with production-grade tools, including VR preview and performance profiling for motion-controller input. Blueprint visual scripting helps prototype training scenario behavior quickly and iterate without deep code changes. Unity 3D also supports real-time rendering, but Unreal Engine’s rendering pipeline and asset workflows typically emphasize scene realism for interactive worlds.

How do developers create mixed-reality simulations that blend digital content with the user’s real environment?

HoloLens and Mixed Reality Toolkit support environment-aware simulation by combining spatial mapping helpers, spatial anchors, and hologram rendering in wearable hardware. HandInteraction and gaze-based UX components help build training walkthroughs that rely on spatial presence rather than a fully virtual stage. Unity 3D can implement MR experiences, but the Mixed Reality Toolkit accelerates device-specific UI and input patterns for HoloLens.

Which software is best when the target device is a VIVE XR Elite headset with hand tracking and passthrough use cases?

VIVE XR Elite SDK is the most direct fit because its platform-focused APIs cover hand tracking input, controller input, and passthrough scenarios. The SDK also provides common XR rendering and scene lifecycle patterns so prototypes become repeatable training scenarios on that device family. SteamVR can run on many headsets, but it is less device-specific than an SDK built around VIVE XR Elite capabilities.

What runtime helps teams support many headsets and controllers with consistent setup and controller mapping?

SteamVR provides a common runtime with broad device coverage plus Steam Input mapping to standardize controller behavior across supported hardware. Tracking and room setup tooling helps stabilize room-scale placement for VR simulations. Unity 3D and Unreal Engine produce the application builds, but SteamVR is the runtime layer that often determines how controllers map in practice.

Which option fits browser-based VR simulations where the team wants HTML and JavaScript workflows?

A-Frame supports browser-native authoring using an entity-component system with declarative HTML tags for cameras, lighting, and interactive objects. It loads 3D assets and handles events for interactive scenes, and it aligns with WebXR-compatible workflows for optional AR features. Blender can export assets, but A-Frame is the authoring layer designed for web-based VR scene composition.
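To make the declarative style concrete, here is a rough sketch that writes out a minimal A-Frame page as a string. It uses Python only as a neutral way to emit the markup; the helper name `aframe_scene`, the `valve` id, the label text, and the pinned 1.5.0 script URL are all illustrative assumptions, and event handling (normally attached in JavaScript) is left out.

```python
from html import escape


def aframe_scene(label: str) -> str:
    """Return a minimal A-Frame page as an HTML string (illustrative only)."""
    # Each tag is an entity; attributes such as camera, light, and position
    # are components configured declaratively in the markup.
    return f"""<!DOCTYPE html>
<html>
  <head>
    <!-- Assumed release URL; pin whichever A-Frame version the project uses. -->
    <script src="https://aframe.io/releases/1.5.0/aframe.min.js"></script>
  </head>
  <body>
    <a-scene>
      <a-entity camera look-controls position="0 1.6 0"></a-entity>
      <a-entity light="type: ambient; intensity: 0.6"></a-entity>
      <a-box id="valve" position="0 1.2 -2" color="#4CC3D9"></a-box>
      <a-text value="{escape(label)}" position="-0.5 2 -2"></a-text>
      <a-sky color="#ECECEC"></a-sky>
    </a-scene>
  </body>
</html>"""


if __name__ == "__main__":
    # Save the output as an .html file and open it in a WebXR-capable browser.
    print(aframe_scene("Step 1: locate the valve"))
```

The point of the sketch is that the camera, lighting, and an interactive object are all declared as tags with attributes, which is why small training prototypes can be assembled and revised quickly.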

How can teams use Blender effectively for VR simulation work without treating it as the primary real-time VR engine?

Blender works best as an environment creation and animation workstation where VR is used for stereoscopic inspection and tracked-controller review. VR Viewport mode helps teams iterate on spatial layouts and interaction blocking before implementing logic in a real-time VR engine. Unity 3D and Unreal Engine then handle runtime physics, XR interaction patterns, and scene streaming for the finished training simulation.

What tool supports camera-driven interactive simulations that approximate VR training when room-scale VR is not the focus?

Blippar Studio focuses on image-capture and recognition-driven interactions that can include 3D overlays and interactive triggers. It is suited for simulated training or product walkthroughs that run on consumer cameras and sensors; VR-specific tooling for room-scale setup, controllers, and comfort is not its primary emphasis. Strivr can deliver headset-based training journeys with analytics, but Blippar Studio targets camera-first interaction patterns.

Which platform is designed for enterprise VR training with learning paths and progress analytics instead of heavy custom development?

Strivr emphasizes guided simulation journeys with learning paths, scenario-based modules, and analytics that track learner progress across VR experiences. Content delivery is built for standardized practice where sequencing matters more than code-heavy customization. Unity 3D and Unreal Engine suit teams building bespoke training from scratch, but Strivr is built specifically around enterprise deployment and measurement.

Which software is best for immersive business simulation where decisions drive changes to system state rather than building a VR world from scratch?

Virtonomics VR targets operational decision-making by immersing users in interactive views of a simulated enterprise. It supports scenario-driven exploration of production, trade, and management levers tied to observable outcomes. Unity 3D and Unreal Engine can render custom simulations, but Virtonomics VR provides a business simulation workflow designed for spatial visualization of enterprise state changes.

Tools reviewed

Referenced in the comparison table and product reviews above.
