
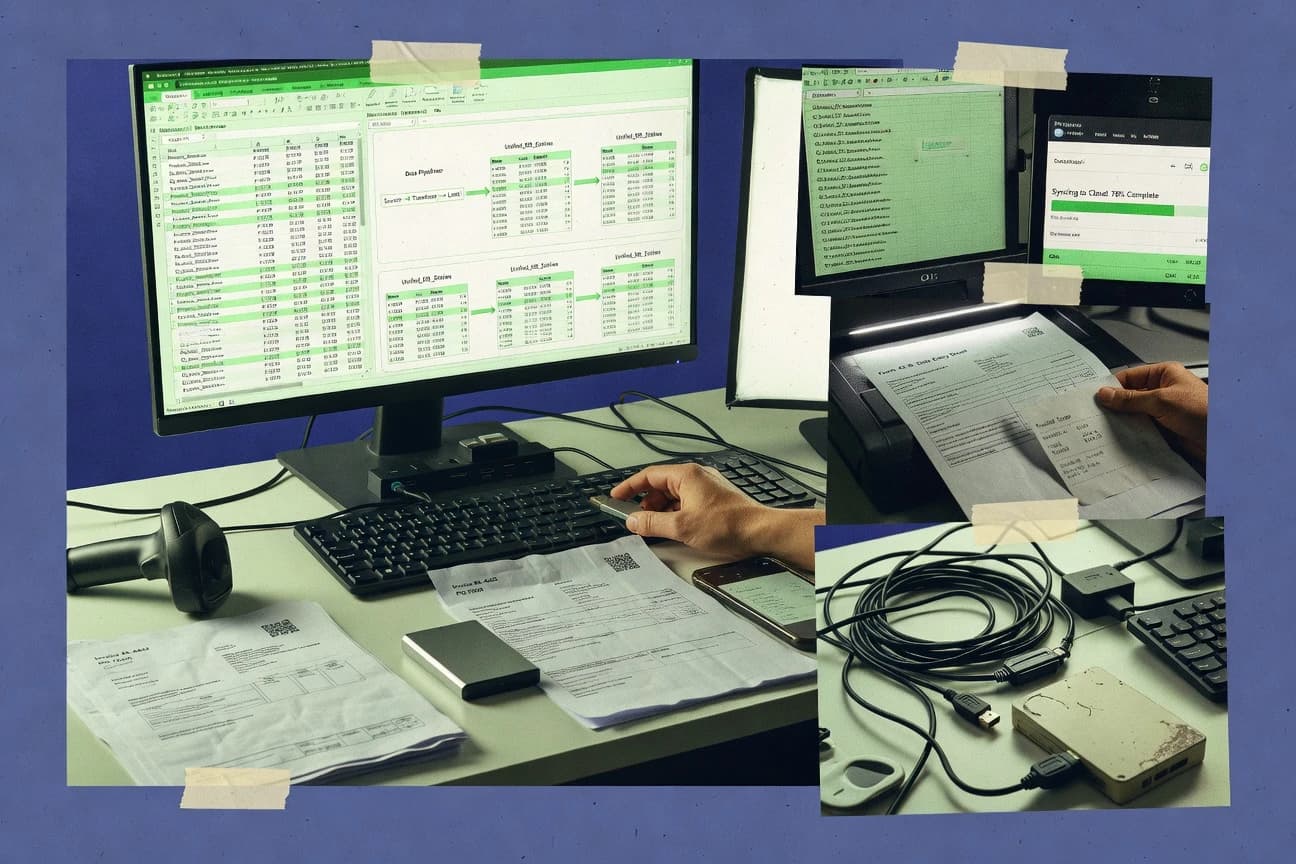

Top 8 Best Data Import Software of 2026

Explore the top 8 data import tools to streamline your workflows and find the integration approach that fits your needs.

How we ranked these tools

Core product claims cross-referenced against official documentation, changelogs, and independent technical reviews.

Analyzed video reviews and hundreds of written evaluations to capture real-world user experiences with each tool.

AI persona simulations modeled how different user types would experience each tool across common use cases and workflows.

Final rankings reviewed and approved by our editorial team with authority to override AI-generated scores based on domain expertise.

Score: Features 40% · Ease 30% · Value 30%

Gitnux may earn a commission through links on this page; this does not influence rankings.

Editor picks

Three quick recommendations before you dive into the full comparison below — each one leads on a different dimension.

Fivetran

Continuous sync via prebuilt connectors with automated schema change handling

Built for teams needing low-maintenance, continuous data ingestion into analytics warehouses.

Stitch

Incremental replication with automated schema handling for continuous data sync

Built for analytics teams automating ongoing SaaS to warehouse data imports.

Azure Data Factory

Mapping Data Flows for declarative, scalable transformation within ADF pipelines

Built for Azure-centric teams needing governed ETL orchestration and scalable imports.


Comparison Table

This comparison table evaluates data import software used to move data into warehouses and lakes, including Fivetran, Stitch, Azure Data Factory, AWS Glue, and Google Cloud Data Fusion. It highlights how each tool handles source connections, ingestion patterns, transformation support, scaling controls, and operational features so teams can match capabilities to their migration and ETL requirements.

| # | Tool | Description | Category | Overall | Features | Ease of Use | Value |
|---|---|---|---|---|---|---|---|
| 1 | Fivetran | Automated data connectors continuously sync data from SaaS apps and databases into analytics warehouses with managed schemas and built-in monitoring. | managed connectors | 9.0/10 | 9.3/10 | 8.8/10 | 8.9/10 |
| 2 | Stitch | Self-service and managed ingestion connects operational databases and SaaS sources to a data warehouse using batch and streaming sync modes. | ELT ingestion | 8.2/10 | 8.6/10 | 7.6/10 | 8.2/10 |
| 3 | Azure Data Factory | Orchestrates data movement and transformation by importing from many source systems through linked services and integration runtimes into target stores. | ETL orchestration | 7.9/10 | 8.4/10 | 7.4/10 | 7.6/10 |
| 4 | AWS Glue | Runs managed ETL jobs that crawl source schemas and import data into S3, data lakes, and analytics targets using Spark-based transforms. | managed ETL | 8.1/10 | 8.6/10 | 7.8/10 | 7.9/10 |
| 5 | Google Cloud Data Fusion | Provides a visual pipeline builder that imports and transforms data using prebuilt connectors and manages secure execution on Google Cloud. | visual data integration | 8.4/10 | 8.7/10 | 7.9/10 | 8.4/10 |
| 6 | Talend Data Integration | Imports, transforms, and loads data from heterogeneous systems using developer-built jobs or governed integrations into analytics destinations. | enterprise ETL | 8.2/10 | 8.7/10 | 7.6/10 | 8.0/10 |
| 7 | Airbyte | Runs connectors to extract data from many sources and load it into warehouses and lakes with incremental sync and schema mapping. | open-source ELT | 8.0/10 | 8.5/10 | 7.8/10 | 7.6/10 |
| 8 | Apache NiFi | Imports data via configurable processors and routes it through flows with backpressure, retry handling, and storage for analytics pipelines. | dataflow automation | 7.7/10 | 8.3/10 | 7.0/10 | 7.7/10 |


Fivetran

managed connectors

Automated data connectors continuously sync data from SaaS apps and databases into analytics warehouses with managed schemas and built-in monitoring.

Continuous sync via prebuilt connectors with automated schema change handling

Fivetran stands out for automated data ingestion with prebuilt connectors that continuously sync data into analytics warehouses. It supports schema handling for common sources and destinations, including structured and semi-structured data pipelines. Users can manage sync schedules, backfills, and transformations with built-in tooling and integration-friendly output for downstream BI.

Pros

- Large library of ready-to-use connectors for popular SaaS and databases

- Continuous syncing with automatic change detection reduces pipeline maintenance

- Strong warehouse-native workflow with predictable landing tables and schemas

Cons

- Limited flexibility for highly custom extraction logic compared to hand-built ETL

- Complex connector sets can require careful monitoring to avoid failed jobs

- Transformations can feel restrictive for advanced modeling workflows

Best For

Teams needing low-maintenance, continuous data ingestion into analytics warehouses


Stitch

ELT ingestion

Self-service and managed ingestion connects operational databases and SaaS sources to a data warehouse using batch and streaming sync modes.

Incremental replication with automated schema handling for continuous data sync

Stitch focuses specifically on moving data between systems using prebuilt connectors and schema-aware syncing. It supports both recurring replication and one-time backfills so imported datasets stay current without manual export jobs. The platform emphasizes normalization and mapping so data lands in analytics tools with consistent fields.

Pros

- Prebuilt connectors cover common SaaS and databases for faster setup

- Incremental syncing reduces repeated loads and supports near real-time updates

- Schema and field mapping help keep destination datasets consistent

- Backfills support rebuilding history when source schemas evolve

Cons

- Complex transformations often require external staging and custom logic

- Debugging failed sync jobs can be slow without strong observability

- Large schema migrations can need careful planning and coordination

Best For

Analytics teams automating ongoing SaaS to warehouse data imports

Azure Data Factory

ETL orchestration

Orchestrates data movement and transformation by importing from many source systems through linked services and integration runtimes into target stores.

Mapping Data Flows for declarative, scalable transformation within ADF pipelines

Azure Data Factory stands out for orchestrating data movement and transformation using a managed visual pipeline builder plus code-friendly JSON definitions. It supports importing from common sources and routing data into Azure data stores using built-in connectors, plus custom logic via mapping data flows and compute activities. Cross-workflow scheduling, dependency handling, and monitoring integrate with Azure operations to track pipeline runs and failures. For teams building repeatable ETL and ELT workflows, it offers a stronger governance and orchestration layer than many basic import tools.

Pros

- Visual pipeline designer maps import workflows into repeatable activities

- Rich source and sink connectors cover many import scenarios

- Managed orchestration supports schedules, dependencies, and retries

- Mapping data flows support scalable transformations without hand-coded ETL

Cons

- Design-time complexity rises when pipelines mix control flow and data flows

- Operational troubleshooting can require Azure-native monitoring know-how

- Fine-grained tuning of performance often needs deeper Spark and throughput knowledge

Best For

Azure-centric teams needing governed ETL orchestration and scalable imports

AWS Glue

managed ETL

Runs managed ETL jobs that crawl source schemas and import data into S3, data lakes, and analytics targets using Spark-based transforms.

Glue Crawlers that infer schemas and populate the Glue Data Catalog automatically

AWS Glue stands out by combining managed ETL jobs with a centralized data catalog for importing and transforming data across AWS analytics services. It supports schema discovery and automatic job generation using Glue crawlers and development endpoints, which speeds onboarding for new sources. Glue also integrates tightly with S3 and common ingestion patterns like batching from databases, streaming through Kinesis, and writing curated outputs back to S3 in optimized formats.

Pros

- Managed Spark ETL jobs reduce infrastructure work

- Glue Data Catalog centralizes schemas for imported datasets

- Crawlers automate schema inference for many source types

- Strong AWS integration for S3-based staging and curated outputs

- Built-in connectors for common databases and streaming sources

Cons

- Job tuning for performance often requires Spark and data-shape expertise

- Catalog and crawler setup can be complex for fast-changing schemas

- Local testing and debugging can be slower than code-first pipelines

Best For

AWS-centric teams importing and transforming data into S3 for analytics

Google Cloud Data Fusion

visual data integration

Provides a visual pipeline builder that imports and transforms data using prebuilt connectors and manages secure execution on Google Cloud.

Visual pipeline designer that compiles into scalable Spark-based ETL with connectors

Google Cloud Data Fusion stands out for its visual ETL workflow builder that generates pipelines for cloud data movement. It supports batch and streaming ingestion with a library of prebuilt connectors and transformation stages for common enterprise sources. Users can design, validate, and run data pipelines through a web UI and operationalize them in managed environments.

Pros

- Visual pipeline builder accelerates ETL development with reusable stages

- Broad connector catalog supports common cloud and on-prem data sources

- Built-in data quality and schema handling reduces manual validation work

- Supports both batch and streaming pipeline patterns

- Production-grade orchestration simplifies scheduling and job management

Cons

- Complex workflows can outgrow the visual model and need tuning

- Some advanced transformations require deeper understanding of pipeline semantics

- Debugging distributed pipeline failures can be slower than code-first ETL tools

Best For

Teams needing managed visual ETL with connectors for batch and streaming imports

Talend Data Integration

enterprise ETL

Imports, transforms, and loads data from heterogeneous systems using developer-built jobs or governed integrations into analytics destinations.

Data Quality components that embed validation and survivorship checks into ETL imports

Talend Data Integration stands out for its visual, code-optional ETL and data integration design, backed by a component-based pipeline builder. It supports importing and transforming data across common sources using connectors, then routing results into target systems with scheduling and job management. Strong governance features like data quality rules and metadata-driven development help teams keep imports consistent across environments.

Pros

- Visual ETL design with reusable components for fast import pipeline creation

- Broad connector coverage for ingesting from common databases and file formats

- Built-in data quality capabilities to validate records during import

Cons

- Project complexity rises quickly when many jobs and mappings share assets

- Requires platform and runtime tuning for reliable high-volume imports

- Enterprise governance features can add overhead for small import scenarios

Best For

Enterprises building repeatable ETL imports with data quality and governance needs

Airbyte

open-source ELT

Runs connectors to extract data from many sources and load it into warehouses and lakes with incremental sync and schema mapping.

Incremental sync with stateful replication and replayable Airbyte runs

Airbyte stands out for its connector ecosystem and its visual connector builder approach that reduces integration work for common sources and targets. It supports scheduled and incremental data replication with replayable runs, so imports can be managed like repeatable pipelines. Transformations are handled via built-in normalization patterns and optional downstream processing, which keeps ingestion focused on reliable extraction and loading.

Pros

- Large connector catalog for sources and destinations across analytics stacks

- Incremental sync support reduces load and speeds up repeated imports

- Job orchestration with logs and retryable runs improves operational reliability

- Schema discovery and mapping tools cut time to first successful sync

Cons

- Advanced transformations often require external tooling after ingestion

- Connector-specific settings can create friction when sources behave inconsistently

- Large-scale deployments need careful tuning of resources and concurrency

Best For

Teams building reliable ELT ingestion pipelines with many heterogeneous data sources

Apache NiFi

dataflow automation

Imports data via configurable processors and routes it through flows with backpressure, retry handling, and storage for analytics pipelines.

Provenance tracking that records per-record history across the dataflow

Apache NiFi stands out for building data ingestion pipelines with a visual drag-and-drop workflow plus strong operational controls like backpressure and prioritization. It supports many source and destination integrations through processors, enabling batch and streaming imports with transformation steps in the same flow. Its dataflow model and observability features like provenance records make it easier to trace failures and data movement across complex import paths.

Pros

- Visual workflow design with processors for diverse import sources

- Built-in backpressure and flow control prevent overload during ingestion

- Provenance records trace data lineage through each processor

- Flexible transformations with scripting and built-in data formats

Cons

- Large workflows require operational tuning and careful processor configuration

- Debugging can be slower when failures span multiple queues and connections

- Schema management and type validation rely on careful mapping in flows

Best For

Teams building complex, monitored data import pipelines across systems

Conclusion

After we evaluated 8 data import tools, Fivetran stood out as our overall top pick. It scored highest across our combined criteria of features, ease of use, and value, which is why it sits at #1 in the rankings above.

Use the comparison table and detailed reviews above to validate the fit against your own requirements before committing to a tool.

How to Choose the Right Data Import Software

This buyer’s guide explains how to choose Data Import Software for continuous replication, batch and streaming ingestion, and governed ETL workflows. The guide covers the eight tools reviewed above: Fivetran, Stitch, Azure Data Factory, AWS Glue, Google Cloud Data Fusion, Talend Data Integration, Airbyte, and Apache NiFi. It focuses on concrete evaluation criteria drawn from how these tools import, transform, monitor, and recover data pipelines.

What Is Data Import Software?

Data Import Software connects source systems like SaaS apps, operational databases, and event streams to analytics targets like data warehouses and lakes. It solves repetitive data movement, schema mapping, and pipeline reliability problems that break reporting when extraction jobs drift. Tools like Fivetran and Stitch automate continuous syncing with schema-aware handling so downstream analytics keep a stable structure. For teams that need orchestration and transformation control, Azure Data Factory and AWS Glue provide governed pipeline execution with repeatable workflows.

Key Features to Look For

The right feature set determines whether imports stay reliable under schema change, high volume, and multi-step transformation requirements.

Continuous sync with automated schema change handling

Fivetran excels when prebuilt connectors continuously sync data while automatically handling schema changes so pipelines require less manual intervention. Stitch also supports schema and field mapping with incremental replication so imported datasets stay consistent as sources evolve.
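To make "schema change handling" more concrete, the following is a minimal Python sketch of how an importer might detect added, removed, and retyped columns between two syncs. It is an illustration under stated assumptions, not Fivetran's or Stitch's implementation; `diff_schema`, the type names, and the example columns are all hypothetical.

```python
from typing import Dict, List

Schema = Dict[str, str]  # column name -> type name (hypothetical representation)


def diff_schema(old: Schema, new: Schema) -> Dict[str, List]:
    """Compare two column->type mappings and report what changed."""
    added = sorted(c for c in new if c not in old)
    removed = sorted(c for c in old if c not in new)
    retyped = sorted(
        (c, old[c], new[c]) for c in old if c in new and old[c] != new[c]
    )
    return {"added": added, "removed": removed, "retyped": retyped}


# Hypothetical source schema before and after an upstream change.
previous = {"id": "int", "email": "string", "plan": "string"}
current = {"id": "int", "email": "string", "plan": "int", "signup_at": "timestamp"}

changes = diff_schema(previous, current)
print(changes)
```

A managed tool would typically act on such a diff automatically (for example by adding the new column at the destination), whereas a hand-built pipeline has to decide what to do with each category of change.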

Incremental replication with replayable runs and stateful sync

Airbyte supports incremental sync with stateful replication and replayable runs so failed imports can be rerun without rebuilding everything. Stitch delivers incremental syncing and supports one-time backfills so teams can rebuild history when source schemas evolve.
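The idea of stateful incremental sync with replay can be sketched in a few lines of Python. This is a simplified model assuming a monotonically increasing `updated_at` cursor on each row; `incremental_sync` and the state layout are hypothetical and do not reflect Airbyte's or Stitch's actual APIs.

```python
from typing import Dict, Iterable, List, Optional


def incremental_sync(
    rows: Iterable[Dict],
    state: Dict[str, Optional[int]],
    cursor_field: str = "updated_at",
) -> List[Dict]:
    """Return rows newer than the saved cursor and advance the cursor in `state`."""
    cursor = state.get("cursor")
    fresh = [r for r in rows if cursor is None or r[cursor_field] > cursor]
    if fresh:
        state["cursor"] = max(r[cursor_field] for r in fresh)
    return fresh


source = [
    {"id": 1, "updated_at": 100},
    {"id": 2, "updated_at": 105},
    {"id": 3, "updated_at": 110},
]

state = {"cursor": None}
first = incremental_sync(source, state)    # initial load: every row
source.append({"id": 4, "updated_at": 120})
second = incremental_sync(source, state)   # only the row added since the last run

# Replaying a run: restore an earlier cursor value and sync again.
replay_state = {"cursor": 105}
replayed = incremental_sync(source, replay_state)
```

Because the cursor is stored separately from the data, a failed or incorrect run can be repeated from a known point without reloading the full history.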

Declarative orchestration with governed pipeline scheduling and monitoring

Azure Data Factory provides a managed orchestration layer with scheduling, dependency handling, retries, and monitoring tied to Azure operations. This matters for repeatable ETL and ELT workflows where control flow and transformation stages must be coordinated.
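As a rough illustration of what dependency handling and retries mean in an orchestration layer, here is a minimal Python sketch using the standard-library `graphlib` module (Python 3.9+). It is not Azure Data Factory's pipeline model or JSON format; `run_pipeline`, the task names, and the simulated transient failure are assumptions made for the example.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set


def run_pipeline(
    tasks: Dict[str, Callable[[], None]],
    depends_on: Dict[str, Set[str]],
    max_retries: int = 2,
) -> List[str]:
    """Run tasks in dependency order, retrying a failed task up to max_retries times."""
    completed: List[str] = []
    for name in TopologicalSorter(depends_on).static_order():
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                completed.append(name)
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retries exhausted: fail the whole pipeline
    return completed


attempts = {"load": 0}


def extract() -> None:
    pass


def transform() -> None:
    pass


def load() -> None:
    # Simulate a transient failure on the first attempt only.
    attempts["load"] += 1
    if attempts["load"] == 1:
        raise RuntimeError("transient failure")


tasks = {"extract": extract, "transform": transform, "load": load}
depends_on = {"extract": set(), "transform": {"extract"}, "load": {"transform"}}
order = run_pipeline(tasks, depends_on)
```

Here `load` fails once and succeeds on the retry, so the pipeline still completes in dependency order.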

Managed visual ETL that compiles to scalable execution

Google Cloud Data Fusion uses a visual pipeline designer that compiles into scalable Spark-based ETL with prebuilt connectors. This helps teams build batch and streaming ingestion patterns with reusable transformation stages while keeping execution managed.

Schema discovery and centralized cataloging for imported datasets

AWS Glue stands out with Glue Crawlers that infer schemas and populate the Glue Data Catalog automatically. This is useful when onboarding new sources or maintaining consistent schema definitions across many jobs.
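Schema inference of the kind a crawler performs can be approximated with a short Python sketch. This is a simplified illustration, not the AWS Glue crawler algorithm; `infer_schema`, the widening rule, and the sample records are hypothetical.

```python
from typing import Any, Dict, Iterable


def infer_schema(records: Iterable[Dict[str, Any]]) -> Dict[str, str]:
    """Infer a column -> type-name mapping from sample records."""
    schema: Dict[str, str] = {}
    for record in records:
        for column, value in record.items():
            if value is None:
                continue  # nulls carry no type information
            observed = type(value).__name__
            known = schema.get(column)
            if known is None:
                schema[column] = observed
            elif known != observed:
                # Widen int + float to float; fall back to str for other conflicts.
                widened = {known, observed} == {"int", "float"}
                schema[column] = "float" if widened else "str"
    return schema


samples = [
    {"id": 1, "amount": 10, "note": None},
    {"id": 2, "amount": 12.5, "note": "rush"},
    {"id": "A3", "amount": 7},
]

schema = infer_schema(samples)
print(schema)
```

Storing the inferred mapping in one shared place is the role a catalog plays: every job reads the same definition instead of re-deriving it.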

Operational observability for complex ingestion flows

Apache NiFi provides provenance records that trace data movement and per-record history through each processor. This matters for debugging multi-queue failures in batch and streaming flows where tracing each step prevents silent data loss.
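The value of per-record history is easier to see in a small model. The Python sketch below attaches a provenance log to each record as it passes through a chain of processing steps. It is a conceptual illustration only and does not use NiFi's provenance repository or APIs; `FlowRecord`, `run_flow`, and the processors are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class FlowRecord:
    record_id: str
    payload: dict
    # One (processor name, event) entry per step the record passed through.
    provenance: List[Tuple[str, str]] = field(default_factory=list)


def run_flow(
    record: FlowRecord,
    processors: List[Tuple[str, Callable[[dict], dict]]],
) -> FlowRecord:
    """Apply processors in order, logging one provenance event per step."""
    for name, fn in processors:
        try:
            record.payload = fn(record.payload)
            record.provenance.append((name, "OK"))
        except Exception as exc:
            record.provenance.append((name, f"FAILED: {exc}"))
            break  # stop routing this record; its history shows where it failed
    return record


def validate(payload: dict) -> dict:
    if payload["amount"] < 0:
        raise ValueError("negative amount")
    return payload


processors = [
    ("parse", lambda p: {**p, "amount": float(p["amount"])}),
    ("validate", validate),
    ("load", lambda p: p),
]

rec = run_flow(FlowRecord("r1", {"amount": "-5"}), processors)
print(rec.provenance)
```

The log shows that the record was parsed successfully and then rejected at validation, which is the kind of step-level answer that prevents a failed record from disappearing silently.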

How to Choose the Right Data Import Software

Selection should start with how ingestion must run and evolve, then match transformation depth, observability, and governance to the chosen platform.

Choose the sync pattern: continuous replication or controlled ETL schedules

If the goal is low-maintenance continuous ingestion into analytics warehouses, Fivetran is built around continuous sync via prebuilt connectors with automated schema handling. If the goal is incremental replication plus one-time backfills, Stitch supports recurring replication and can rebuild history through backfills when schemas evolve. If the goal is governed, scheduled pipelines inside Azure, Azure Data Factory provides orchestration with dependency handling and monitoring tied to pipeline runs.

Match transformation depth to the platform model

If transformations need to be warehouse-native and connector-driven, Fivetran provides an integration-friendly workflow with landing tables and schemas designed for downstream BI. If transformations must be declarative and scalable inside an orchestration layer, Azure Data Factory supports mapping data flows that scale transformations within pipelines. If Spark-based ETL with schema catalog support fits the target architecture, AWS Glue pairs managed Spark ETL jobs with Glue Crawlers that populate the Data Catalog.

Plan for schema evolution and field consistency

For frequent upstream schema changes, prioritize tools that automate schema change handling like Fivetran and Stitch. If schema discovery and centralized definitions reduce onboarding friction, AWS Glue’s Glue Crawlers infer schemas and populate the Glue Data Catalog to keep datasets consistent across jobs. If incremental mapping and consistent fields are required for many sources, Airbyte provides schema discovery and mapping tools that speed time to first successful sync.

Validate operational reliability with logs, retries, and traceability

If replayability and operational reliability matter for ingestion at scale, Airbyte supports replayable runs with orchestration logs and retryable execution. If traceability through each step is the priority for complex flows, Apache NiFi records provenance and per-record history across processors. For managed orchestration with scheduling and retries, Azure Data Factory supports monitoring of pipeline runs and failure handling.

Select based on team workflow: visual, code-friendly, or hybrid governance

Teams that prefer visual ETL design should compare Google Cloud Data Fusion and Talend Data Integration, since both provide visual pipeline building with reusable stages or components. Teams that want stronger orchestration and repeatable governance inside Azure should prioritize Azure Data Factory. Teams that need componentized ETL with embedded data validation should evaluate Talend Data Integration because it includes data quality rules and survivorship checks during imports.

Who Needs Data Import Software?

Data Import Software fits teams that must move and shape data from operational systems to analytics targets on a repeatable schedule or continuously.

Teams needing low-maintenance continuous ingestion into analytics warehouses

Fivetran is the best match because prebuilt connectors continuously sync data and automate schema change handling for landing tables and schemas. This approach reduces pipeline maintenance compared with manual extraction logic and helps keep downstream BI stable.

Analytics teams automating ongoing SaaS to warehouse imports with incremental updates

Stitch fits teams that want incremental replication and automated schema handling so datasets stay current without manual export jobs. Stitch also supports backfills to rebuild history when source schemas evolve.

Azure-centric teams that need governed ETL orchestration with scalable transformation

Azure Data Factory fits teams that require scheduling, dependency handling, retries, and monitoring integrated into Azure operations. It also supports mapping data flows for declarative transformations inside pipelines.

Enterprises that must embed data quality validation into repeatable ETL imports

Talend Data Integration is a fit for environments that need governance features like data quality rules and metadata-driven development. It embeds validation and survivorship checks into ETL imports so bad records can be controlled during ingestion.

Common Mistakes to Avoid

Common failures cluster around schema change handling gaps, insufficient observability, and choosing the wrong transformation model for the operational workflow.

Building complex custom extraction logic when connector-based sync is the priority

Fivetran is designed for continuous sync with prebuilt connectors and automated schema change handling, which reduces reliance on highly custom extraction logic. Stitch also focuses on incremental replication and schema-aware syncing so teams avoid brittle manual export jobs.

Assuming visual pipelines cover advanced transformation needs without additional tooling

With Stitch, complex transformations often require external staging and custom logic, which affects projects that expect all transformation to happen inside the importer. Google Cloud Data Fusion and Apache NiFi support transformation stages, but large workflows can still require deeper tuning and careful processor configuration.

Underestimating operational debugging complexity in multi-step ingestion systems

Apache NiFi’s provenance records help trace failures across processors, which reduces guesswork during debugging of distributed ingestion paths. Airbyte provides logs, retryable runs, and replayability that also reduce time spent diagnosing failed sync jobs.

Skipping schema discovery and catalog management when adding many sources

AWS Glue’s Glue Crawlers automate schema inference and populate the Glue Data Catalog, which prevents schema drift across newly added sources. Without this kind of cataloging, teams using generic import flows often spend more time reconciling field definitions across jobs.

How We Selected and Ranked These Tools

We evaluated every tool on three sub-dimensions with features weighted at 0.4, ease of use weighted at 0.3, and value weighted at 0.3. The overall rating is the weighted average computed as overall = 0.40 × features + 0.30 × ease of use + 0.30 × value. Fivetran separated itself from lower-ranked options by combining connector-driven continuous sync with automated schema change handling, which scored strongly in the features dimension while still keeping ease of use high through managed connector behavior. Tools that required more hands-on transformation work or more complex operational tuning landed lower when compared against connector-native ingestion and schema automation.
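The weighted average described above can be reproduced with a few lines of Python. The weights and the Fivetran sub-scores come from this page; the function and variable names are illustrative, and how the published overall figures were rounded is not stated, so the sketch compares against the unrounded value.

```python
WEIGHTS = {"features": 0.40, "ease_of_use": 0.30, "value": 0.30}


def overall_score(features: float, ease_of_use: float, value: float) -> float:
    """Weighted average: 0.40 x features + 0.30 x ease of use + 0.30 x value."""
    return (
        WEIGHTS["features"] * features
        + WEIGHTS["ease_of_use"] * ease_of_use
        + WEIGHTS["value"] * value
    )


# Fivetran's sub-scores from the comparison table: 9.3, 8.8, 8.9.
fivetran = overall_score(9.3, 8.8, 8.9)
print(round(fivetran, 2))
```

For Fivetran this gives approximately 9.03, consistent with the 9.0/10 overall score shown in the comparison table.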

Frequently Asked Questions About Data Import Software

Which data import software best supports continuous, low-maintenance syncing into a warehouse?

Fivetran is built for continuous ingestion using prebuilt connectors that keep datasets synchronized without manual export jobs. Stitch also supports ongoing replication with schema-aware syncing, but Fivetran emphasizes continuous sync into analytics warehouses as a primary workflow.

How do Fivetran and Stitch differ for incremental replication and schema changes?

Fivetran delivers continuous sync and handles common schema changes automatically for downstream analytics consumption. Stitch focuses on incremental replication with schema-aware mappings so imported datasets land with consistent fields while staying current.

Which tool fits governed ETL orchestration with dependency handling and monitoring?

Azure Data Factory provides pipeline orchestration with scheduled workflows, dependency management, and monitoring integrated into Azure operations. Talend Data Integration supports repeatable ETL with scheduling and job management, but Azure Data Factory is the stronger option for Azure-centric governance around pipeline runs.

What is the best option for AWS-based imports into S3 with schema discovery?

AWS Glue stands out for AWS-centric data imports that use Glue crawlers to infer schemas and populate the Glue Data Catalog. It also supports common ingestion patterns like batching from databases and streaming through Kinesis into S3-based analytics outputs.

Which platform is strongest for visual ETL building with both batch and streaming connectors?

Google Cloud Data Fusion provides a web UI to design, validate, and run batch and streaming pipelines using prebuilt connectors and transformation stages. Apache NiFi is also visual, but it focuses on operational control and dataflow tracing in a graph model rather than managed Spark-based pipeline compilation.

Which tool is best when data import needs built-in data quality validation inside the pipeline?

Talend Data Integration includes data quality components that embed validation and survivorship checks into import jobs. Apache NiFi can add validation steps in the flow using processors, but Talend integrates validation as part of the ETL component model.

What tool supports replayable ingestion runs and stateful incremental replication?

Airbyte supports incremental sync with stateful replication and replayable runs, which makes it easier to re-run imports after fixes. Fivetran also manages continuous sync, but Airbyte’s replay and state management is a central workflow feature.

How do NiFi and Data Fusion differ for monitoring and operational observability during imports?

Apache NiFi provides provenance records that track per-record history across the dataflow, making failures and movement easier to trace. Google Cloud Data Fusion offers operationalized pipeline runs through its managed execution model, but NiFi’s provenance is more granular at the flow level.

Which tool helps teams normalize and map heterogeneous source data into consistent analytics fields?

Stitch emphasizes normalization and mapping so imported datasets land in analytics tools with consistent fields. Airbyte also supports transformation via normalization patterns, but Stitch is specifically oriented around schema-aware replication with consistent field layouts.

What should teams choose when they need end-to-end transformation plus ingestion in the same workflow?

Azure Data Factory supports mapping data flows and compute activities that combine transformation and orchestration for repeatable ETL and ELT workflows. Apache NiFi can run transformation steps inside the same drag-and-drop dataflow, using processors for batch and streaming imports with strong operational controls.
