GITNUX SOFTWARE ADVICE

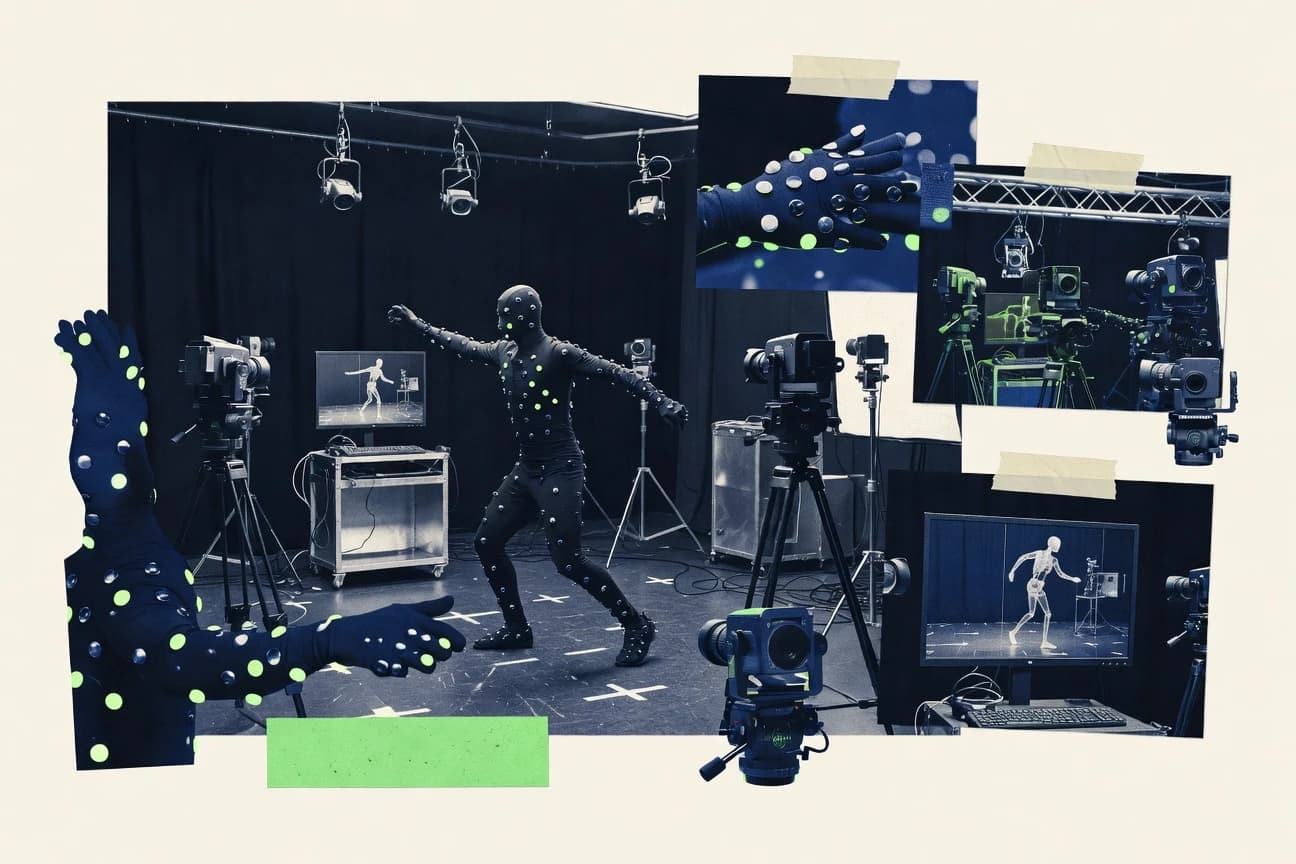

Top 10 Best Motion Capture Software of 2026

Discover the top 10 motion capture software tools for animation, gaming, and more, and find the right fit for your workflow.

How we ranked these tools

Core product claims cross-referenced against official documentation, changelogs, and independent technical reviews.

Analyzed video reviews and hundreds of written evaluations to capture real-world user experiences with each tool.

AI persona simulations modeled how different user types would experience each tool across common use cases and workflows.

Final rankings reviewed and approved by our editorial team with authority to override AI-generated scores based on domain expertise.

Score: Features 40% · Ease 30% · Value 30%
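As a worked example of this scoring formula, the sketch below applies the stated 40/30/30 weights to sub-scores taken from the comparison table. It assumes a plain weighted sum. The published Overall column does not equal this sum exactly (OptiTrack's sub-scores give 8.67 against a listed 9.4), which fits the editorial review step above that can adjust final scores.

```python
# Stated weights from the methodology line: Features 40%, Ease 30%, Value 30%.
WEIGHTS = {"features": 0.4, "ease": 0.3, "value": 0.3}

# Sub-scores (out of 10) copied from the comparison table for a few tools.
SUBSCORES = {
    "OptiTrack": (9.6, 7.9, 8.2),
    "Vicon": (9.2, 7.6, 7.8),
    "Xsens MVN": (9.0, 7.6, 7.4),
    "Rokoko Studio": (8.6, 8.8, 7.4),
}


def weighted_score(features: float, ease: float, value: float) -> float:
    """Linear combination of the three sub-scores, rounded to two decimals."""
    total = (
        WEIGHTS["features"] * features
        + WEIGHTS["ease"] * ease
        + WEIGHTS["value"] * value
    )
    return round(total, 2)


if __name__ == "__main__":
    for tool, scores in SUBSCORES.items():
        print(f"{tool}: {weighted_score(*scores)}")
```

Running it shows, for example, that Vicon and Rokoko Studio land on the same weighted value (8.3) even though their strengths differ: Vicon leads on features, Rokoko Studio on ease of use.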

Gitnux may earn a commission through links on this page; this does not influence rankings.

Editor picks

Three quick recommendations before you dive into the full comparison below; each one leads on a different dimension.

OptiTrack

Real-time mocap streaming and calibration across OptiTrack camera systems

Built for research labs and studios needing precise marker mocap exports with dependable sync.

Vicon

Vicon calibration and capture workflow tooling for consistent optical accuracy

Built for studios and biomechanics labs needing accurate optical motion capture workflows.

Xsens MVN

Inertial MVN suit full-body joint capture with real-time data streaming and export

Built for studios and labs needing repeatable full-body capture without multi-camera setups.


Comparison Table

This comparison table evaluates motion capture software and systems used for character animation, robotics research, and real-time performance capture, including OptiTrack, Vicon, Xsens MVN, Noitom Perception Neuron, and Rokoko Studio. You will compare hardware and software workflows, capture modes, calibration and setup effort, tracking accuracy signals, and export paths into common animation and simulation pipelines.

| # | Tool | Description | Category | Overall | Features | Ease of Use | Value |
|---|---|---|---|---|---|---|---|
| 1 | OptiTrack | Real-time marker-based motion capture using the OptiTrack camera system and Motive software for high-precision tracking of human and object movement. | marker-based | 9.4/10 | 9.6/10 | 7.9/10 | 8.2/10 |
| 2 | Vicon | Marker-based performance capture with the Vicon software suite for calibration, real-time capture, and biomechanics-ready outputs. | marker-based | 8.6/10 | 9.2/10 | 7.6/10 | 7.8/10 |
| 3 | Xsens MVN | Inertial sensors capture full-body motion for animation and analysis with MVN Analyze and MVN Awinda-style workflows. | inertial | 8.2/10 | 9.0/10 | 7.6/10 | 7.4/10 |
| 4 | Noitom Perception Neuron | Inertial motion capture for performers, with capture software and exports that support animation pipelines. | inertial | 7.4/10 | 7.8/10 | 7.0/10 | 7.9/10 |
| 5 | Rokoko Studio | Captures motion from inertial Rokoko hardware and provides real-time preview plus retargeting workflows to common character rigs. | cloud-ready | 8.1/10 | 8.6/10 | 8.8/10 | 7.4/10 |
| 6 | Rokoko Vision | Body pose estimation from cameras generates motion capture data without optical marker setups. | camera-based | 7.7/10 | 8.2/10 | 8.6/10 | 7.0/10 |
| 7 | DeepMotion | AI motion capture from video with model-driven body reconstruction and exports for animation retargeting. | AI-video | 7.6/10 | 8.1/10 | 7.1/10 | 7.8/10 |
| 8 | Mixamo | Retargets a library of motion-captured animations onto uploaded, auto-rigged characters, with outputs usable in animation software. | retargeting | 7.6/10 | 7.4/10 | 8.6/10 | 8.2/10 |
| 9 | Blender Add-on Motion Capture (Manuel Bastionon Scripts) | GitHub-hosted Blender tooling for common motion capture processing and importing workflows, covering retargeting and animation cleanup inside Blender. | open-source | 6.8/10 | 7.1/10 | 6.4/10 | 7.3/10 |
| 10 | ROS motion capture packages (mocap toolchain) | ROS-compatible mocap packages for streaming, parsing, and transforming motion capture data for robotics and animation pipelines. | open-source | 6.6/10 | 7.0/10 | 5.6/10 | 7.2/10 |


OptiTrack

Category: marker-based

Real-time marker-based motion capture uses the OptiTrack camera system with the Motive software for high-precision tracking of human and object movement.

Real-time mocap streaming and calibration across OptiTrack camera systems

OptiTrack stands out for high-precision marker-based motion capture built on dedicated camera tracking and synchronization hardware. It supports real-time and offline mocap workflows with skeleton retargeting, rigid body tracking, and robust calibration for repeatable capture sessions. Its motion data exports feed downstream animation and analysis pipelines, covering use cases from biomechanics to robotics testing.

Pros

- High-accuracy marker-based tracking with strong sub-millimeter stability

- Dedicated capture ecosystem enables reliable timing and synchronization

- Broad export-ready data workflows for animation and scientific analysis

Cons

- Hardware-centric setup requires careful calibration and room planning

- Workflow complexity increases with multi-camera and multi-system synchronization needs

- Total cost of ownership is high for small teams

Best For

Research labs and studios needing precise marker mocap exports with dependable sync


Vicon

Category: marker-based

Vicon motion capture delivers marker-based performance capture with the Vicon software suite for calibration, real-time capture, and biomechanics-ready outputs.

Vicon calibration and capture workflow tooling for consistent optical accuracy

Vicon stands out with a long-established motion capture stack built around high-precision optical capture and mature calibration workflows. It supports detailed 3D marker-based tracking, robust subject tracking, and production-grade capture-to-analytics pipelines for biomechanics and animation. Its data management and interoperability are strong because outputs in standard formats such as C3D and FBX map cleanly into downstream tools and custom workflows. It also benefits from a large ecosystem of training and integration patterns used in research labs and studios.

Pros

- High-precision optical tracking with dependable marker labeling performance

- Strong calibration and capture workflow tools for consistent repeatability

- Comprehensive data pipeline supports biomechanical analysis and animation use

- Interoperable outputs integrate well with downstream DCC and research tooling

Cons

- Hardware and software setup requires specialized operators and planning

- Licensing and system costs can outweigh needs for casual capture projects

- Marker-based workflows can struggle with occlusion in complex scenes

Best For

Studios and biomechanics labs needing accurate optical motion capture workflows

Xsens MVN

Category: inertial

Xsens MVN uses inertial sensors to capture full-body motion for animation and analysis with MVN Analyze and MVN Awinda-style workflows.

Inertial MVN suit full-body joint capture with real-time data streaming and export

Xsens MVN stands out for suit-based inertial motion capture that produces real-time body joint kinematics without cameras. It supports full-body tracking with calibration workflows, standardized skeleton outputs, and motion export for animation and analysis pipelines. The MVN software handles streaming, recording, and post-processing of trajectories for downstream tools. The system works best when you need consistent wearable capture across spaces where camera views are limited or obstructed.

Pros

- Wearable inertial tracking enables indoor and outdoor capture without cameras

- Full-body joint output supports animation rigs and biomechanics workflows

- Real-time streaming workflows help drive live visualization and analysis

Cons

- Suit fitting and calibration take training to achieve repeatable results

- Inertial sensors can drift without proper calibration and setup discipline

- Software licensing cost can outweigh value for small teams

Best For

Studios and labs needing repeatable full-body capture without multi-camera setups


Noitom Perception Neuron

Category: inertial

Perception Neuron provides inertial motion capture for performers with capture software and exports that support animation pipelines.

Perception Neuron live inertial capture streams 3D full-body motion for real-time character testing

Noitom Perception Neuron stands out for turning suit-based inertial sensor motion capture into a full-body workflow without optical stage cameras. It captures 3D human motion from multiple inertial measurement units (IMUs), then streams or records the data for retargeting to character rigs. The software focuses on calibration, recording, and exported motion formats for animation and analysis. Live capture and low-latency monitoring make it practical for rehearsals and rapid iteration.

Pros

- Inertial full-body capture avoids camera setup and occlusion issues

- Live capture supports quick rehearsal and iteration workflows

- Export-ready motion data fits common animation and analysis pipelines

- Calibration tools help align sensor output to a usable body model

Cons

- Inertial drift can accumulate during long takes

- Marker-free sensor capture can struggle with fast or subtle motions

- Setup and tuning still require time for reliable results

- Advanced cleanup and editing depend on downstream tools

Best For

Indie animators and small teams needing full-body capture without optical stages

Rokoko Studio

Category: cloud-ready

Rokoko Studio captures motion with inertial Rokoko hardware and provides real-time preview plus retargeting workflows to common character rigs.

Live capture preview with one-pass retargeting and export for animation pipelines

Rokoko Studio stands out for a fast motion-capture workflow that starts with live performer data from Rokoko hardware and IP streaming sources. It delivers real-time preview, motion cleanup, retargeting, and export for common animation pipelines. The software supports organizing takes, batch processing, and applying consistent skeleton mappings across sessions. It is strongest at producing usable character motion quickly, with less focus on deeply customizable mocap physics and tracking hardware tuning.

Pros

- Real-time preview speeds capture decisions and reduces reshoots

- Motion cleanup and retargeting create usable animation quickly

- Take organization and export streamline production handoffs

- Works well with Rokoko capture devices and common pipelines

Cons

- Advanced tracking calibration controls are limited compared to pro suites

- Higher-end facial and full-body workflows can require extra tools

- Retargeting quality depends heavily on consistent performer setup

Best For

Studios needing fast retargeting and exports from Rokoko capture

Rokoko Vision

Category: camera-based

Rokoko Vision uses body pose estimation from cameras to generate motion capture data without optical marker setups.

Real-time webcam motion capture that streams performance data for immediate animation use

Rokoko Vision stands out with a real-time, webcam-based motion capture workflow that prioritizes speed to usable animation. It captures body motion from a camera feed and streams results into common animation pipelines, reducing the setup time compared to traditional full-sensor stage systems. The software focuses on quick iteration for animators and content teams who need motion data immediately for blocking, retargeting, and animation refinement. Its output quality is strong for human performance, but it relies on clear visibility and consistent camera framing to avoid tracking gaps.

Pros

- Real-time webcam capture speeds up first animation output.

- Works well for body performance blocking and rapid iteration.

- Fewer setup steps than multi-sensor capture rigs.

- Integrates into common motion-to-animation workflows.

Cons

- Tracking degrades with occlusion, fast motion, or when hands leave the frame.

- Camera placement and lighting strongly affect capture stability.

- Less suitable for high-precision face and finger capture compared to dedicated systems.

Best For

Small teams needing fast body motion capture for animation previews and iteration


DeepMotion

Category: AI-video

DeepMotion delivers AI motion capture from video with model-driven body reconstruction and exports for animation retargeting.

AI video-to-motion capture that turns footage into retargetable animation data

DeepMotion stands out for production-focused motion capture output that converts real video into usable animation data. It provides AI-driven capture, character motion retargeting to rigs, and export options for common animation and game workflows. The workflow emphasizes speed from footage to animation rather than live capture control. Its strengths show up most when you need consistent motion for character animation and can iterate on results using retargeting and cleanup tools.

Pros

- AI video-to-motion capture that reduces manual keyframing time

- Retargeting tools designed for transferring motion onto character rigs

- Exports support animation and game pipelines without full rebuilds

Cons

- Quality depends heavily on input footage clarity and pose coverage

- Rig setup and cleanup steps can add friction for new projects

- Advanced refinement controls are less direct than full mocap suites

Best For

Teams needing fast AI motion capture to retarget character animations

Mixamo

Category: retargeting

Mixamo retargets a library of motion-captured animations onto uploaded, auto-rigged characters, with outputs usable in animation software.

Auto-rigging plus one-click motion retargeting for uploaded characters

Mixamo stands out for applying motion-captured animations to uploaded characters without requiring you to build a capture pipeline. It combines a library of pre-made motions with auto-rigging and a retargeting workflow that maps motion onto your characters using common joint mappings. You can upload a character in a supported format, select motions, and export FBX or related formats for common game and DCC tools. It is strongest for animation retargeting and workflow speed rather than live streaming or high-end optical capture fidelity.
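Retargeting of this kind depends on a joint-name mapping between the source and target skeletons. The sketch below assumes each frame stores per-bone local rotations as `(w, x, y, z)` quaternions keyed by bone name. The `mixamorig:` names follow Mixamo's usual skeleton naming, while the target names (`pelvis`, `spine_01`, and so on) are hypothetical. Production retargeting also compensates for rest-pose and proportion differences, which this sketch deliberately omits.

```python
from typing import Dict, List, Tuple

Quat = Tuple[float, float, float, float]  # (w, x, y, z) local rotation

# Mixamo-style source bone names -> hypothetical target rig bone names.
BONE_MAP: Dict[str, str] = {
    "mixamorig:Hips": "pelvis",
    "mixamorig:Spine": "spine_01",
    "mixamorig:LeftArm": "upperarm_l",
    "mixamorig:LeftForeArm": "lowerarm_l",
    "mixamorig:LeftUpLeg": "thigh_l",
}


def retarget_frame(
    source_pose: Dict[str, Quat], bone_map: Dict[str, str]
) -> Tuple[Dict[str, Quat], List[str]]:
    """Copy local rotations onto target bone names.

    Also returns the source bones that had no mapping, so gaps in the
    mapping are reported instead of being silently dropped.
    """
    target_pose: Dict[str, Quat] = {}
    unmapped: List[str] = []
    for bone, rotation in source_pose.items():
        if bone in bone_map:
            target_pose[bone_map[bone]] = rotation
        else:
            unmapped.append(bone)
    return target_pose, unmapped


if __name__ == "__main__":
    frame = {
        "mixamorig:Hips": (1.0, 0.0, 0.0, 0.0),
        "mixamorig:LeftArm": (0.924, 0.0, 0.383, 0.0),
        "mixamorig:LeftHand": (1.0, 0.0, 0.0, 0.0),
    }
    pose, missing = retarget_frame(frame, BONE_MAP)
    print(pose)     # rotations keyed by target bone names
    print(missing)  # ['mixamorig:LeftHand']
```

Reporting unmapped bones is a deliberate choice: it matches the point above that animation quality depends on rig alignment, since a missing mapping shows up as a frozen limb.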

Pros

- Fast retargeting from Mixamo motion to uploaded character skeletons

- Large motion library covers walking, running, combat, and gestures

- Exports standard FBX files for common DCC and game pipelines

Cons

- Limited support for custom capture setups and hardware integration

- Facial capture and finger-level fidelity are not its focus

- Animation quality depends on character rig alignment and proportion

Best For

Teams needing quick character animation retargeting for games and DCC work


Blender Add-on Motion Capture (Manuel Bastionon Scripts)

Category: open-source

GitHub-hosted Blender tooling supports common motion capture processing and importing workflows for retargeting and animation cleanup inside Blender.

Blender add-on motion capture scripts that import data and drive Blender rigs.

Blender Add-on Motion Capture from Manuel Bastionon Scripts focuses on bringing motion capture workflows directly into Blender via add-ons rather than standalone capture suites. It provides tools to import and process motion data into Blender scenes so you can retarget and refine animation. The scripts are built for Blender-centric pipelines, with functionality tuned for working in the same environment from capture data to rig animation. Expect a technical, integration-heavy workflow that trades broad device support for tight Blender compatibility.
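Motion data brought into Blender often arrives as BVH files (Blender also ships its own BVH importer). To show what an import step has to read, here is a minimal sketch that extracts joint names, the total channel count, and frame timing from BVH text. It is a generic illustration, not code from the add-on, and it ignores joint offsets and channel ordering.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BvhSummary:
    joints: List[str] = field(default_factory=list)  # ROOT and JOINT names, in file order
    channel_count: int = 0                           # total CHANNELS across all joints
    frame_count: int = 0                             # value of the "Frames:" header
    frame_time: float = 0.0                          # seconds per frame ("Frame Time:")
    frames: List[List[float]] = field(default_factory=list)


def parse_bvh(text: str) -> BvhSummary:
    """Read the hierarchy summary and motion rows from BVH text."""
    summary = BvhSummary()
    in_motion = False
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if in_motion:
            if line.startswith("Frames:"):
                summary.frame_count = int(line.split(":", 1)[1])
            elif line.startswith("Frame Time:"):
                summary.frame_time = float(line.split(":", 1)[1])
            else:
                values = [float(v) for v in line.split()]
                # Each motion row holds one value per declared channel.
                if len(values) != summary.channel_count:
                    raise ValueError("motion row width does not match CHANNELS total")
                summary.frames.append(values)
            continue
        tokens = line.split()
        if tokens[0] in ("ROOT", "JOINT"):
            summary.joints.append(tokens[1])
        elif tokens[0] == "CHANNELS":
            summary.channel_count += int(tokens[1])
        elif tokens[0] == "MOTION":
            in_motion = True
    if len(summary.frames) != summary.frame_count:
        raise ValueError("'Frames:' header does not match the number of motion rows")
    return summary


SAMPLE_BVH = """
HIERARCHY
ROOT Hips
{
  OFFSET 0.0 0.0 0.0
  CHANNELS 6 Xposition Yposition Zposition Zrotation Xrotation Yrotation
  JOINT Spine
  {
    OFFSET 0.0 10.0 0.0
    CHANNELS 3 Zrotation Xrotation Yrotation
    End Site
    {
      OFFSET 0.0 10.0 0.0
    }
  }
}
MOTION
Frames: 2
Frame Time: 0.033333
0 0 0 0 0 0 0 0 0
0 1 0 0 0 0 5 0 0
"""

if __name__ == "__main__":
    s = parse_bvh(SAMPLE_BVH)
    print(s.joints, s.channel_count, s.frame_count)
    print(f"duration: {s.frame_count * s.frame_time:.3f} s")
```

The width check is there because a row that does not match the declared channels is a common sign of a truncated or mismatched export, and it is cheaper to catch before retargeting.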

Pros

- Blender-native motion capture workflow reduces scene and animation handoffs

- Add-on scripts support importing motion data into Blender for direct rig work

- Retargeting and cleanup tools align with character animation iteration

Cons

- Best results depend on knowing Blender rigging and animation conventions

- Limited out-of-the-box guidance compared with full capture platforms

- Device and file format coverage is narrower than dedicated mocap software

Best For

Blender-first studios needing mocap import and rig retargeting without extra tools

ROS motion capture packages (mocap toolchain)

Category: open-source

ROS-compatible mocap packages support streaming, parsing, and transforming motion capture data for robotics and animation pipelines.

ROS TF frame publication for real-time skeleton pose integration into existing systems

ROS motion capture packages stand out by integrating motion capture data, calibration, and streaming into ROS message pipelines so robotics stacks can consume poses directly. Core capabilities typically include marker and skeleton processing, TF frame publication, and time-synchronized data flows for downstream tracking, control, and logging. Many toolchains also support hardware interoperability by bridging common mocap sensors into ROS topics, bags, and real-time visualization workflows. The main limitation is high setup and integration effort, since you assemble components and tune the configuration to your exact sensor and calibration needs.
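TF publication comes down to chaining rigid transforms between named frames. The sketch below shows that arithmetic in plain Python without ROS dependencies. It composes a hypothetical `world -> mocap_origin` calibration with a `mocap_origin -> rigid_body` pose reported by the mocap system, which gives the body's pose in `world`. The frame names and values are illustrative; in a ROS system the tf2 libraries perform this composition for you.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (w, x, y, z), assumed unit length
Transform = Tuple[Vec3, Quat]             # child's translation and rotation in the parent frame


def quat_mul(a: Quat, b: Quat) -> Quat:
    """Hamilton product a * b."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def rotate(q: Quat, v: Vec3) -> Vec3:
    """Rotate vector v by unit quaternion q (computes q * v * conj(q))."""
    w, x, y, z = q
    p = quat_mul(quat_mul(q, (0.0, v[0], v[1], v[2])), (w, -x, -y, -z))
    return (p[1], p[2], p[3])


def compose(a_to_b: Transform, b_to_c: Transform) -> Transform:
    """Chain A->B and B->C into A->C, as a TF tree lookup does."""
    (t1, q1), (t2, q2) = a_to_b, b_to_c
    rt = rotate(q1, t2)
    return ((t1[0] + rt[0], t1[1] + rt[1], t1[2] + rt[2]), quat_mul(q1, q2))


if __name__ == "__main__":
    half = math.sqrt(0.5)  # cos(45 deg) == sin(45 deg)
    world_to_mocap: Transform = ((1.0, 0.0, 0.0), (half, 0.0, 0.0, half))  # 90 deg about Z
    mocap_to_body: Transform = ((1.0, 0.0, 0.5), (1.0, 0.0, 0.0, 0.0))    # identity rotation
    t, q = compose(world_to_mocap, mocap_to_body)
    print([round(c, 6) for c in t])  # approximately [1.0, 1.0, 0.5]
```

Keeping the calibration (`world -> mocap_origin`) as its own transform means a re-calibration only changes one frame, and every downstream consumer picks up the change through the tree.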

Pros

- ROS-native pose streaming integrates directly with robotics perception pipelines

- Time-synchronized TF frames make multi-system fusion easier than file-based exports

- Open component ecosystem supports custom mocap hardware bridging

Cons

- Component assembly and calibration tuning require strong ROS and robotics skills

- User interfaces for capture review and cleanup are limited versus dedicated vendors

- Real-time stability depends on driver quality and message timing configuration

Best For

Robotics teams integrating mocap into ROS for real-time tracking and recording

Conclusion

After evaluating 10 motion capture tools, OptiTrack stands out as our overall top pick: it scored highest across our combined criteria of features, ease of use, and value, which is why it sits at #1 in the rankings above.

Use the comparison table and detailed reviews above to validate the fit against your own requirements before committing to a tool.

How to Choose the Right Motion Capture Software

This buyer’s guide helps you match your capture goals to Motion Capture Software workflows across OptiTrack, Vicon, Xsens MVN, Noitom Perception Neuron, Rokoko Studio, Rokoko Vision, DeepMotion, Mixamo, Blender Add-on Motion Capture from Manuel Bastionon Scripts, and ROS motion capture packages. You will learn which tool strengths map to precision capture, fast animation iteration, wearable inertial workflows, AI video-to-motion conversion, Blender-centric rigging, and ROS real-time integration. You will also get a checklist of key features and the common setup mistakes that derail results across marker, inertial, webcam, AI, and pipeline toolchains.

What Is Motion Capture Software?

Motion Capture Software converts human or object movement into time-synchronized motion data for animation, biomechanics, robotics, or analysis. It typically handles capture streaming or recording, calibration and subject tracking, and export into downstream formats for rigs, tools, or robotics message pipelines. Tools like OptiTrack and Vicon focus on marker-based optical capture workflows that produce precise 3D trajectories and consistent calibration results. Tools like Xsens MVN and Noitom Perception Neuron focus on inertial suit workflows that deliver full-body joint kinematics without camera stages.

Key Features to Look For

These features determine whether your motion data will be accurate, usable in your pipeline, and repeatable across sessions.

Real-time capture streaming with calibration support

If you need live monitoring, OptiTrack provides real-time mocap streaming and calibration across OptiTrack camera systems. Vicon also emphasizes calibration and capture workflow tooling that supports consistent optical accuracy during real-time capture.

Marker-based optical accuracy with reliable subject tracking

For studios and biomechanics labs that depend on dependable marker labeling, Vicon is built around high-precision optical tracking and mature calibration workflows. OptiTrack complements this with sub-millimeter stability and robust calibration for repeatable capture sessions.

Wearable full-body inertial suit outputs with standardized skeleton data

If you want full-body joint kinematics without multi-camera setup, Xsens MVN delivers an inertial MVN suit workflow that streams real-time body joint data. Noitom Perception Neuron delivers a similar inertial approach that streams 3D full-body motion for live character testing without optical stage cameras.

Live capture preview that accelerates retargeting

If your priority is speed from capture to playable animation, Rokoko Studio provides live capture preview with one-pass retargeting and export for animation pipelines. This reduces reshoots by letting you validate motion while you are capturing.

Webcam-based body pose estimation for rapid iteration

For teams that need motion data immediately for blocking and iteration, Rokoko Vision uses real-time webcam motion capture to stream performance data into common animation workflows. It is designed to avoid the setup steps of traditional stage systems but depends on clear visibility and stable framing.

AI video-to-motion conversion and rig retargeting

If your workflow starts with footage rather than a controlled capture stage, DeepMotion focuses on AI video-to-motion capture and exports that support character motion retargeting. Mixamo complements fast rig animation by offering auto-rigging and one-click motion retargeting from a motion library onto uploaded character rigs.

How to Choose the Right Motion Capture Software

Match your capture environment, precision needs, and downstream pipeline requirements to the tool whose workflow is already optimized for those constraints.

Choose the capture modality that fits your physical setup

Pick marker-based optical systems like OptiTrack or Vicon when you can plan a capture space and manage multi-camera calibration for repeatability. Pick inertial suit workflows like Xsens MVN or Noitom Perception Neuron when you need full-body capture indoors or outdoors without camera stages and can keep drift under control through disciplined calibration.

Decide whether you need real-time streaming or offline conversion

If you need to see motion as it happens, OptiTrack and Vicon emphasize real-time capture workflows tied to their calibration and subject tracking tooling. If you would rather turn existing footage or library motions into usable animation without live capture, DeepMotion focuses on AI video-to-motion capture and retargeting, while Mixamo focuses on retargeting motions onto uploaded rigs for quick animation output.

Plan your retargeting and export path around your target software

If your pipeline is animation-first with common character rigs, Rokoko Studio's cleanup and retargeting workflow is built to produce usable character motion quickly. If you are Blender-first, Blender Add-on Motion Capture from Manuel Bastionon Scripts is designed to import motion capture data and drive Blender rigs directly.

Validate how the tool handles occlusion and fast movement in your scenes

Marker-based systems like Vicon can struggle with occlusion in complex scenes because marker labeling depends on visibility during capture. Webcam-based workflows like Rokoko Vision degrade when hands or fast movement leave view, so you should plan camera placement and lighting around your performer’s motion path.
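When a marker drops out during occlusion, cleanup typically fills short gaps from the surrounding samples. Here is a minimal sketch of the simplest approach, linear interpolation across missing frames. It assumes one marker coordinate sampled per frame, with `None` marking occluded frames, and a hypothetical `max_gap` limit beyond which the gap is left for manual cleanup. Dedicated mocap suites offer richer fill methods, such as spline-based fills, which this does not replicate.

```python
from typing import List, Optional


def fill_gaps(samples: List[Optional[float]], max_gap: int = 10) -> List[Optional[float]]:
    """Linearly interpolate runs of None that are at most max_gap frames long.

    Gaps touching the start or end of the take, or longer than max_gap,
    are left as None because there is no safe pair of anchors to blend.
    """
    filled = list(samples)
    n = len(filled)
    i = 0
    while i < n:
        if filled[i] is not None:
            i += 1
            continue
        start = i  # first missing frame
        while i < n and filled[i] is None:
            i += 1
        end = i  # first valid frame after the gap, or n
        gap = end - start
        if start == 0 or end == n or gap > max_gap:
            continue
        before, after = filled[start - 1], filled[end]
        for k in range(gap):
            t = (k + 1) / (gap + 1)  # fraction of the way from before to after
            filled[start + k] = before + t * (after - before)
    return filled


if __name__ == "__main__":
    x_track = [0.0, None, None, 3.0, 3.5, None, 4.5]
    print(fill_gaps(x_track))
```

Capping the gap length reflects the trade-off described above: short dropouts interpolate well, while long occlusions usually hide real motion that a straight line would misrepresent.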

For robotics or research pipelines, prioritize time-synchronized pose integration

If your end goal is real-time skeleton pose integration into robotics software, ROS motion capture packages publish time-synchronized TF frames and integrate mocap outputs into ROS message pipelines. This approach supports multi-system fusion in robotics because it provides TF frames for downstream tracking, control, and logging.
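Time-synchronized fusion means pairing samples from different streams by timestamp. A minimal sketch, assuming two sorted lists of timestamps in seconds (for example, hypothetical mocap poses at 120 Hz and a robot sensor at 30 Hz) and a tolerance beyond which no match is reported. In ROS systems, the `message_filters` package provides an approximate-time synchronizer for this job.

```python
import bisect
from typing import List, Optional


def nearest_match(reference: List[float], query: float, tolerance: float) -> Optional[int]:
    """Index of the reference timestamp closest to query, or None if beyond tolerance.

    reference must be sorted in ascending order.
    """
    i = bisect.bisect_left(reference, query)
    # The closest timestamp is either just before or just after the insertion point.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(reference)]
    if not candidates:
        return None
    best = min(candidates, key=lambda j: abs(reference[j] - query))
    return best if abs(reference[best] - query) <= tolerance else None


if __name__ == "__main__":
    mocap_times = [k / 120 for k in range(240)]  # 2 s of 120 Hz mocap
    sensor_times = [k / 30 for k in range(60)]   # 2 s of 30 Hz sensor data
    pairs = [(s, nearest_match(mocap_times, s, 0.5 / 120)) for s in sensor_times]
    print(pairs[:3])
```

Setting the tolerance to half a mocap frame period means each sensor sample either pairs with its nearest pose or is reported as unmatched, rather than silently borrowing a stale one.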

Who Needs Motion Capture Software?

Motion Capture Software targets teams that need accurate motion data generation and retargeting for animation, analysis, or real-time tracking systems.

Research labs and studios chasing precision marker mocap exports with dependable synchronization

OptiTrack fits this need because it delivers real-time mocap streaming and calibration across OptiTrack camera systems with sub-millimeter stability. Vicon also fits because its calibration and capture workflow tooling supports consistent optical accuracy and biomechanics-ready outputs.

Studios and biomechanics labs that require dependable optical tracking workflows and capture-to-analytics pipelines

Vicon is a strong match because it emphasizes robust subject tracking, comprehensive data pipeline interoperability, and outputs designed for biomechanics analysis and animation. OptiTrack is also a fit when you want a dedicated capture ecosystem that focuses on repeatable capture sessions through robust calibration.

Studios and labs that need repeatable full-body motion capture without multi-camera setups

Xsens MVN matches this requirement with a suit-based inertial workflow that produces real-time full-body joint kinematics for animation and analysis. Noitom Perception Neuron also fits because it streams 3D full-body motion for live character testing without optical stage cameras.

Indie animators and small teams that want full-body motion capture without building an optical stage

Noitom Perception Neuron fits because it provides inertial full-body capture with live capture and low-latency monitoring for rehearsal and rapid iteration. Xsens MVN fits when you need full-body joint outputs with real-time streaming and standardized skeleton exports.

Studios focused on fast character retargeting and production handoffs

Rokoko Studio fits because it combines live capture preview with motion cleanup and retargeting, and it supports organizing takes and exporting into animation pipelines. Mixamo fits when your main goal is fast retargeting using auto-rigging and one-click motion retargeting onto uploaded character skeletons.

Small teams that need quick body motion capture for blocking and animation iteration

Rokoko Vision fits because it uses real-time webcam motion capture to stream performance data immediately for animation workflows. DeepMotion fits when you want fast AI video-to-motion conversion so you can iterate on character motion via retargeting and cleanup rather than live stage capture.

Teams producing character animation from existing video rather than running a live capture session

DeepMotion fits because it converts real video into usable motion data through AI-driven capture and rig retargeting exports. Mixamo fits when pre-made library motions are enough and you want downloadable animations quickly on uploaded character rigs.

Blender-first studios that want motion capture import and rig retargeting inside Blender

Blender Add-on Motion Capture from Manuel Bastionon Scripts fits because it provides Blender add-on tooling to import and process motion data into Blender scenes for direct rig work. This approach reduces handoff friction by keeping rig retargeting and refinement inside Blender.

Robotics teams integrating motion capture into real-time tracking, control, and logging systems

ROS motion capture packages fit because they integrate mocap streaming and parsing into ROS message pipelines with TF frame publication. This design supports multi-system fusion because it publishes time-synchronized skeleton pose frames that robotics stacks can consume directly.

Common Mistakes to Avoid

Several patterns repeatedly block results across capture types, calibration workflows, and pipeline integration methods.

Underplanning calibration and space constraints for optical marker capture

OptiTrack and Vicon need careful calibration and room planning, and their hardware and software setup often calls for specialized operators. If you cannot manage multi-camera synchronization or visibility, you will spend more time troubleshooting than capturing motion.

Expecting inertial suits to stay stable on long takes without setup discipline

Xsens MVN and Noitom Perception Neuron both can drift without proper calibration and setup discipline. Long takes increase the risk that drift accumulates unless you run calibration workflows consistently and keep performance setup repeatable.
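Drift grows with take length because orientation is integrated from gyroscope rates, so even a small uncorrected bias accumulates over time. The worked sketch below assumes a constant bias of 0.01 degrees per second (an illustrative figure, not a specification of either product) and integrates it at 60 Hz. Real inertial suits fuse accelerometer and magnetometer data to correct much of this error, which the sketch leaves out.

```python
def integrate_heading(bias_deg_per_s: float, rate_hz: float, duration_s: float) -> float:
    """Integrate a stationary gyro whose only output is a constant bias.

    Returns the accumulated heading error in degrees after duration_s seconds.
    """
    dt = 1.0 / rate_hz
    steps = int(round(duration_s * rate_hz))
    heading = 0.0
    for _ in range(steps):
        heading += bias_deg_per_s * dt  # simple Euler integration step
    return heading


if __name__ == "__main__":
    for minutes in (1, 5, 10):
        err = integrate_heading(0.01, 60.0, minutes * 60.0)
        print(f"{minutes:>2} min take: ~{err:.1f} deg heading drift")
```

Under these assumptions the error grows linearly, from about 0.6 degrees after one minute to about 6 degrees after ten, which is why recalibrating between long takes pays off.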

Using webcam pose estimation in scenes that block visibility

Rokoko Vision depends on clear visibility and consistent camera framing because tracking degrades with occlusion or when hands leave the frame. If your choreography regularly hides limbs, expect gaps and reduced stability.
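Camera-based pose estimators generally attach a confidence score to each detected keypoint, and low-confidence stretches are where gaps appear. The following minimal sketch assumes hypothetical per-frame `(x, y, confidence)` keypoints for one joint and a 0.5 threshold. It flags the frame ranges that should be treated as missing before retargeting. This is a generic illustration, not Rokoko Vision's own processing.

```python
from typing import List, Optional, Tuple

Keypoint = Tuple[float, float, float]  # (x, y, confidence) in image space


def low_confidence_ranges(track: List[Keypoint], threshold: float = 0.5) -> List[Tuple[int, int]]:
    """Return inclusive (start, end) frame ranges where confidence < threshold."""
    ranges: List[Tuple[int, int]] = []
    start: Optional[int] = None
    for frame, (_, _, conf) in enumerate(track):
        if conf < threshold and start is None:
            start = frame  # a low-confidence run begins
        elif conf >= threshold and start is not None:
            ranges.append((start, frame - 1))  # the run ended on the previous frame
            start = None
    if start is not None:
        ranges.append((start, len(track) - 1))  # run continues to the last frame
    return ranges


if __name__ == "__main__":
    wrist = [(0.4, 0.5, 0.9), (0.41, 0.5, 0.8), (0.0, 0.0, 0.3),
             (0.0, 0.0, 0.2), (0.45, 0.52, 0.7), (0.0, 0.0, 0.4)]
    print(low_confidence_ranges(wrist))  # [(2, 3), (5, 5)]
```

Flagged ranges can then be handed to a gap-fill step or reshot, instead of letting low-confidence positions drive the rig directly.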

Assuming AI video-to-motion outputs will match precision capture without input quality

DeepMotion quality depends heavily on input footage clarity and pose coverage because AI reconstructions require usable visuals. If your footage has motion blur or missing body coverage, retargeting refinement will require more manual cleanup.

Building a Blender retargeting workflow without matching rig conventions

Blender Add-on Motion Capture from Manuel Bastionon Scripts can require knowing Blender rigging and animation conventions for best results. If your rigs do not align with the add-on’s retargeting expectations, cleanup and refinement time increases.

How We Selected and Ranked These Tools

We evaluated each tool by overall capability, feature depth, ease of use, and value for its intended workflow. We separated OptiTrack from lower-ranked options by emphasizing dependable calibration and real-time mocap streaming across OptiTrack camera systems that support sub-millimeter stability and repeatable capture sessions. We also used ease-of-use differences created by workflow complexity, such as marker-based setup demands in OptiTrack and Vicon versus wearable inertial workflows in Xsens MVN and Noitom Perception Neuron. We treated pipeline fit as a feature-level factor by comparing retargeting speed and export readiness in Rokoko Studio and Rokoko Vision with rig retargeting and AI conversion strengths in DeepMotion and Mixamo.

Frequently Asked Questions About Motion Capture Software

Which motion capture software is best for marker-based optical accuracy in a lab setup?

OptiTrack is built for high-precision marker mocap using dedicated camera tracking and synchronization hardware. Vicon also targets optical accuracy with mature calibration workflows and production-grade capture-to-analytics pipelines.

How do inertial suit systems compare to optical marker systems for full-body capture when cameras are obstructed?

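Xsens MVN uses an inertial suit to output real-time body joint kinematics without a multi-camera stage, so blocked camera sightlines do not interrupt tracking the way they do for optical markers. Noitom Perception Neuron serves a similar purpose, using multiple IMUs to stream or record 3D human motion for retargeting. The trade-off is that inertial systems need consistent calibration to keep drift in check.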

What should I choose for live, low-latency capture and immediate character retargeting during rehearsals?

OptiTrack supports real-time mocap streaming alongside calibration across OptiTrack camera systems. Perception Neuron focuses on live inertial capture with low-latency monitoring that supports rapid rig testing.

Which tools are designed for fast production workflows that prioritize usable animation output over deep capture tuning?

Rokoko Studio emphasizes fast cleanup, retargeting, and export of data from Rokoko hardware and IP streaming sources. DeepMotion focuses on AI video-to-motion conversion and retargeting, so you can iterate on character animation results quickly.

If I want webcam-based motion capture without a full sensor stage, which software fits that workflow?

Rokoko Vision performs real-time webcam motion capture and streams results into common animation pipelines for quick blocking and iteration. Its tracking quality depends on clear visibility and consistent camera framing to avoid tracking gaps.

What is the best option for converting mocap or motion into a Blender rig without building a separate capture pipeline?

The Blender Add-on Motion Capture by Manuel Bastionon Scripts brings mocap import and rig retargeting directly into Blender. This approach trades broad device support for a Blender-first workflow in which imported motion data drives your rigs.

Which solution fits a robotics team that needs time-synchronized mocap poses inside an existing ROS system?

ROS motion capture packages are built to publish mocap-derived skeleton poses through ROS messaging, often using TF frame publication. This enables time-synchronized pose consumption by tracking, control, and logging components, but it requires integration effort to match your sensor and calibration needs.

What should I use when I already have a character rig and I mainly need motion retargeting and export, not a capture pipeline?

Mixamo works with characters you upload and retargets motion onto them, exporting animations for common game and DCC tools. It prioritizes workflow speed, with auto-rigging and one-click motion retargeting, over capture fidelity.

How do capture-to-analytics pipelines differ between optical systems and inertial systems once you have recorded motion data?

Vicon emphasizes detailed optical marker tracking plus capture workflow tooling that maps outputs cleanly into downstream tools and custom analytics. Xsens MVN emphasizes suit-based joint trajectories with standardized skeleton outputs that you export for animation and analysis pipelines.

What common setup issues should I plan for when moving from capture to retargeting and export?

OptiTrack and Vicon both rely on repeatable calibration, because calibration consistency directly affects downstream retargeting stability. Rokoko Studio and Rokoko Vision can also produce tracking gaps when visibility or camera framing fails, which you typically correct during cleanup and take organization.

Tools reviewed

Referenced in the comparison table and product reviews above.
