
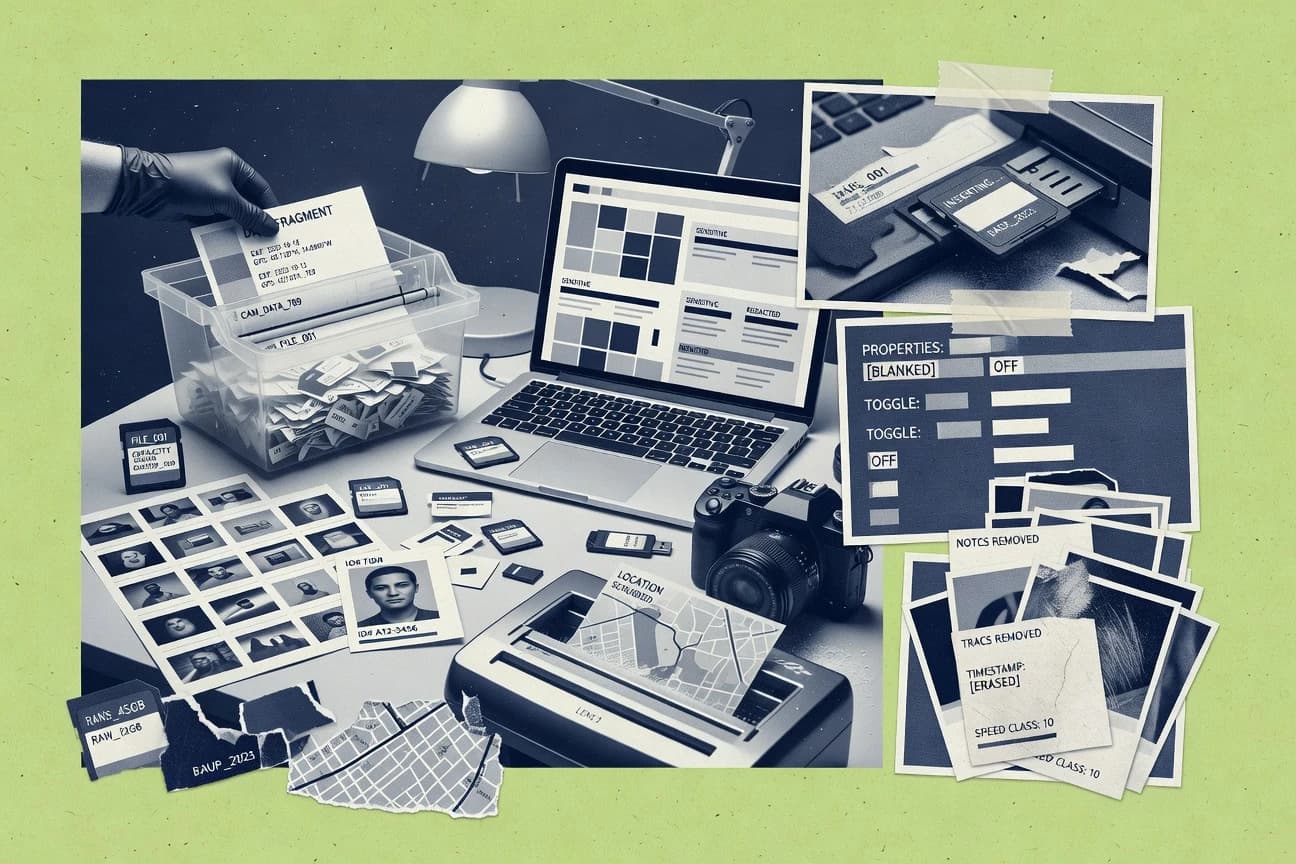

Top 10 Best Metadata Scrubbing Software of 2026

Discover top 10 metadata scrubbing software tools to clean digital assets.

How we ranked these tools

Core product claims cross-referenced against official documentation, changelogs, and independent technical reviews.

Analyzed video reviews and hundreds of written evaluations to capture real-world user experiences with each tool.

AI persona simulations modeled how different user types would experience each tool across common use cases and workflows.

Final rankings reviewed and approved by our editorial team with authority to override AI-generated scores based on domain expertise.

Score: Features 40% · Ease 30% · Value 30%

Gitnux may earn a commission through links on this page; this does not influence rankings.

Editor’s top 3 picks

Three quick recommendations before you dive into the full comparison below — each one leads on a different dimension.

OpenRefine

Cluster and Merge with interactive labeling to deduplicate and standardize metadata values

Built for metadata stewards cleaning and normalizing tabular records with interactive workflows.

Trifacta Data Wrangler

Autopilot transformation suggestions driven by column profiling in visual wrangling

Built for teams scrubbing messy column metadata into consistent schemas for analytics pipelines.

Databricks Data Quality

Unity Catalog-aware data quality monitoring tied to table and schema changes

Built for teams using Databricks and Unity Catalog to detect and remediate metadata quality issues.


Comparison Table

This comparison table evaluates metadata scrubbing tools used to detect, normalize, and remediate inconsistent fields across datasets and digital assets. It covers options such as OpenRefine, Trifacta Data Wrangler, Databricks Data Quality, AWS Glue Data Quality, and Apache NiFi, along with additional tools, so readers can compare core capabilities, integration paths, and operational fit.

| # | Tool | Description | Category | Overall | Features | Ease of Use | Value |
|---|---|---|---|---|---|---|---|
| 1 | OpenRefine | Use data cleaning, transformations, and metadata normalization features to scrub messy datasets before downstream analytics. | open-source data cleaning | 8.4/10 | 8.7/10 | 7.8/10 | 8.6/10 |
| 2 | Trifacta Data Wrangler | Apply guided transformations and schema inference to clean and standardize metadata-like fields in preparation for analytics pipelines. | data wrangling | 8.3/10 | 8.6/10 | 7.8/10 | 8.3/10 |
| 3 | Databricks Data Quality | Run automated checks and enforce data quality rules to validate and remediate invalid or inconsistent metadata fields in Spark-backed datasets. | enterprise data quality | 8.3/10 | 8.6/10 | 7.8/10 | 8.3/10 |
| 4 | AWS Glue Data Quality | Define and run data quality rules and then inspect failing records to correct inconsistent fields that carry metadata for analytics. | managed data quality | 7.3/10 | 7.6/10 | 7.0/10 | 7.3/10 |
| 5 | Apache NiFi | Build scrubbing workflows with transform processors to standardize metadata and remove bad attributes across data flows. | workflow-based ETL | 7.8/10 | 8.2/10 | 7.1/10 | 7.8/10 |
| 6 | dbt (Data Build Tool) | Codify transformations and tests to normalize metadata-bearing columns and enforce clean schemas for analytics models. | analytics transformations | 7.4/10 | 7.6/10 | 7.0/10 | 7.4/10 |
| 7 | Alation Data Catalog | Curate and improve dataset metadata quality with governance workflows that highlight inconsistencies and gaps. | data catalog governance | 7.6/10 | 8.0/10 | 7.0/10 | 7.6/10 |
| 8 | Collibra Data Catalog | Manage governance and stewardship workflows that clean, standardize, and approve metadata for trusted analytics usage. | enterprise governance | 8.2/10 | 8.6/10 | 7.9/10 | 7.9/10 |
| 9 | Talend Data Quality | Profile, match, and cleanse data to fix inaccurate values in metadata attributes that feed analytics and reporting. | ETL data quality | 7.6/10 | 8.1/10 | 7.4/10 | 7.1/10 |
| 10 | Soda Core | Define data tests in YAML to detect and prevent schema and content issues in datasets that act as analytics metadata sources. | test-driven data QA | 7.2/10 | 7.6/10 | 6.8/10 | 7.0/10 |


OpenRefine

open-source data cleaning

Use data cleaning, transformations, and metadata normalization features to scrub messy datasets before downstream analytics.

Cluster and Merge with interactive labeling to deduplicate and standardize metadata values

OpenRefine stands out for its interactive, faceted data exploration plus transformation workflow for messy tabular metadata. It supports metadata scrubbing through cluster-and-merge record reconciliation, column parsing and normalization, and expression-based transformations. It can reshape and enrich datasets using built-in reconciliation services and extensible import and export pipelines. The tool is strongest for cleaning metadata inside a browser session rather than building a long-running ETL system.
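To make the cluster-and-merge idea concrete, here is a minimal Python sketch of key-collision clustering, loosely modeled on the "fingerprint" style of keying. It is an illustration under stated assumptions, not OpenRefine's own code: the function names `fingerprint` and `cluster` and the sample values are hypothetical, and the normalization steps are a simplified approximation.

```python
import string
import unicodedata
from collections import defaultdict


def fingerprint(value: str) -> str:
    """Build a normalized key: ASCII-fold, lowercase, drop punctuation, sort unique tokens."""
    folded = unicodedata.normalize("NFKD", value).encode("ascii", "ignore").decode("ascii")
    cleaned = folded.strip().lower().translate(str.maketrans("", "", string.punctuation))
    return " ".join(sorted(set(cleaned.split())))


def cluster(values):
    """Group raw values whose keys collide; keep only groups with more than one variant."""
    groups = defaultdict(list)
    for value in values:
        groups[fingerprint(value)].append(value)
    return {key: variants for key, variants in groups.items() if len(set(variants)) > 1}


# Hypothetical publisher names with casing, punctuation, word-order, and accent variants.
values = ["Acme Corp.", "acme corp", "Corp Acme", "Globex", "Caf\u00e9 Data", "Cafe data"]
clusters = cluster(values)

for key, variants in clusters.items():
    print(key, "<-", variants)
```

Each printed group is a candidate merge. In an interactive session a steward would still review every group and choose the surviving label, since key collisions can occasionally group values that should stay distinct.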

Pros

- Faceted filtering makes duplicates, typos, and outliers easy to locate and isolate

- Cluster-and-merge rapidly unifies inconsistent strings into clean, reusable identifiers

- Expression-based column transforms enable repeatable scrubbing steps without custom code

- Reconciliation can map values to external authority concepts for normalization

- Flexible import and export supports common metadata formats and downstream workflows

Cons

- Large datasets can feel slow and resource intensive in an interactive UI

- Advanced cleaning often requires learning OpenRefine’s transformation expressions

- Cross-dataset governance and audit trails require manual process discipline

Best For

Metadata stewards cleaning and normalizing tabular records with interactive workflows


Trifacta Data Wrangler

data wrangling

Apply guided transformations and schema inference to clean and standardize metadata-like fields in preparation for analytics pipelines.

Autopilot transformation suggestions driven by column profiling in visual wrangling

Trifacta Data Wrangler stands out for its visual, guided data preparation workflow that focuses on quickly transforming messy fields into analysis-ready columns. For metadata scrubbing, it provides schema and column profiling signals, then generates transformation steps to standardize names, formats, and data types across datasets. Its recipe-based approach supports repeatable cleaning logic, which helps enforce consistent metadata conventions when files arrive with varying column layouts. The tool’s strengths center on column-level transformations rather than deep catalog governance or cross-system metadata lineage.
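The column-profiling step described above can be sketched in plain Python. This is a generic illustration of profiling-driven suggestions, not Trifacta's engine or API: `infer_type`, `profile_column`, the type labels, and the single accepted date format are all assumptions made for the example.

```python
from collections import Counter
from datetime import datetime


def infer_type(value: str) -> str:
    """Classify one raw cell as empty, integer, float, date (YYYY-MM-DD), or string."""
    text = value.strip()
    if text == "":
        return "empty"
    try:
        int(text)
        return "integer"
    except ValueError:
        pass
    try:
        float(text)
        return "float"
    except ValueError:
        pass
    try:
        datetime.strptime(text, "%Y-%m-%d")
        return "date"
    except ValueError:
        return "string"


def profile_column(values):
    """Count inferred types and list the cells that disagree with the dominant type."""
    counts = Counter(infer_type(v) for v in values)
    non_empty = {t: n for t, n in counts.items() if t != "empty"}
    dominant = max(non_empty, key=non_empty.get) if non_empty else "empty"
    mismatches = [v for v in values if infer_type(v) not in (dominant, "empty")]
    return {"counts": dict(counts), "dominant": dominant, "mismatches": mismatches}


# Hypothetical "created" column where one row uses a different date layout.
profile = profile_column(["2024-01-05", "2024-02-10", "05/03/2024", ""])
print(profile["dominant"], profile["mismatches"])
```

The mismatching cells are the ones a guided tool would flag and propose a conversion step for; turning that suggestion into a reusable recipe step is what makes the cleanup repeatable.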

Pros

- Visual transformation suggestions accelerate metadata standardization workflows

- Recipe-based transformations support repeatable scrubbing across similar datasets

- Column profiling helps detect inconsistencies in types and formats

Cons

- Metadata governance and lineage across a catalog are limited

- Complex multi-dataset normalization needs more manual step design

- Scaling interactive scrubbing into enterprise metadata operations can require extra integration

Best For

Teams scrubbing messy column metadata into consistent schemas for analytics pipelines

Databricks Data Quality

enterprise data quality

Run automated checks and enforce data quality rules to validate and remediate invalid or inconsistent metadata fields in Spark-backed datasets.

Unity Catalog-aware data quality monitoring tied to table and schema changes

Databricks Data Quality stands out by embedding metadata and data quality checks directly into the Databricks and Unity Catalog governance workflow. It provides automated table profiling, constraint and expectation evaluation, and rule-based monitoring that targets schema drift and data integrity issues. It also integrates with Databricks jobs and notebooks so metadata scrubbing actions can be triggered after detection. Compared with standalone metadata scrubbing tools, it is strongest for organizations already standardizing on Databricks for ingestion and governance.
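Schema drift detection, in its simplest form, is a comparison between the schema a table is expected to have and the schema actually observed. The sketch below shows that comparison in plain Python. It does not use any Databricks or Unity Catalog API; `detect_schema_drift`, the column names, and the type strings are hypothetical.

```python
def detect_schema_drift(expected: dict, observed: dict) -> dict:
    """Compare two {column: type} mappings and report added, removed, and retyped columns."""
    added = sorted(set(observed) - set(expected))
    removed = sorted(set(expected) - set(observed))
    retyped = {
        column: (expected[column], observed[column])
        for column in sorted(set(expected) & set(observed))
        if expected[column] != observed[column]
    }
    return {"added": added, "removed": removed, "retyped": retyped}


# Hypothetical schemas: one column changed type, one disappeared, one is new.
expected = {"order_id": "bigint", "status": "string", "created_at": "timestamp"}
observed = {"order_id": "string", "status": "string", "ingest_batch": "string"}

drift = detect_schema_drift(expected, observed)
print(drift)
```

A monitoring job could run a check like this after each load and trigger a remediation notebook only when one of the three lists is non-empty.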

Pros

- Tight integration with Unity Catalog for governance-aware quality checks

- Rule-based monitoring for schema drift and integrity issues across tables

- Automations fit into Databricks jobs and notebook-driven workflows

- Profiling helps identify invalid values and inconsistent metadata patterns

Cons

- Metadata scrubbing workflows require Databricks pipeline familiarity

- Less suited for scrubbing outside Databricks-centered data platforms

- Complex rule sets can be harder to debug than simpler rule engines

Best For

Teams using Databricks and Unity Catalog to detect and remediate metadata quality issues

AWS Glue Data Quality

managed data quality

Define and run data quality rules and then inspect failing records to correct inconsistent fields that carry metadata for analytics.

Ruleset-based data quality evaluations that leverage the AWS Glue Data Catalog

AWS Glue Data Quality distinguishes itself by building quality checks directly into AWS Glue ETL workflows using the same cataloged metadata that drives schema discovery. It provides rulesets for profiling and validating table and column values, then emits structured results that can be reviewed in AWS. The service can run data quality evaluations as part of batch and streaming-oriented pipeline patterns while tracking outcomes at the dataset level.
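The ruleset-plus-structured-results pattern can be illustrated without any AWS dependency. The sketch below is a generic Python analogue, not the Glue Data Quality rule language or SDK: the rule factories `is_complete` and `in_set`, the `evaluate` function, and the sample rows are all hypothetical.

```python
import json


def is_complete(column):
    """Rule factory: the column must be present and non-empty in every row."""
    return (f"is_complete:{column}", lambda row: row.get(column) not in (None, ""))


def in_set(column, allowed):
    """Rule factory: the column value must be one of the allowed values."""
    return (f"in_set:{column}", lambda row: row.get(column) in allowed)


def evaluate(rows, rules):
    """Run every rule over every row and return one machine-readable result per rule."""
    results = []
    for name, check in rules:
        failing = [index for index, row in enumerate(rows) if not check(row)]
        results.append({"rule": name, "passed": not failing, "failing_rows": failing})
    return results


# Hypothetical rows with one miscased value and one missing value.
rows = [
    {"id": 1, "status": "active"},
    {"id": 2, "status": "ACTIVE"},
    {"id": 3, "status": ""},
]
rules = [is_complete("status"), in_set("status", {"active", "inactive"})]

report = evaluate(rows, rules)
gate_ok = all(result["passed"] for result in report)
print(json.dumps(report, indent=2))
print("gate_ok:", gate_ok)
```

Because the report is plain JSON, a downstream step can either publish it for review or use `gate_ok` to stop the pipeline before bad fields propagate.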

Pros

- Integrates data quality checks into Glue ETL using the Data Catalog schema

- Supports rulesets for profiling and validation with reusable, versionable logic

- Produces machine-readable results for downstream reporting and gating

Cons

- Limited to the data types and rule semantics supported by Glue Data Quality

- Operational tuning of rulesets and thresholds can be iterative

- More effective when pipelines are already centered on AWS Glue and the catalog

Best For

Teams standardizing metadata-driven quality checks inside AWS Glue pipelines

Apache NiFi

workflow-based ETL

Build scrubbing workflows with transform processors to standardize metadata and remove bad attributes across data flows.

Provenance and record-based processors like UpdateRecord for controlled metadata rewriting

Apache NiFi stands out with a visual, event-driven dataflow engine that can scrub metadata by orchestrating processors for parsing, transforming, and filtering. It supports schema-aware handling through processors like UpdateRecord and RecordPath operations, letting teams rename fields, remove attributes, and rewrite metadata in-flight. Data lineage is improved by the UI and provenance tracking, which helps audit what metadata changed and when. Scrubbing runs continuously by scheduling flows and handling retries with backpressure controls for resilient processing.
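NiFi flows are configured through processors rather than written as Python, so the following is only a conceptual sketch of what a record-level rename-and-remove step with an audit trail does. The function `scrub_record`, its parameters, and the event format are hypothetical and are not part of NiFi.

```python
def scrub_record(record, rename=None, remove=None):
    """Return a cleaned copy of the record plus a list of events describing each change."""
    rename = rename or {}
    remove = set(remove or ())
    cleaned, events = {}, []
    for field, value in record.items():
        if field in remove:
            # Dropped fields are logged so the removal can be audited later.
            events.append({"action": "remove", "field": field})
            continue
        target = rename.get(field, field)
        if target != field:
            events.append({"action": "rename", "field": field, "to": target})
        cleaned[target] = value
    return cleaned, events


# Hypothetical record with an inconsistent field name and a leftover debug attribute.
record = {"Cust Name": "Ada", "tmp_debug": "x", "email": "ada@example.com"}
cleaned, events = scrub_record(
    record,
    rename={"Cust Name": "customer_name"},
    remove=["tmp_debug"],
)
print(cleaned)
print(events)
```

Keeping the change log next to the cleaned record mirrors the role provenance plays in a flow: the output alone does not tell an auditor which fields were rewritten or dropped.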

Pros

- Visual drag-and-drop workflow makes metadata scrubbing pipelines easier to design

- Provenance tracking shows what metadata moved through each step

- Record-oriented processors support field-level transforms and removals

Cons

- Large scrubbing flows can become hard to manage and debug

- Some metadata changes require careful schema management across steps

- Operational tuning is needed to balance throughput and backpressure behavior

Best For

Teams automating metadata scrubbing with visual workflows and audit trails

dbt (Data Build Tool)

analytics transformations

Codify transformations and tests to normalize metadata-bearing columns and enforce clean schemas for analytics models.

dbt docs and lineage from compiled artifacts for metadata discovery and impact analysis

dbt stands out as a SQL-first data transformation tool that generates lineage from versioned dbt models. It scrubs metadata by enforcing consistent naming, tagging, and documentation across projects and environments. Catalogs can be published for discovery so downstream teams can understand and standardize sensitive fields and ownership. Metadata governance is achieved through dbt constructs like models, sources, tests, and docs rather than a dedicated standalone scrubbing UI.

Pros

- Lineage-aware documentation ties columns to models and upstream sources

- Tests enforce metadata quality through reusable schema and constraint checks

- Tags and metrics standardize dataset ownership and categorization across projects

Cons

- No dedicated metadata scrubbing dashboard for bulk redaction workflows

- SQL-centric setup slows teams without dbt modeling experience

- Metadata governance depends on consistent project conventions and reviews

Best For

Teams standardizing governed metadata through dbt models, tests, and lineage


Alation Data Catalog

data catalog governance

Curate and improve dataset metadata quality with governance workflows that highlight inconsistencies and gaps.

Data Stewardship workflows that enforce review of AI-assisted metadata enrichment

Alation Data Catalog stands out for metadata quality governance that ties scrubbing outcomes to searchable business metadata and stewardship workflows. It supports discovery of data assets across common enterprise systems and then applies rules for standardizing names, classifications, and descriptions so consumers see cleaner metadata. Its ingestion and enrichment pipeline is designed for ongoing monitoring so catalog entries stay consistent as sources change. Metadata scrubbing is delivered as part of a broader catalog and governance loop rather than a standalone cleaning utility.

Pros

- Governance workflows connect scrubbed metadata to owners and approval

- Automated enrichment improves descriptions, classifications, and field labeling

- Broad connector coverage helps normalize metadata across multiple data sources

- Search and lineage views make scrubbed results visible to users

Cons

- Configuration and rule design require strong catalog governance practices

- Scrubbing impact depends on data profiling quality from ingested sources

- Operational overhead rises as scope expands across many pipelines

Best For

Enterprises needing governed metadata scrubbing inside an enterprise data catalog

Collibra Data Catalog

enterprise governance

Manage governance and stewardship workflows that clean, standardize, and approve metadata for trusted analytics usage.

Data Quality and governance workflows that route scrubbing actions with full auditability

Collibra Data Catalog differentiates metadata scrubbing through its governance-first workflow and strong relationship mapping between assets, terms, and ownership. It supports automated enrichment via connectors, metadata import, and integration with data quality rules so bad or incomplete fields can be identified and routed for correction. Scrubbing is typically executed through governed processes rather than standalone batch cleaning, with audit trails and role-based controls tied to catalog objects. The result is best for organizations that need catalog accuracy backed by governance and lineage-aware context.

Pros

- Governed scrubbing workflows with audit history tied to catalog assets

- Rich asset relationships help target incorrect fields with business context

- Integration-friendly architecture supports metadata ingestion and enrichment pipelines

Cons

- Configuration and taxonomy alignment take sustained effort for consistent results

- Scrubbing tends to be workflow-driven, not lightweight standalone cleansing

- Rule design for edge cases can be complex across multiple metadata sources

Best For

Enterprises needing governance-driven metadata scrubbing for business-aligned catalogs

Talend Data Quality

ETL data quality

Profile, match, and cleanse data to fix inaccurate values in metadata attributes that feed analytics and reporting.

Rule-based survivorship and matching support for standardizing entity-related metadata

Talend Data Quality focuses on data profiling, matching, standardization, and rule-based remediation that directly improve data quality signals tied to metadata. It can generate and apply metadata-driven quality rules across structured sources by using Talend Studio connectors and transformation logic. The suite also supports column and record-level cleansing workflows, plus reference data management patterns used to correct inconsistent values. Metadata scrubbing is achieved through rule execution, profiling-based detection, and automated updates to offending fields.
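Survivorship is the step that decides, once duplicate records have been matched, which value of each field survives into the merged record. The Python sketch below illustrates one common rule, "newest non-empty value wins". It is not Talend code; the `survive` function, the `updated` field, and the sample records are assumptions for illustration.

```python
def survive(records, fields, recency_key="updated"):
    """Build one merged record: for each field, keep the newest non-empty value."""
    newest_first = sorted(records, key=lambda r: r[recency_key], reverse=True)
    merged = {}
    for field in fields:
        merged[field] = next(
            (r[field] for r in newest_first if r.get(field) not in (None, "")),
            None,  # No record had a usable value for this field.
        )
    return merged


# Hypothetical matched duplicates; ISO dates sort correctly as plain strings.
matched = [
    {"name": "ACME Corp", "phone": "", "updated": "2024-01-10"},
    {"name": "Acme Corporation", "phone": "555-0100", "updated": "2023-06-01"},
    {"name": "", "phone": "555-0199", "updated": "2024-03-02"},
]

golden = survive(matched, fields=["name", "phone"])
print(golden)
```

Other survivorship rules, such as preferring a trusted source system or the most frequent value, follow the same shape: an ordering over the matched records, then a per-field pick.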

Pros

- Rule-based profiling and cleansing workflows for detecting and fixing metadata issues

- Broad connector coverage for applying scrubbing logic across heterogeneous data sources

- Standardization and matching capabilities help normalize inconsistent field values

- Designed around ETL and data integration patterns for repeatable quality automation

Cons

- Metadata-only scrubbing requires building and maintaining rule logic

- Workflow complexity increases when multiple sources and remediation steps are combined

- Governance reporting often depends on how pipelines are implemented

Best For

Enterprises needing automated metadata-driven cleansing inside Talend pipelines

Soda Core

test-driven data QA

Define data tests in YAML to detect and prevent schema and content issues in datasets that act as analytics metadata sources.

Rule-driven metadata scrubbing tied to data lineage context in Soda Core

Soda Core focuses on metadata quality by running automated checks and scrubbing across data assets. It links data observability outcomes to schema and lineage context so issues map back to specific fields and upstream sources. The core workflow centers on identifying broken or inconsistent metadata signals, then applying repair actions such as standardization and cleanup. It is a fit for teams that want repeatable metadata hygiene rather than manual spreadsheet auditing.

Pros

- Automates metadata quality checks across schemas and lineage-aware context

- Supports scripted repair actions for common scrubbing and standardization workflows

- Integrates metadata remediation into an operational workflow for repeatability

Cons

- Scrubbing rules require careful setup to avoid noisy or incorrect fixes

- Meaningful results depend on accurate metadata and consistent upstream modeling

- Debugging failed scrubs can take time when issues span multiple assets

Best For

Teams needing automated metadata quality remediation with rule-driven scrubbing

Conclusion

After evaluating 10 data science analytics tools, OpenRefine stands out as our overall top pick: it scored highest across our combined criteria of features, ease of use, and value, which is why it sits at #1 in the rankings above.

Use the comparison table and detailed reviews above to validate the fit against your own requirements before committing to a tool.

How to Choose the Right Metadata Scrubbing Software

This buyer’s guide helps teams choose metadata scrubbing software by comparing OpenRefine, Trifacta Data Wrangler, Databricks Data Quality, AWS Glue Data Quality, Apache NiFi, dbt, Alation Data Catalog, Collibra Data Catalog, Talend Data Quality, and Soda Core. It maps tool capabilities to scrubbing goals like deduplication, guided standardization, governance-aware validation, auditability, and automated rule-driven repair. The guide focuses on practical selection criteria that align with how these tools actually clean metadata inside data workflows.

What Is Metadata Scrubbing Software?

Metadata scrubbing software detects and fixes inaccurate, inconsistent, duplicate, or missing metadata signals that appear in datasets and catalogs. The software targets problems like inconsistent field values, schema drift, invalid tags, and broken descriptions that impact analytics and governance. OpenRefine shows interactive metadata normalization for messy tabular records using cluster-and-merge and expression-based transformations. Databricks Data Quality shows governance-aware automated checks inside Databricks and Unity Catalog so metadata and data quality issues are detected and remediated in the governed workflow.

Key Features to Look For

Scrubbing projects succeed when the tool’s feature set matches the kind of metadata mess, the workflow environment, and the governance requirements.

Deduplication and normalization with cluster-and-merge

OpenRefine excels at cluster-and-merge with interactive labeling to unify inconsistent strings into clean, reusable identifiers. This feature directly targets duplicates, typos, and outliers in tabular metadata during a browser-based cleaning session.

Guided transformation suggestions driven by column profiling

Trifacta Data Wrangler provides Autopilot transformation suggestions driven by column profiling to standardize names, formats, and data types. This helps teams scrub metadata-like columns quickly without manually designing every conversion step.

Governance-aware data quality checks tied to Unity Catalog changes

Databricks Data Quality integrates with Unity Catalog so that quality monitoring tracks table and schema changes. This capability fits teams that want metadata validation and remediation triggered inside Databricks jobs and notebook workflows.

Ruleset-based validations using the AWS Glue Data Catalog

AWS Glue Data Quality builds quality checks inside AWS Glue ETL workflows using the AWS Glue Data Catalog schema. It supports reusable, versionable rulesets and emits structured results that can gate or report failures across batch and streaming patterns.

Event-driven, auditable scrubbing pipelines with provenance tracking

Apache NiFi supports transform-driven scrubbing using processors like UpdateRecord and RecordPath to rename, remove, and rewrite metadata in-flight. The provenance tracking and record-oriented processing make it easier to audit what metadata changed and when across multi-step flows.

Rule-driven scrubbing and repair actions connected to lineage context

Soda Core centers on automated metadata quality checks and scripted repair actions tied to schema and lineage context. This helps teams implement repeatable metadata hygiene instead of manual spreadsheet-driven cleanup.

How to Choose the Right Metadata Scrubbing Software

The fastest path to a fit is to match scrubbing tasks to the tool type that best fits the workflow environment and governance depth.

Match the scrubbing style to the metadata mess

For messy tabular values that need interactive deduplication, OpenRefine offers cluster-and-merge with interactive labeling so duplicates and typos can be resolved into standardized identifiers. For standardized schema and datatype fixes where column profiling can suggest transformations, Trifacta Data Wrangler can generate repeatable steps using recipe-based transformations and Autopilot guidance.

Anchor quality enforcement in the governance plane already in use

Teams operating in Databricks and Unity Catalog should prioritize Databricks Data Quality because it ties metadata and data quality monitoring to Unity Catalog table and schema changes. Teams centered on AWS Glue and the Glue Data Catalog should prioritize AWS Glue Data Quality because it evaluates rulesets against cataloged schema and produces structured failing-record results inside Glue ETL workflows.

Decide whether scrubbing must be governed in a catalog workflow

If scrubbed metadata must flow through stewardship, approvals, and searchable business context, Alation Data Catalog and Collibra Data Catalog fit because both emphasize governance workflows that connect scrubbing outcomes to owners and audit history. Collibra Data Catalog routes scrubbing actions with full auditability and asset relationship context, while Alation focuses on data stewardship workflows that enforce review of AI-assisted metadata enrichment.

Choose automation infrastructure based on pipeline orchestration needs

If scrubbing must run as continuous, scheduled pipelines with a visual flow and detailed provenance, Apache NiFi supports drag-and-drop orchestration with record-oriented processors like UpdateRecord and provenance tracking. If scrubbing and enforcement should live as versioned SQL models with tests and lineage artifacts, dbt supports governed metadata through models, tests, tags, and dbt docs lineage for impact analysis.

Select rule-driven cleansing tools for enterprise remediation at scale

If metadata problems require entity-aware survivorship and matching logic inside ETL, Talend Data Quality supports rule-based profiling, matching, standardization, and automated updates to offending fields. If repeatable repair actions must be tied to lineage-aware observability signals, Soda Core supports rule-driven metadata scrubbing with scripted repairs connected to schema and lineage context.

Who Needs Metadata Scrubbing Software?

Metadata scrubbing tools fit teams that must protect analytics accuracy and governance consistency from messy metadata values, schema drift, and catalog inconsistencies.

Metadata stewards cleaning tabular metadata interactively

OpenRefine fits metadata stewards because it provides faceted filtering to isolate duplicates, typos, and outliers and then uses cluster-and-merge with interactive labeling to standardize values. This approach is best for teams who want to run scrubbing inside a browser session with expression-based transformations.

Analytics teams standardizing messy column metadata into consistent schemas

Trifacta Data Wrangler fits teams that need schema-ready column cleaning because Autopilot transformation suggestions rely on column profiling. The recipe-based workflow supports repeatable scrubbing logic across datasets with varying column layouts.

Databricks and Unity Catalog teams enforcing governance-aware metadata quality

Databricks Data Quality fits teams already standardizing on Databricks ingestion and governance because it integrates with Unity Catalog for automated checks and monitoring tied to table and schema changes. It also fits notebook- and job-driven workflows that require triggering remediation after detection.

Catalog governance teams requiring stewardship workflows and auditability

Alation Data Catalog and Collibra Data Catalog fit enterprises that want scrubbing delivered inside a governance loop. Alation supports stewardship workflows with review of AI-assisted metadata enrichment, while Collibra routes scrubbing actions with audit history tied to catalog assets and business-aligned relationships.

Common Mistakes to Avoid

Common selection mistakes usually come from choosing a tool that does not match the workflow environment, governance expectations, or operational scaling needs.

Picking an interactive-only cleaning tool for large, always-on scrubbing

OpenRefine can feel slow and resource intensive in an interactive UI on large datasets, which makes it less suitable for large always-on scrubbing pipelines. Apache NiFi and Soda Core better support operational automation with scheduled flows and rule-driven repairs.

Underestimating the governance and debugging effort of complex rule sets

Databricks Data Quality and AWS Glue Data Quality can require pipeline familiarity and can be harder to debug when rule sets grow complex. dbt tests and models can reduce ambiguity because lineage-aware documentation and reusable tests focus enforcement inside versioned transformations.

Assuming metadata-only scrubbing works without maintaining rule logic

Talend Data Quality needs rule logic and profiling workflows to detect and remediate metadata issues, which increases build and maintenance effort for metadata-only scenarios. Soda Core also requires careful setup to avoid noisy or incorrect fixes when rules span multiple assets.

Relying on catalog scrubbing without matching taxonomy, stewardship practices, and profiling quality

Alation Data Catalog and Collibra Data Catalog depend on configuration and rule design that requires strong governance practices, including taxonomy alignment for consistent results. Both tools also require high-quality profiling from ingested sources because scrubbing outcomes depend on what is learned during ingestion and enrichment.

How We Selected and Ranked These Tools

We evaluated every tool on three sub-dimensions, with features weighted at 0.4, ease of use at 0.3, and value at 0.3. The overall rating is the weighted average, computed as overall = 0.40 × features + 0.30 × ease of use + 0.30 × value. OpenRefine separated itself with a concrete strength in features by offering cluster-and-merge with interactive labeling plus expression-based transformations for repeatable metadata standardization inside an interactive UI.
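The weighting can be checked against the comparison table with a few lines of Python. The sketch uses `Decimal` so that borderline values round predictably. Rounding half up to one decimal place is an assumption, since the rounding rule is not stated, and the `overall` function name is hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

# Weights as stated in the ranking methodology.
WEIGHTS = {
    "features": Decimal("0.40"),
    "ease": Decimal("0.30"),
    "value": Decimal("0.30"),
}


def overall(features: str, ease: str, value: str) -> Decimal:
    """Weighted average of the three sub-scores, rounded to one decimal place."""
    raw = (
        WEIGHTS["features"] * Decimal(features)
        + WEIGHTS["ease"] * Decimal(ease)
        + WEIGHTS["value"] * Decimal(value)
    )
    # Assumption: half-up rounding; the source does not specify the rounding mode.
    return raw.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


print(overall("8.7", "7.8", "8.6"))  # OpenRefine sub-scores from the table -> 8.4
print(overall("8.2", "7.1", "7.8"))  # Apache NiFi sub-scores from the table -> 7.8
```

For OpenRefine the raw sum is 3.48 + 2.34 + 2.58 = 8.40, which matches the 8.4/10 shown in the table. Apache NiFi lands exactly on 7.75, so its published 7.8/10 is consistent with half-up rounding.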

Frequently Asked Questions About Metadata Scrubbing Software

Which metadata scrubbing tools are best for interactive cleaning of messy tabular fields?

OpenRefine supports cluster-and-merge reconciliation with interactive labeling, which helps deduplicate and standardize metadata values inside a browser session. Trifacta Data Wrangler also supports interactive visual wrangling, but it focuses more on column-level transformations driven by profiling and guided recipes.

What tools handle metadata scrubbing in governed catalog workflows rather than standalone batch cleaning?

Alation Data Catalog and Collibra Data Catalog route scrubbing through stewardship and governance loops so cleaned fields stay aligned with searchable business metadata. Databricks Data Quality and AWS Glue Data Quality also embed remediation into platform governance workflows tied to catalog and ETL metadata.

Which option fits organizations that already run ingestion and governance on Databricks and Unity Catalog?

Databricks Data Quality detects schema drift and evaluates constraints and expectations in Unity Catalog-aware contexts. It can trigger scrubbing actions from Databricks jobs and notebooks after profiling identifies metadata quality issues.

Which tool best integrates metadata scrubbing with AWS Glue ETL pipelines and the AWS Glue Data Catalog?

AWS Glue Data Quality builds rulesets for profiling and validating table and column values using the same cataloged metadata that powers schema discovery. It runs quality evaluations as part of batch and streaming-oriented pipeline patterns and outputs structured results in AWS for review.

Which tool is suited for continuous, auditable in-flight metadata changes across event-driven dataflows?

Apache NiFi excels when scrubbing must run continuously by scheduling flows and managing retries with backpressure controls. Its provenance tracking plus record-based processors such as UpdateRecord and RecordPath help audit what metadata changed and when.

How do dbt projects use metadata scrubbing without a dedicated scrubbing UI?

dbt enforces consistent naming, tagging, and documentation through versioned models and docs artifacts. Downstream teams can discover impact and ownership through dbt lineage and compiled documentation, and tests help keep metadata conventions consistent.

Which tools are strongest for standardizing column names, formats, and data types across incoming files?

Trifacta Data Wrangler generates repeatable transformation steps based on column profiling signals that detect inconsistent schemas across files. Talend Data Quality also supports standardization by applying metadata-driven rules after profiling and value matching identify offending fields.

What should be considered when scrubbing requires lineage-aware repairs tied to upstream sources?

Soda Core links observability outcomes to schema and lineage context so repairs map to specific fields and upstream sources. Apache NiFi can also improve lineage via its UI and provenance, but Soda Core emphasizes rule-driven metadata repair tied to data lineage context.

How can enterprise governance tools ensure scrubbing outcomes become searchable business metadata?

Alation Data Catalog connects scrubbing results to stewardship workflows so cleaned names, classifications, and descriptions are visible to consumers. Collibra Data Catalog similarly emphasizes relationship mapping between assets, terms, and ownership while routing corrections with audit trails and role-based controls.

