GITNUX SOFTWARE ADVICE

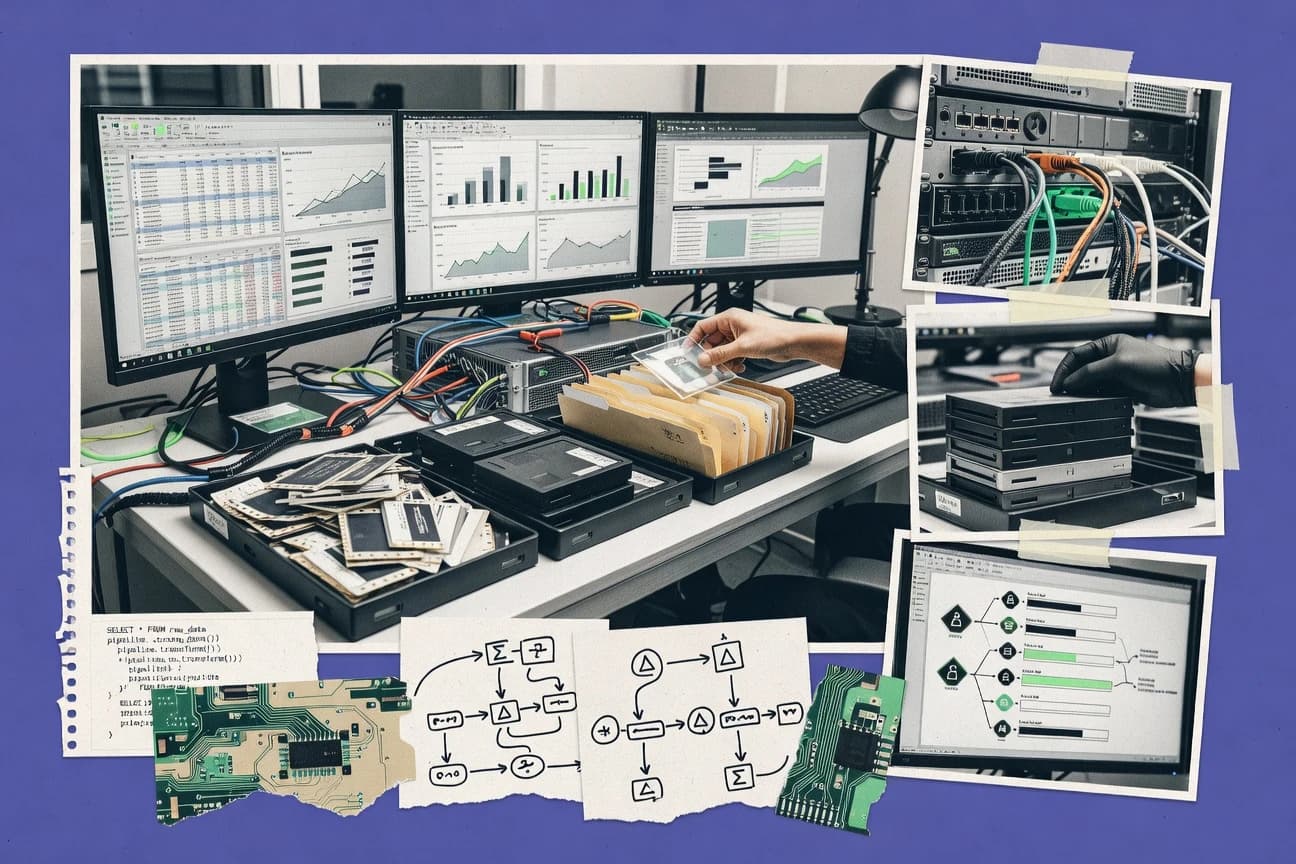

Top 10 Best Data ETL Software of 2026

Discover top data ETL software solutions for seamless integration. Compare features, choose the best, and optimize your workflow today.

How we ranked these tools

Core product claims cross-referenced against official documentation, changelogs, and independent technical reviews.

Analyzed video reviews and hundreds of written evaluations to capture real-world user experiences with each tool.

AI persona simulations modeled how different user types would experience each tool across common use cases and workflows.

Final rankings reviewed and approved by our editorial team with authority to override AI-generated scores based on domain expertise.

Score: Features 40% · Ease 30% · Value 30%
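As a rough illustration of the weighting above, the sketch below combines three sub-scores into one number. It assumes the overall score is a plain weighted average, which this page does not confirm, and the name `weighted_score` is hypothetical. Published overall scores can differ from this calculation because the editorial team may override computed values.

```python
# Weights copied from the scoring line above; everything else is illustrative.
WEIGHTS = {"features": 0.40, "ease": 0.30, "value": 0.30}


def weighted_score(features: float, ease: float, value: float) -> float:
    """Combine three sub-scores (0-10 scale) using the stated weights."""
    total = (
        WEIGHTS["features"] * features
        + WEIGHTS["ease"] * ease
        + WEIGHTS["value"] * value
    )
    return round(total, 2)


# Sub-scores for Fivetran, taken from the comparison table below.
print(weighted_score(features=9.0, ease=8.9, value=7.8))
```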

Gitnux may earn a commission through links on this page; this does not influence rankings.

Editor picks

Three quick recommendations before you dive into the full comparison below — each one leads on a different dimension.

Apache Airflow

DAG-based scheduling with backfills and dependency-aware task execution

Built for teams building maintainable, dependency-rich ETL pipelines with strong observability.

AWS Glue

Glue Data Catalog with crawlers and schema inference powering incremental ETL via job bookmarks

Built for AWS-first teams building scalable Spark ETL with managed catalog and incremental runs.

Azure Data Factory

Integration Runtime enables secure hybrid connectivity for both data transfer and execution.

Built for teams building cloud and hybrid ETL pipelines with strong Azure integration.

Comparison Table

This comparison table maps key Data ETL tools side by side so you can evaluate how they design, schedule, and run data pipelines. You’ll compare orchestration and workflow features in platforms like Apache Airflow with managed ingestion and transformation services like AWS Glue, Azure Data Factory, and Google Cloud Dataflow, alongside SaaS connectors from Fivetran. The goal is to help you match each tool’s strengths to your integration, transformation, and operational needs.

| # | Tool | Description | Category | Overall | Features | Ease of Use | Value |
|---|---|---|---|---|---|---|---|
| 1 | Apache Airflow | Orchestrates data pipelines with scheduled and event-driven workflows using DAGs, task retries, and dependency tracking. | open-source orchestrator | 9.1/10 | 9.4/10 | 7.8/10 | 8.7/10 |
| 2 | AWS Glue | Runs managed ETL jobs that transform data with Spark-based scripts and integrates with the AWS data catalog. | managed ETL | 8.2/10 | 8.7/10 | 7.8/10 | 7.6/10 |
| 3 | Azure Data Factory | Moves and transforms data across sources using visual pipelines, mapping data flows, and orchestration with managed triggers. | cloud ETL orchestration | 8.6/10 | 9.2/10 | 7.8/10 | 8.1/10 |
| 4 | Google Cloud Dataflow | Executes batch and streaming ETL transformations using the Apache Beam programming model with autoscaling and managed execution. | streaming ETL | 8.7/10 | 9.2/10 | 7.8/10 | 8.4/10 |
| 5 | Fivetran | Continuously replicates data from SaaS and databases into warehouses using connectors and automated schema management. | ELT automation | 8.6/10 | 9.0/10 | 8.9/10 | 7.8/10 |
| 6 | Matillion ETL | Builds ELT pipelines for cloud warehouses with a web interface that generates transformations and manages job dependencies. | warehouse ELT | 8.1/10 | 8.6/10 | 7.8/10 | 7.9/10 |
| 7 | dbt Cloud | Transforms analytics-ready data in warehouses using SQL models, version control workflows, and CI-friendly runs. | SQL transformations | 8.0/10 | 8.6/10 | 8.2/10 | 7.6/10 |
| 8 | Singer | Standardizes ETL taps and targets so connectors can extract data from sources and load it into destinations using JSON schemas. | connector framework | 8.1/10 | 7.6/10 | 7.3/10 | 8.7/10 |
| 9 | Meltano | Orchestrates ELT pipelines by running Singer-based taps and targets with scheduling, versioned configs, and logs. | open-source ELT | 7.6/10 | 8.4/10 | 6.9/10 | 7.8/10 |
| 10 | Talend | Designs and runs ETL workflows with data integration jobs and manages connectivity across on-prem and cloud systems. | enterprise integration | 7.2/10 | 8.0/10 | 6.8/10 | 6.9/10 |

Apache Airflow

open-source orchestrator · Orchestrates data pipelines with scheduled and event-driven workflows using DAGs, task retries, and dependency tracking.

DAG-based scheduling with backfills and dependency-aware task execution

Apache Airflow stands out for orchestrating ETL and data workflows with code-defined Directed Acyclic Graphs that can express complex dependencies. It provides a scheduler, web UI, and extensible operators and hooks for moving and transforming data across many systems. Airflow supports retries, backfills, and rich execution metadata so teams can monitor and troubleshoot pipeline runs. It also supports distributed execution with worker components and flexible configuration for production deployments.

Pros

- Code-defined DAGs model complex ETL dependencies with clear lineage

- Built-in retries, schedules, and backfills support reliable recurring processing

- Rich web UI shows run status, logs, and task-level execution history

- Large ecosystem of operators and hooks covers common data platforms

Cons

- Operational overhead is higher than lightweight ETL tools

- State and concurrency tuning can be complex in production environments

- Python-first authoring requires software engineering skills for best results

Best For

Teams building maintainable, dependency-rich ETL pipelines with strong observability

AWS Glue

managed ETL · Runs managed ETL jobs that transform data with Spark-based scripts and integrates with the AWS data catalog.

Glue Data Catalog with crawlers and schema inference powering incremental ETL via job bookmarks

AWS Glue stands out for serverless ETL that runs managed Apache Spark and Python jobs on AWS data stores. It provides crawlers to infer schemas and auto-generate Data Catalog entries, then feeds those schemas into ETL workflows. Glue integrates tightly with S3 and the Glue Data Catalog, and it supports job bookmarks for incremental processing to avoid full re-runs. It also supports event-driven orchestration with triggers and can run visual and code-based transformations using Glue Studio.

Pros

- Serverless Spark ETL eliminates cluster provisioning and tuning overhead

- Glue crawlers populate the Glue Data Catalog from S3 sources

- Job bookmarks enable incremental ETL with less repeated data processing

- Glue Studio provides visual job authoring for common transformation patterns

- Tight integration with S3, IAM, and AWS observability tools

Cons

- AWS-centric workflows can be harder to operate outside the AWS stack

- Fine-grained cost control can be difficult with variable Spark workload sizing

- Schema evolution management can require extra ETL logic and catalog discipline

- Debugging distributed Spark failures needs more expertise than simple ETL tools

Best For

AWS-first teams building scalable Spark ETL with managed catalog and incremental runs

Azure Data Factory

cloud ETL orchestration · Moves and transforms data across sources using visual pipelines, mapping data flows, and orchestration with managed triggers.

Integration Runtime enables secure hybrid connectivity for both data transfer and execution.

Azure Data Factory stands out with a managed, code-friendly orchestration experience built for integrating cloud and on-prem data sources. It supports visual pipeline authoring with activity-based data movement, transformation via mapping data flows, and robust control flow for scheduling and dependencies. Pipelines execute on an Integration Runtime: the Azure-hosted runtime handles cloud-to-cloud transfers, and a self-hosted runtime provides on-prem connectivity. Tight integration with Azure services like Azure SQL, Synapse Analytics, and Azure Key Vault supports secure ingestion patterns and operational monitoring.

Pros

- Visual pipeline designer with granular activity control

- Mapping data flows provide scalable ETL without custom Spark code

- Integration Runtime supports both cloud and on-prem data movement

- Native security ties into Azure Key Vault for secret management

- Monitoring and retry controls help stabilize production ETL runs

Cons

- Debugging complex pipelines often requires deep activity-level inspection

- Data flow performance tuning can be nontrivial without ETL optimization knowledge

- Cost can rise quickly with frequent triggers and large data movement

Best For

Teams building cloud and hybrid ETL pipelines with strong Azure integration

Google Cloud Dataflow

streaming ETL · Executes batch and streaming ETL transformations using the Apache Beam programming model with autoscaling and managed execution.

Apache Beam runner with event-time windowing and triggers for streaming ETL

Google Cloud Dataflow stands out for running Apache Beam pipelines on Google-managed distributed workers for both batch and streaming ETL. It supports windowing, triggers, and event-time processing, which makes it strong for incremental data movement and near-real-time transformations. Built-in integrations with Google Cloud storage and messaging simplify common ETL patterns across Pub/Sub, BigQuery, and Cloud Storage. Operationally, you get autoscaling, job monitoring in the Cloud console, and a rich connector ecosystem for standard data sources and sinks.

Pros

- Apache Beam support enables one pipeline for batch and streaming ETL

- Event-time windowing, triggers, and watermarks fit complex streaming transformations

- Autoscaling workers reduce tuning effort during traffic changes

- Tight connectors for BigQuery, Pub/Sub, and Cloud Storage speed common ETL paths

Cons

- Beam programming model adds learning overhead for ETL teams

- Cost can rise quickly with high throughput streaming workloads

- Debugging distributed transforms is harder than with single-node ETL tools

Best For

Teams building Beam-based batch and streaming ETL on Google Cloud

Fivetran

ELT automation · Continuously replicates data from SaaS and databases into warehouses using connectors and automated schema management.

Automatic schema sync in managed connectors that updates destination tables.

Fivetran stands out for automated, continuously running data connectors that handle replication without custom pipeline code. It supports broad integration targets through managed connectors to sources and destinations, then applies schema and mapping management to keep tables aligned as data changes. Built-in orchestration and monitoring reduce operational overhead compared with DIY ETL jobs. It is strongest for ELT-style warehouse loading at scale with governance-friendly lineage and repeatable connector deployments.

Pros

- Managed connectors for many SaaS sources and common warehouses

- Automatic schema syncing reduces breakage when upstream fields change

- Continuous sync with scheduling and built-in monitoring

- Reusable connector configuration for faster environment setup

Cons

- Less suited for custom transformations that require full control

- Cost increases with data volume and connector count

- Complex multi-step business logic still needs external tooling

Best For

Teams standardizing reliable warehouse ingestion with minimal pipeline maintenance

Matillion ETL

warehouse ELT · Builds ELT pipelines for cloud warehouses with a web interface that generates transformations and manages job dependencies.

Visual job builder with SQL transformations for warehouse ETL

Matillion ETL stands out for its cloud-first approach that turns SQL-based transformations into guided ETL workflows in a web UI. It supports data loading, transformation, and orchestration across major warehouses with reusable jobs, scheduling, and dependency handling. Its strength is accelerating analytics pipelines with native connectors, transformation patterns, and integration with Git for versioning. It is less suited to fully managed orchestration across non-warehouse systems and may require warehouse-centric design.

Pros

- Warehouse-native transformations with SQL-focused job building

- Strong connector coverage for common cloud data sources

- Job orchestration with retries, dependencies, and scheduling

- Git integration supports versioned ETL code workflows

Cons

- Workflow design is most effective for warehouse-centric architectures

- Non-warehouse orchestration needs more custom integration

- Advanced governance and lineage can require extra setup

Best For

Cloud analytics teams building warehouse ETL with SQL-first workflows

dbt Cloud

SQL transformations · Transforms analytics-ready data in warehouses using SQL models, version control workflows, and CI-friendly runs.

Run history with model-level lineage and test results for end-to-end dbt observability

dbt Cloud focuses on managed dbt execution with job orchestration, run history, and built-in environment management. It supports SQL-based ELT transformations, including model dependencies, incremental materializations, and test-driven pipelines. Teams can collaborate with lineage views and role-based access while keeping CI-style runs and artifacts centralized. It fits best when your transformations are already expressed in dbt models rather than when you need a general-purpose ETL connector factory.

Pros

- Managed dbt runs remove the need to operate orchestration infrastructure

- Model lineage and dependency graphs make impact analysis straightforward

- Built-in job scheduling and run history speed up release operations

- Integrated testing gates prevent silent data quality regressions

- Incremental model support reduces compute by processing only changed data

Cons

- Best fit for dbt-driven ELT, not custom non-dbt ETL workflows

- Advanced connector and data movement patterns can require additional tooling

- Cost scales with usage and team size, which can strain smaller projects

- Transformations still require SQL model authoring in your dbt project

- Cross-system orchestration beyond dbt jobs needs external workflows

Best For

Teams running dbt ELT who want managed orchestration, testing, and lineage visibility

Singer

connector framework · Standardizes ETL taps and targets so connectors can extract data from sources and load it into destinations using JSON schemas.

Singer tap and target ecosystem support

Singer stands out by focusing on Singer taps and targets to move data through a standardized ETL interface across many sources. It enables you to run connectors that extract from source systems and load into targets, which fits teams that already rely on the Singer ecosystem. You get a workflow for orchestration and execution that supports batch style pipelines and repeatable runs. It is best suited to connector-based integration rather than building a large transformation layer inside the tool.

Pros

- Singer tap and target compatibility reduces custom connector work

- Connector-first architecture supports many data sources and destinations

- Repeatable pipeline runs make scheduled extraction and loading straightforward

Cons

- Transformation depth depends on external tooling, not built-in capabilities

- Connector setup can require technical configuration for reliable runs

- Complex orchestration needs may push teams to additional platforms

Best For

Teams building connector-driven ETL pipelines using Singer taps and targets
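To make the tap and target interface more concrete, here is a minimal sketch of the message flow, assuming the JSON-lines message types from the Singer specification (`SCHEMA`, `RECORD`, `STATE`). The `tap` and `target` functions and the `users` stream are hypothetical stand-ins written for illustration, not real connectors.

```python
import json


def tap(rows, stream="users"):
    """Yield Singer-style JSON lines: one SCHEMA, the RECORDs, then a STATE."""
    yield json.dumps({
        "type": "SCHEMA",
        "stream": stream,
        "schema": {"properties": {"id": {"type": "integer"},
                                  "name": {"type": "string"}}},
        "key_properties": ["id"],
    })
    for row in rows:
        yield json.dumps({"type": "RECORD", "stream": stream, "record": row})
    if rows:
        # STATE carries a bookmark so the next run can resume from it.
        yield json.dumps({"type": "STATE", "value": {stream: rows[-1]["id"]}})


def target(lines):
    """Collect records per stream and remember the last STATE value seen."""
    loaded, state = {}, None
    for line in lines:
        message = json.loads(line)
        if message["type"] == "RECORD":
            loaded.setdefault(message["stream"], []).append(message["record"])
        elif message["type"] == "STATE":
            state = message["value"]
    return loaded, state


rows = [{"id": 1, "name": "Ada"}, {"id": 2, "name": "Grace"}]
loaded, state = target(tap(rows))
print(loaded, state)
```

In a real deployment the tap writes these lines to standard output and the target reads them from standard input, so the two programs are typically joined with a shell pipe rather than a function call.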

Meltano

open-source ELT · Orchestrates ELT pipelines by running Singer-based taps and targets with scheduling, versioned configs, and logs.

Meltano's Singer tap and target runner with a plugin system for standardized pipelines

Meltano stands out for turning data workflows into versioned code using Singer taps and targets with orchestration from the Meltano command line and UI. It supports ELT-style pipelines, incremental syncs, and scheduling that runs existing extraction and load components. The Meltano Plugin system lets you add connectors for common sources and destinations while keeping configurations consistent across projects. It is strongest for teams that want repeatable ETL pipelines with Git-based management rather than a fully managed drag-and-drop stack.

Pros

- Singer-based tap and target ecosystem speeds up source and destination integrations

- Plugin framework centralizes reusable pipeline components across projects

- Git-friendly orchestration supports reproducible runs and code review

- Incremental syncs reduce load on APIs and storage

Cons

- Connector setup often requires manual configuration and debugging

- Operational control is more engineering-focused than business-user friendly

- Complex transformations still require external logic or tools

Best For

Teams building code-managed ELT pipelines from many sources into warehouses

Talend

enterprise integration · Designs and runs ETL workflows with data integration jobs and manages connectivity across on-prem and cloud systems.

Data Quality components with rule-based validations and profiling for improving trust in ETL outputs

Talend stands out for combining visual data integration with code generation through its Studio for designing ETL jobs and streaming or batch pipelines. It supports connectivity to common databases and SaaS sources, data quality checks, and orchestration with scheduling and job dependency management. Talend also offers governance tooling for metadata management and lineage so teams can track where data comes from and how it changes. Enterprise deployments typically focus on scale and manageability across multiple environments and teams.

Pros

- Talend Studio's visual designer with code generation speeds up ETL development

- Strong data quality and profiling capabilities for production datasets

- Metadata, lineage, and governance features support compliance workflows

- Broad connector ecosystem covers databases and many SaaS sources

Cons

- Job design can become complex for large pipelines

- Advanced governance and administration add overhead for small teams

- Licensing costs can outweigh value for lightweight ETL needs

Best For

Enterprises modernizing batch and streaming ETL with governance requirements

Conclusion

After evaluating 10 data ETL tools, we chose Apache Airflow as our overall top pick. It scored highest across our combined criteria of features, ease of use, and value, which is why it sits at #1 in the rankings above.

Use the comparison table and detailed reviews above to validate the fit against your own requirements before committing to a tool.

How to Choose the Right Data ETL Software

This buyer's guide explains how to select Data ETL software for scheduled batch pipelines, hybrid connectivity, and modern warehouse and analytics workflows. It shows how Apache Airflow, AWS Glue, Azure Data Factory, Google Cloud Dataflow, and dbt Cloud map to different ETL delivery models. It then covers feature requirements, common traps, and a clear decision path that also draws on the rest of the tool set: Fivetran, Matillion ETL, Singer, Meltano, and Talend.

What Is Data ETL Software?

Data ETL software moves data from sources into target systems and transforms it into analytics-ready or operationally usable structures. It solves pipeline orchestration, incremental processing, and operational observability problems that appear when data flows must run repeatedly and reliably. Tools like Apache Airflow orchestrate dependency-rich workflows with scheduled and event-driven DAG runs. Tools like AWS Glue run managed Spark-based ETL jobs with schema inference and incremental processing via job bookmarks.

Key Features to Look For

The right ETL features determine whether your team spends time building pipelines or operates fragile jobs with slow troubleshooting.

Dependency-aware orchestration with DAG scheduling and backfills

Apache Airflow models ETL dependencies with code-defined DAGs and executes tasks with dependency tracking. It also supports backfills and retries, which helps recover from upstream changes without manual rework.
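The dependency ordering and retry behavior described above can be sketched in plain Python. This is not Airflow's API: it uses the standard library's `graphlib.TopologicalSorter`, and the task names, `run_with_retries`, and `run_pipeline` are hypothetical. Backfills are not modeled; the sketch covers a single run only.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract_orders": set(),
    "extract_customers": set(),
    "transform_join": {"extract_orders", "extract_customers"},
    "load_warehouse": {"transform_join"},
}


def run_with_retries(task, action, max_retries=2):
    """Call action(task), retrying on RuntimeError up to max_retries times."""
    for attempt in range(max_retries + 1):
        try:
            return action(task)
        except RuntimeError:
            if attempt == max_retries:
                raise


def run_pipeline(dependencies, action, max_retries=2):
    """Run each task only after its upstream tasks; return execution order."""
    order = []
    for task in TopologicalSorter(dependencies).static_order():
        run_with_retries(task, action, max_retries)
        order.append(task)
    return order


# Simulate one transient failure in the transform step.
failures = {"transform_join": 1}


def flaky_action(task):
    if failures.get(task, 0) > 0:
        failures[task] -= 1
        raise RuntimeError(f"{task} failed transiently")
    return task


executed = run_pipeline(dependencies, flaky_action)
print(executed)
```

Both extract tasks always run before `transform_join`, and the simulated transient failure is absorbed by the retry loop instead of failing the whole run.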

Managed incremental ETL with catalog-driven schema inference

AWS Glue uses Glue crawlers to infer schemas into the Glue Data Catalog and then runs incremental ETL using job bookmarks. This combination targets incremental processing while reducing full re-runs when source data changes.
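The idea behind bookmark-style incremental processing can be illustrated with a small sketch. This is a simplified model and not how AWS Glue implements job bookmarks internally: it assumes each record carries an `updated_at` value that sorts chronologically, and `process_incrementally` and the `state` dictionary are hypothetical names.

```python
def process_incrementally(records, state, key="updated_at"):
    """Return only records newer than the bookmark, then advance the bookmark."""
    last_seen = state.get("last_seen")
    new_records = [
        r for r in records if last_seen is None or r[key] > last_seen
    ]
    if new_records:
        # Persisting this value between runs is what avoids full re-runs.
        state["last_seen"] = max(r[key] for r in new_records)
    return new_records


state = {}  # stands in for bookmark state stored between job runs
batch = [
    {"id": 1, "updated_at": "2026-01-01"},
    {"id": 2, "updated_at": "2026-01-02"},
]
first = process_incrementally(batch, state)

# A later run sees the same source plus one new row.
batch.append({"id": 3, "updated_at": "2026-01-03"})
second = process_incrementally(batch, state)
print(len(first), [r["id"] for r in second])
```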

Hybrid and secure connectivity via an execution runtime

Azure Data Factory runs pipelines on an Integration Runtime: the Azure-hosted runtime covers cloud-to-cloud transfers, and a self-hosted runtime reaches on-prem sources. It also integrates with Azure Key Vault for secret management, which reduces exposure when pipelines need credentials.

Streaming-ready transformations with event-time windowing and triggers

Google Cloud Dataflow runs Apache Beam pipelines and provides event-time windowing, triggers, and watermarks for event-time correctness. This is a strong fit when ETL must handle near-real-time updates and late-arriving data.
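Event-time windowing with late data can be sketched without any streaming framework. The example below is a plain-Python approximation and does not use the Apache Beam API: the watermark is assumed to be the largest event time seen so far, triggers are not modeled, and `count_by_window` and its parameters are hypothetical.

```python
from collections import defaultdict


def count_by_window(event_times, size=60, allowed_lateness=30):
    """Count events per fixed event-time window; drop events that are too late.

    event_times: event timestamps in seconds, listed in arrival order.
    """
    counts = defaultdict(int)
    dropped = []
    watermark = float("-inf")  # simplified: max event time seen so far
    for event_time in event_times:
        start = event_time - (event_time % size)  # window containing the event
        if start + size + allowed_lateness <= watermark:
            dropped.append(event_time)  # window already closed for good
        else:
            counts[start] += 1
        watermark = max(watermark, event_time)
    return dict(counts), dropped


# The event at t=50 arrives out of order but within the allowed lateness;
# the event at t=10 arrives after its window has expired.
counts, dropped = count_by_window([5, 42, 61, 50, 130, 10])
print(counts, dropped)
```

Grouping by event time instead of arrival time is what lets the out-of-order event at `t=50` still land in the first window.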

Automated schema synchronization for continuous warehouse ingestion

Fivetran continuously replicates data with managed connectors and automatically applies schema synchronization to destination tables. This reduces breakage when upstream fields change and keeps warehouse loads stable.
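A minimal sketch of additive schema synchronization follows. It is an illustration of the general idea, not Fivetran's implementation: the destination table is modeled as a dictionary of column names to type names, and `sync_schema` is a hypothetical helper.

```python
def sync_schema(destination_columns, record):
    """Add columns for any fields the destination lacks; return the new names."""
    added = []
    for field, value in record.items():
        if field not in destination_columns:
            # Infer a type name from the first value seen (illustrative only).
            destination_columns[field] = type(value).__name__
            added.append(field)
    return added


destination = {"id": "int", "email": "str"}
record = {"id": 7, "email": "a@example.com", "plan": "pro"}  # upstream added "plan"

added = sync_schema(destination, record)
print(added, destination)
```

Because the new column is added before the load, the upstream change does not break the destination table.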

Model-level lineage, test gates, and managed dbt execution

dbt Cloud focuses on managed dbt runs with run history that includes model-level lineage and test results. It also supports incremental model execution, which reduces compute by processing only changed data.
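The test-gate idea can be sketched as a check that runs before results are published. The functions below are plain-Python stand-ins named after dbt's built-in `unique` and `not_null` generic tests; they are not dbt code, and `gate` and the sample rows are hypothetical.

```python
def not_null(rows, column):
    """Return rows where the column is missing or None."""
    return [r for r in rows if r.get(column) is None]


def unique(rows, column):
    """Return rows whose column value was already seen in an earlier row."""
    seen, duplicates = set(), []
    for r in rows:
        value = r.get(column)
        if value in seen:
            duplicates.append(r)
        seen.add(value)
    return duplicates


def gate(rows, checks):
    """Run each (name, check, column); return (passed, failure counts)."""
    failures = {}
    for name, check, column in checks:
        bad_rows = check(rows, column)
        if bad_rows:
            failures[name] = len(bad_rows)
    return (not failures, failures)


rows = [
    {"order_id": 1, "customer_id": 10},
    {"order_id": 2, "customer_id": None},
    {"order_id": 2, "customer_id": 11},
]
checks = [
    ("order_id_unique", unique, "order_id"),
    ("customer_id_not_null", not_null, "customer_id"),
]
passed, failures = gate(rows, checks)
print(passed, failures)
```

A scheduler would publish the model only when `passed` is true, which is how a gate stops bad data from reaching downstream consumers unnoticed.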

How to Choose the Right Data ETL Software

Pick the tool that matches your orchestration model, transformation approach, and connectivity constraints rather than trying to force one platform to fit every workload.

Match orchestration to your dependency and scheduling needs

If your ETL has complex dependencies across many steps, use Apache Airflow because it schedules via DAGs and supports dependency-aware execution. If your pipelines are mainly warehouse transformations authored in dbt, use dbt Cloud so model dependencies, scheduling, and run history align to your dbt project.

Choose the transformation style your team can maintain

For warehouse-focused SQL transformations with guided workflow building, Matillion ETL turns SQL transformations into reusable jobs in a web interface. For scalable batch and streaming transformations in a unified framework, Google Cloud Dataflow runs Apache Beam pipelines using windowing and triggers that fit event-time ETL.

Decide how you want schemas and incrementality to work

If you want schema inference that feeds a managed catalog, AWS Glue uses Glue crawlers to populate the Glue Data Catalog and then drives incremental runs using job bookmarks. If you want continuous ingestion with reduced schema breakage, use Fivetran because managed connectors handle schema syncing into destination tables.

Validate connectivity requirements for hybrid and multi-environment runs

If you need on-prem connectivity and secret management inside the pipeline execution model, Azure Data Factory with Azure Integration Runtime and Azure Key Vault is built for hybrid scenarios. If you are already built around Singer taps and targets, use Singer or Meltano so your extraction and loading follow the Singer ecosystem and run with repeatable connector execution.

Confirm governance and quality needs for production pipelines

If you must enforce rule-based data quality checks and profiling for production datasets, Talend provides data quality components with rule-based validations and profiling. If you need end-to-end observability for dbt logic with test results and lineage, dbt Cloud ties run history and tests to model dependencies.

Who Needs Data ETL Software?

Data ETL software fits teams that need reliable repeatable data movement, controlled transformations, and operational visibility rather than one-off exports.

Teams building dependency-rich ETL with strong observability

Apache Airflow is the best match because DAG-based scheduling, dependency tracking, retries, and backfills support reliable recurring processing with detailed run status and task-level history. Teams that need maintainable ETL logic expressed as DAG code typically prefer Apache Airflow to orchestration layers that focus on visual steps.

AWS-first teams standardizing Spark-based incremental ETL

AWS Glue fits teams that want serverless Spark ETL with Glue crawlers populating the Glue Data Catalog. Job bookmarks support incremental processing so teams avoid full re-runs when data changes.

Cloud and hybrid integration teams executing ETL across on-prem and cloud

Azure Data Factory targets teams that need Azure-native security and hybrid connectivity: its Azure-hosted Integration Runtime handles cloud-to-cloud transfers, and a self-hosted Integration Runtime adds on-prem connectivity. Azure Key Vault integration supports secure ingestion patterns when pipelines need managed credentials.

Analytics engineering teams running SQL ELT with dbt workflows

dbt Cloud is designed for teams whose transformations already live in dbt models and who want managed orchestration. Built-in run history, model-level lineage, and integrated test gates support release operations without maintaining their own orchestration infrastructure.

Common Mistakes to Avoid

The most common failures come from choosing a tool that cannot match the workflow shape your data platform requires.

Forcing a warehouse ELT workflow tool onto broad ETL orchestration

Matillion ETL is strongest for warehouse-centric architectures because it focuses on SQL transformations and job orchestration around data loading and transformations. If you need general-purpose non-warehouse orchestration, Apache Airflow or Azure Data Factory provides more control over pipeline execution across systems.

Underestimating operational tuning and engineering overhead for DIY orchestration

Apache Airflow delivers powerful control via DAGs, but its Python-first authoring requires software engineering skills, and production deployments can involve concurrency and state tuning. Managed services such as AWS Glue reduce that operational overhead by running Spark jobs without cluster provisioning.

Skipping schema and incremental strategy for continuous ingestion

Fivetran reduces breakage by applying automatic schema synchronization into destination tables during continuous replication. AWS Glue also requires schema discipline because crawlers and job bookmarks depend on catalog accuracy for incremental behavior.

Trying to build deep transformation logic inside connector-first tools

Singer prioritizes Singer tap and target compatibility and keeps transformation depth dependent on external tooling. Fivetran handles replication well but is less suited for custom transformations that need full control, so pair it with a transformation layer when business logic is complex.

How We Selected and Ranked These Tools

We evaluated each tool on overall capability, feature depth, ease of use, and value fit for the workloads its design targets. We separated Apache Airflow from lower-ranked options because DAG-based scheduling with backfills and dependency-aware execution directly addresses complex ETL dependency graphs while also providing rich observability for pipeline runs. We also compared managed orchestration paths like dbt Cloud and AWS Glue against connector-led automation like Fivetran and Singer, then scored tools higher when their standout capabilities reduce operational overhead for the intended workflow type.

Frequently Asked Questions About Data ETL Software

Which ETL tool is best for dependency-rich batch pipelines with strong monitoring?

Apache Airflow models ETL as DAGs, so complex task dependencies, retries, and backfills are first-class features. Its web UI and execution metadata help teams troubleshoot pipeline failures across distributed workers.

What ETL option fits an AWS-first setup that needs serverless Spark and incremental loads?

AWS Glue runs managed Spark and Python ETL jobs without provisioning clusters. It uses crawlers to infer schemas and create Glue Data Catalog entries, then supports incremental processing through job bookmarks.

How do I choose between Azure Data Factory and Apache Airflow for hybrid integrations?

Azure Data Factory uses its Integration Runtime to connect cloud-to-cloud sources and, through a self-hosted runtime, on-prem sources. Apache Airflow can also orchestrate hybrid workflows, but Azure Data Factory provides a more managed runtime for ingestion and execution patterns tied to Azure services like Azure Key Vault.

Which tool is designed for streaming ETL with event-time windowing and triggers?

Google Cloud Dataflow runs Apache Beam pipelines on Google-managed distributed workers. It supports event-time windowing and triggers, which makes it well-suited for near-real-time transformations driven by Pub/Sub events.

Which ETL tool minimizes custom pipeline code by automating replication and schema changes?

Fivetran uses managed connectors to replicate data continuously without writing ETL code. It automatically syncs schemas to keep destination tables aligned as source structures evolve.

When should I use Matillion ETL instead of writing a fully coded orchestrator?

Matillion ETL turns SQL transformations into guided ETL workflows in a web UI. It pairs warehouse-centric execution with reusable jobs, scheduling, and dependency handling for teams that want SQL-first development rather than building orchestration code.

How do I handle data quality and trust requirements in an enterprise ETL workflow?

Talend includes data quality components with rule-based validations and profiling to improve confidence in ETL outputs. Azure Data Factory and Apache Airflow can orchestrate quality checks, but Talend provides built-in quality tooling designed for governance-heavy environments.

What should I use for dbt-style ELT when I need managed runs, testing, and lineage?

dbt Cloud is built to manage dbt execution with run history, model-level lineage, and centralized artifacts. It also supports incremental materializations and test-driven pipelines that keep ELT behavior consistent across environments.

Which option fits an ecosystem built around Singer taps and targets?

Singer focuses on standardized tap and target connectors that move data through an ETL interface. Meltano extends that approach by orchestrating Singer taps and targets with a Git-friendly, command-line and UI workflow for repeatable pipelines.

Why would I choose Singer or Meltano over a general ETL orchestrator?

Singer is best when you want connector-driven integration and a consistent tap-target workflow rather than building a transformation layer inside the tool. Meltano is a strong fit when you want the same Singer connector approach but with versioned pipeline configuration and execution managed via Meltano’s plugin system.

Tools reviewed

Referenced in the comparison table and product reviews above.
